Overview

Vertex AI is Google’s unified ML platform providing access to Google’s Gemini models, Anthropic Claude models, and other third-party LLMs through a single API. Bifrost performs conversions including:

- Multi-model support - Unified interface for Gemini, Anthropic, and third-party models

- OAuth2 authentication - Service account credentials with automatic token refresh

- Project and region management - Automatic endpoint construction from GCP project/region

- Model routing - Automatic provider detection (Gemini vs Anthropic) based on model name

- Request conversion - Conversion to underlying provider format (Gemini or Anthropic)

- Embeddings support - Vector generation with task type and truncation options

- Model discovery - Paginated model listing with deployment information

Supported Operations

| Operation | Non-Streaming | Streaming | Endpoint |
|---|---|---|---|
| Chat Completions | ✅ | ✅ | /generate |
| Responses API | ✅ | ✅ | /messages |
| Embeddings | ✅ | - | /embeddings |
| Image Generation | ✅ | - | /generateContent or /predict (Imagen) |
| Image Edit | ✅ | - | /generateContent or /predict (Imagen) |
| Video Generation | ✅ | - | /predictLongRunning (Veo models only) |
| Image Variation | ❌ | - | Not supported |
| List Models | ✅ | - | /models |
| Text Completions | ❌ | ❌ | - |
| Speech (TTS) | ❌ | ❌ | - |
| Transcriptions (STT) | ❌ | ❌ | - |
| Files | ❌ | ❌ | - |
| Batch | ❌ | ❌ | - |

Unsupported Operations (❌): Text Completions, Speech, Transcriptions, Files, and Batch are not supported by Vertex AI. These return UnsupportedOperationError.

Vertex-specific: Endpoints vary by model type. The Responses API is available for both Gemini and Anthropic models.

Setup & Configuration

Vertex AI requires Google Cloud project configuration and authentication credentials. Three authentication methods are supported.

The aliases field (mapping model names to fine-tuned model IDs or endpoint identifiers) requires v1.5.0-prerelease2 or later. On v1.4.x, use deployments inside vertex_key_config instead - see the v1.5.0 Migration Guide for details.

1. Service Account JSON (Recommended for Production)

Provide a credential JSON string in auth_credentials. The JSON must contain a type field. Supported types: service_account (most common), impersonated_service_account, authorized_user, external_account, external_account_authorized_user.

- Web UI


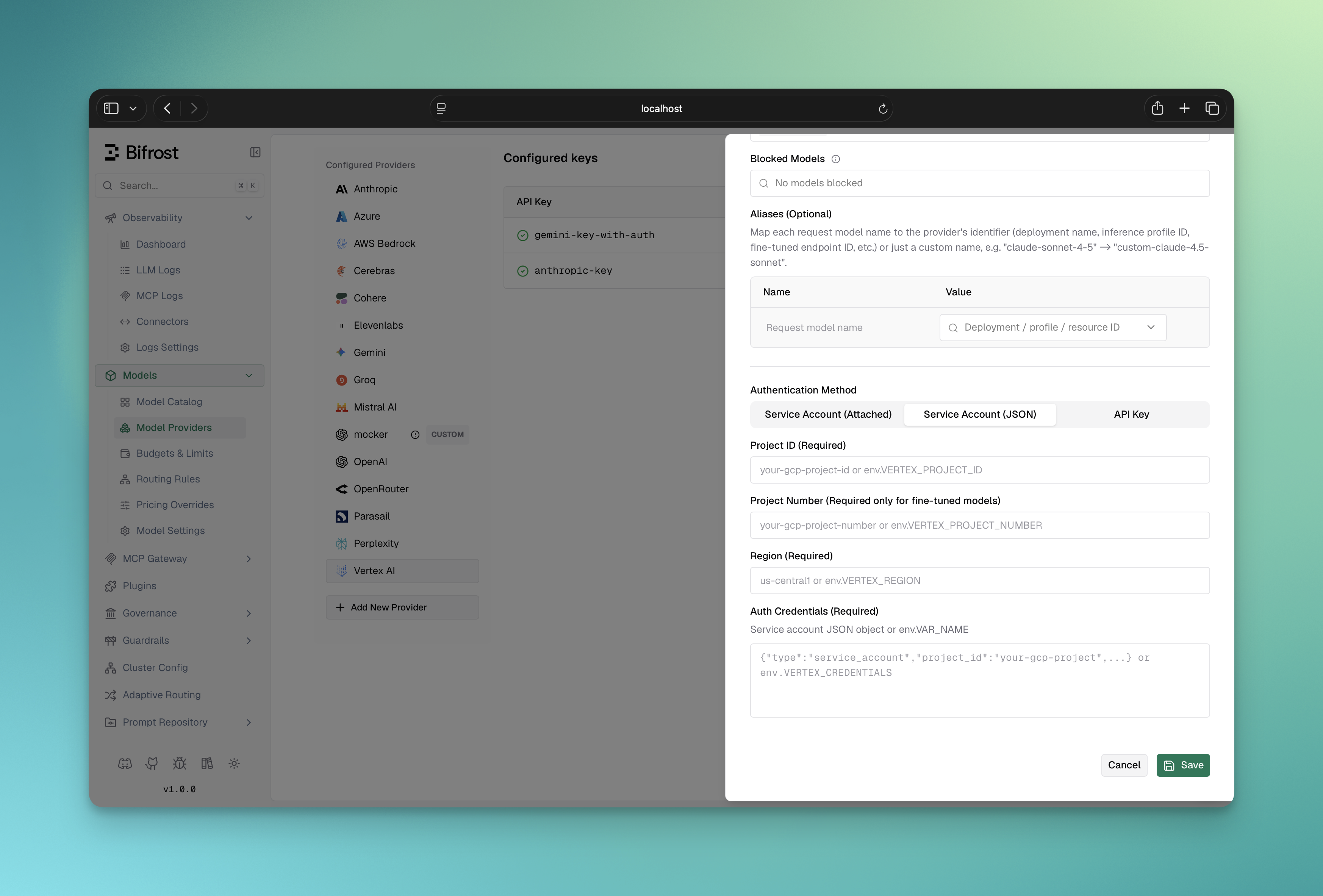

- Navigate to “Model Providers” → “Configurations” → “Google Vertex”

- Click “Add Key” (or edit an existing key)

- Under Authentication Method, select “Service Account (JSON)”

- Set Project ID: Your Google Cloud project ID

- Set Project Number (Required only for fine-tuned models): Your GCP project number; leave blank for standard models

- Set Region: e.g., us-central1

- Set Auth Credentials: Paste your service account JSON or reference an env var (e.g., env.VERTEX_CREDENTIALS)

- Configure Aliases: Map model names to fine-tuned model IDs (if using fine-tuned models)

- Save

2. Application Default Credentials

Leave auth_credentials empty. Bifrost calls google.FindDefaultCredentials() - Google’s ADC library - which resolves credentials in this order:

- GOOGLE_APPLICATION_CREDENTIALS env var (path to a JSON credential file)

- Application default credential file (~/.config/gcloud/application_default_credentials.json, written by gcloud auth application-default login)

- GCE/GKE/Cloud Run/App Engine metadata server (attached service account or Workload Identity)
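The resolution order above can be pictured with a small Python sketch. This is a simplified model for illustration only, not Google's ADC implementation: the function name `describe_adc_source` and the `on_gcp_runtime` flag are hypothetical, and the real library also validates the file contents.

```python
from pathlib import Path

# Relative location of the file written by `gcloud auth application-default login`.
WELL_KNOWN_FILE = Path(".config") / "gcloud" / "application_default_credentials.json"


def describe_adc_source(environ, home, on_gcp_runtime):
    """Return which credential source would win, following the documented order.

    environ: mapping of environment variables
    home: home directory to look under for the gcloud credential file
    on_gcp_runtime: True when a GCE/GKE/Cloud Run/App Engine metadata server is reachable
    (hypothetical flag; the real library probes the metadata server itself)
    """
    explicit = environ.get("GOOGLE_APPLICATION_CREDENTIALS")
    if explicit:
        return f"env var file: {explicit}"

    well_known = Path(home) / WELL_KNOWN_FILE
    if well_known.is_file():
        return f"gcloud file: {well_known}"

    if on_gcp_runtime:
        return "metadata server"

    return "no default credentials found"
```

The last branch corresponds to the "could not find default credentials" symptom listed under Troubleshooting.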

- Web UI


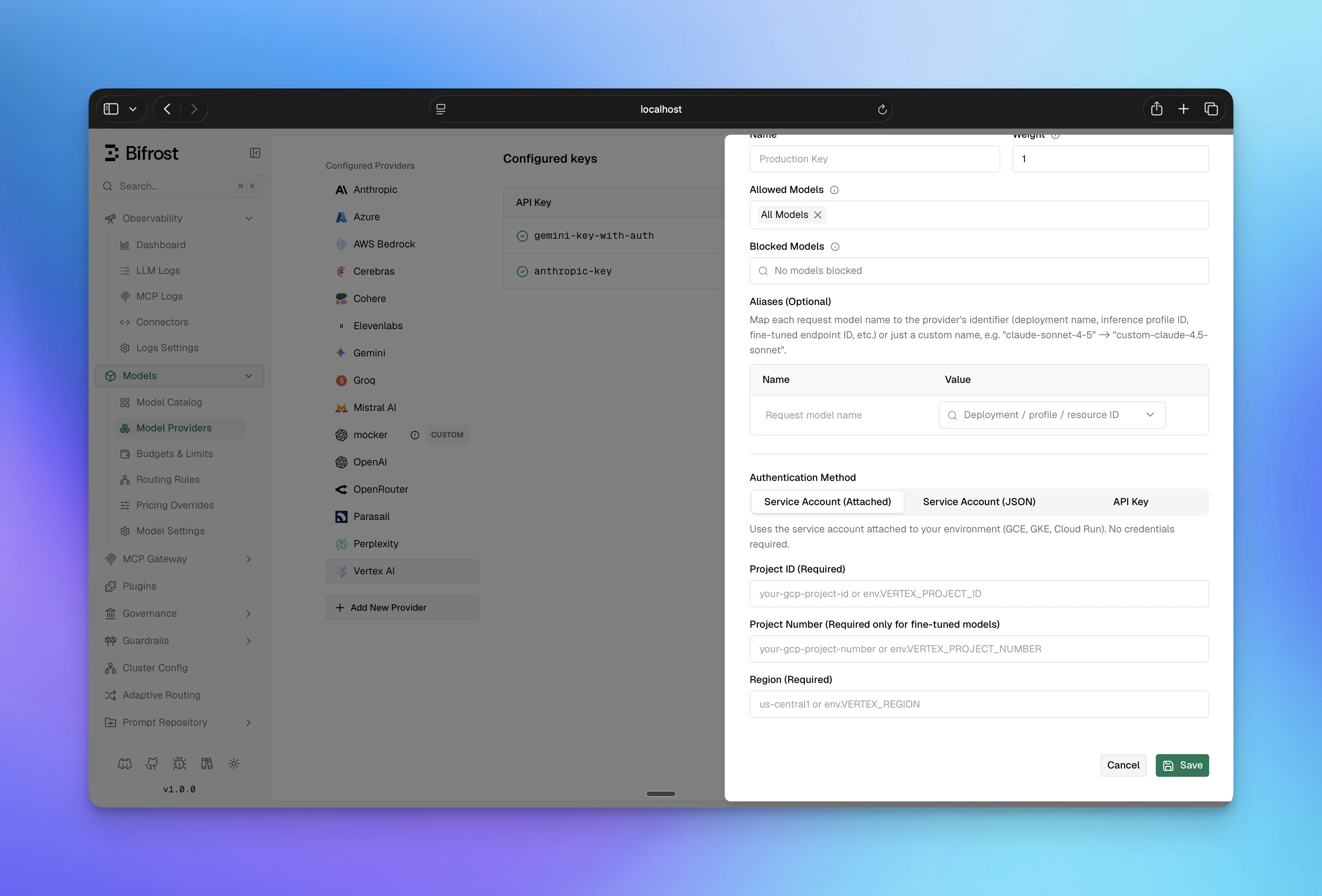

- Navigate to “Model Providers” → “Configurations” → “Google Vertex”

- Click “Add Key” (or edit an existing key)

- Under Authentication Method, select “Service Account (Attached)”

- Set Project ID: Your Google Cloud project ID

- Set Project Number (Required only for fine-tuned models): Your GCP project number; leave blank for standard models

- Set Region: e.g., us-central1

- Configure Aliases if needed

- Save

Ensure that GOOGLE_APPLICATION_CREDENTIALS is set in your environment, or that Workload Identity / gcloud is configured.

3. API Key (Gemini and Fine-Tuned Models Only)

Set value to your Vertex API key. API key authentication is supported only for Gemini models and fine-tuned Gemini models. For Anthropic models on Vertex, use Service Account or Application Default Credentials.

- Web UI


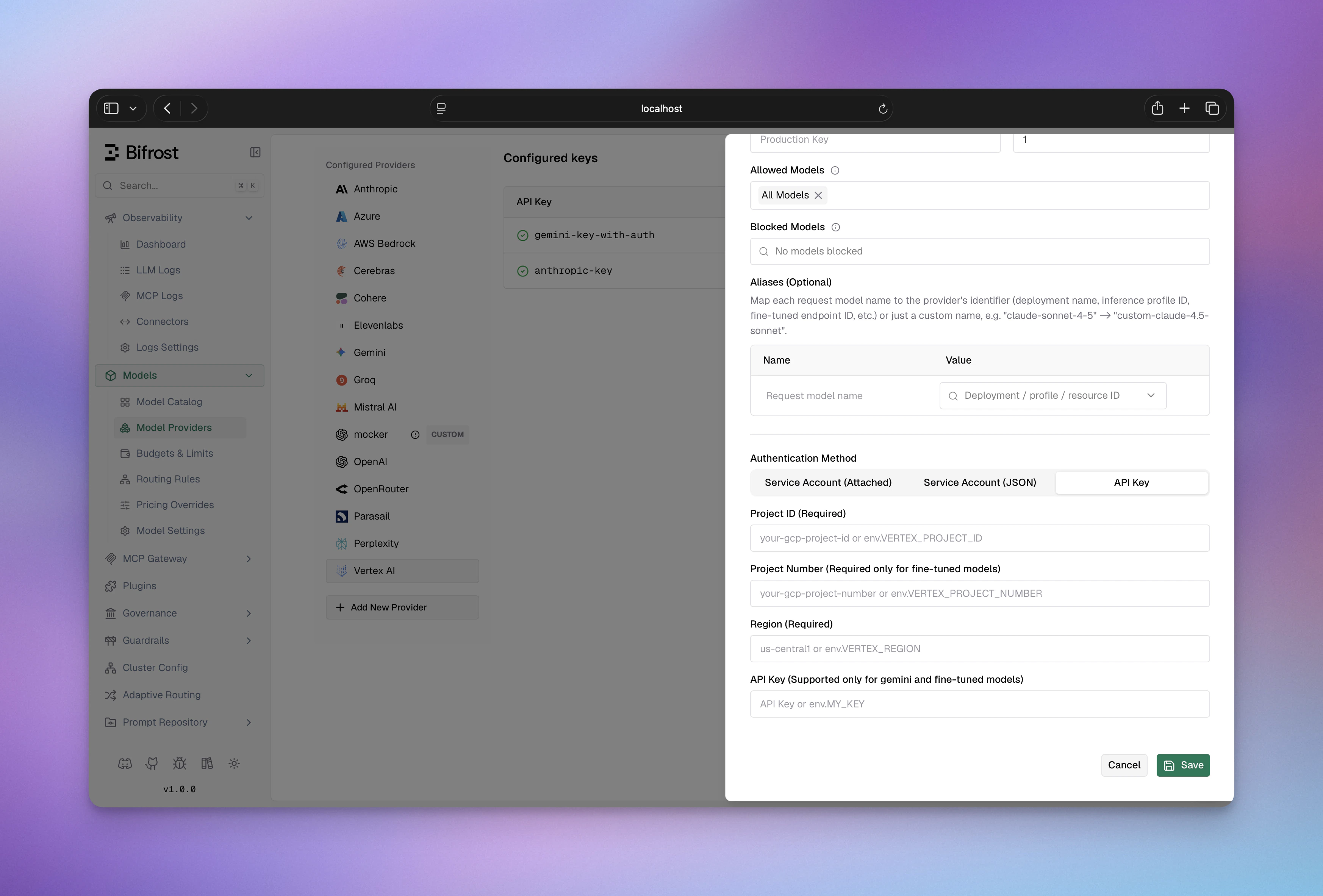

- Navigate to “Model Providers” → “Configurations” → “Google Vertex”

- Click “Add Key” (or edit an existing key)

- Under Authentication Method, select “API Key”

- Set API Key: Your Vertex AI API key

- Set Project ID: Your Google Cloud project ID

- Set Project Number (Required only for fine-tuned models): Your GCP project number; leave blank for standard models

- Set Region: e.g., us-central1

- Configure Aliases: Map short names to fine-tuned model IDs (e.g., my-model → 123456789)

- Save

Vertex AI support for fine-tuned models is currently in beta. Requests to

non-Gemini fine-tuned models may fail, so please test and report any issues.

vertex_key_config fields:

| Field | Required | Description |
|---|---|---|
| project_id | Yes | Google Cloud project ID |
| region | Yes | GCP region (e.g., us-central1, eu-west1, global) |
| auth_credentials | No | Service account JSON string (leave empty for ADC) |
| project_number | No | GCP project number (required for fine-tuned models) |

Key-level fields:

| Field | Required | Description |
|---|---|---|
| value | No | Vertex API key (Gemini and fine-tuned models only; leave empty for Service Account / ADC) |
| aliases | No | Map model names to fine-tuned model IDs or endpoint identifiers (v1.5.0-prerelease2+) |
| models | Yes | Models this key can serve; use ["*"] to allow all |
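As a rough illustration of how the fields in the two tables fit together, the Python sketch below assembles one key entry and checks the required fields. The helper names `build_vertex_key` and `validate_vertex_key` are hypothetical, and the nesting (vertex_key_config sitting next to value, aliases, and models inside one key object) is an assumption; check it against your actual config.json before relying on it.

```python
import json


def build_vertex_key(project_id, region, models, auth_credentials="",
                     project_number="", value="", aliases=None):
    """Assemble a key entry from the documented fields (nesting is assumed)."""
    key = {
        "value": value,  # leave empty for Service Account / ADC
        "models": list(models),
        "vertex_key_config": {
            "project_id": project_id,
            "region": region,
            "auth_credentials": auth_credentials,  # leave empty for ADC
        },
    }
    if project_number:
        key["vertex_key_config"]["project_number"] = project_number
    if aliases:
        key["aliases"] = dict(aliases)
    return key


def validate_vertex_key(key):
    """Return a list of problems; an empty list means the entry looks complete."""
    problems = []
    config = key.get("vertex_key_config", {})
    for field in ("project_id", "region"):
        if not config.get(field):
            problems.append(f"vertex_key_config.{field} is required")
    if not key.get("models"):
        problems.append('models is required; use ["*"] to allow all')
    # Assumption: a digit-only alias target denotes a fine-tuned model,
    # which the table says needs project_number.
    uses_fine_tuned = any(str(t).isdigit() for t in key.get("aliases", {}).values())
    if uses_fine_tuned and not config.get("project_number"):
        problems.append("vertex_key_config.project_number is required for fine-tuned models")
    return problems


example = build_vertex_key("my-project", "us-central1", ["*"])
print(json.dumps(example, indent=2))
```

Running the validation before saving a key catches the most common omissions (missing project, missing region, empty models list) early.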

GKE Workload Identity Federation

When running Bifrost on GKE, Workload Identity Federation (WIF) lets pods authenticate to Vertex AI without managing service account keys. The pod inherits an IAM identity through the Kubernetes ServiceAccount, and Bifrost picks it up automatically via Application Default Credentials. What you need:

- The GCP-side prerequisites below (API enabled, IAM service account, WIF binding)

- A Bifrost Vertex key using “Service Account (Attached)” auth - see Application Default Credentials for Web UI, API, config.json, and Go SDK setup. For Helm, see Helm - Google Vertex AI.

- The Kubernetes ServiceAccount annotated for WIF, with the iam.gke.io/gcp-service-account annotation set to IAM_SA_NAME@PROJECT_ID.iam.gserviceaccount.com

Replace IAM_SA_NAME with the IAM Service Account created in Step 3 below and PROJECT_ID with your Google Cloud project ID.

GCP Prerequisites

Step 1: Enable the Vertex AI API

The Vertex AI API must be enabled in your project. Search for aiplatform in the API Library or enable it from the command line. WIF uses the IAM Credentials API for token exchange. Enable it as well.

Step 2: Enable Workload Identity on GKE

Autopilot clusters: WIF is always enabled. Skip this step.

Standard clusters: Enable the workload identity pool and GKE metadata server, then verify that both are active.

Step 3: Create an IAM Service Account and grant Vertex access

Create a dedicated IAM Service Account (or use an existing one) and grant it the Vertex AI User role (roles/aiplatform.user).

Step 4: Bind the Kubernetes ServiceAccount to the IAM Service Account

Allow the Kubernetes ServiceAccount to impersonate the IAM Service Account by granting roles/iam.workloadIdentityUser. In the add-iam-policy-binding command, replace NAMESPACE and KSA_NAME with your Bifrost pod’s namespace and Kubernetes ServiceAccount name.

Then annotate the Kubernetes ServiceAccount so GKE knows which IAM identity to map. If deploying with the Bifrost Helm chart, set the annotation via serviceAccount.annotations in your values file - see Helm - Google Vertex AI for the full example.

Verify

From inside the Bifrost pod, confirm the GKE metadata server returns a token. Replace NAMESPACE and POD_NAME with your Bifrost namespace and any running Bifrost pod name (e.g., bifrost-0 for a StatefulSet or use kubectl get pods -n NAMESPACE to find it).

A JSON response with an access_token field confirms WIF is working. Then send a request through Bifrost to a Vertex model (e.g., vertex/gemini-2.5-flash) to verify end-to-end.
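The token check can also be scripted. The sketch below is a minimal Python version that queries the standard GCE/GKE metadata token endpoint and looks for the access_token field. It only works from inside a pod or VM where the metadata server is reachable; the function names are illustrative and not part of Bifrost.

```python
import json
import urllib.request

# Standard metadata-server path for the default service account token.
TOKEN_URL = (
    "http://metadata.google.internal/computeMetadata/v1/"
    "instance/service-accounts/default/token"
)


def has_access_token(body):
    """Return True if the response body is JSON containing a non-empty access_token."""
    try:
        payload = json.loads(body)
    except (ValueError, TypeError):
        return False
    return isinstance(payload, dict) and bool(payload.get("access_token"))


def fetch_metadata_token(timeout=3.0):
    """Fetch the raw token response; raises an error outside GCP or without WIF."""
    request = urllib.request.Request(TOKEN_URL, headers={"Metadata-Flavor": "Google"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")


if __name__ == "__main__":
    try:
        print("WIF token available:", has_access_token(fetch_metadata_token()))
    except OSError as error:
        print("metadata server not reachable:", error)
```

A False result or a connection error points to the first two rows of the troubleshooting table that follows.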

Troubleshooting

| Symptom | Likely Cause | Fix |
|---|---|---|
| "could not find default credentials" | GKE metadata server not enabled, or Kubernetes ServiceAccount missing WIF annotation | Enable GKE metadata server on the node pool (Step 2); verify the iam.gke.io/gcp-service-account annotation on the ServiceAccount (Step 4) |
| 403 Forbidden from Vertex API | IAM Service Account lacks Vertex permissions | Grant roles/aiplatform.user to the IAM Service Account |
| 403 during token exchange | WIF binding missing | Run the add-iam-policy-binding command from Step 4; confirm roles/iam.workloadIdentityUser is granted |
| Wrong project or region errors | Bifrost config mismatch | Check project_id and region in the Vertex key configuration |

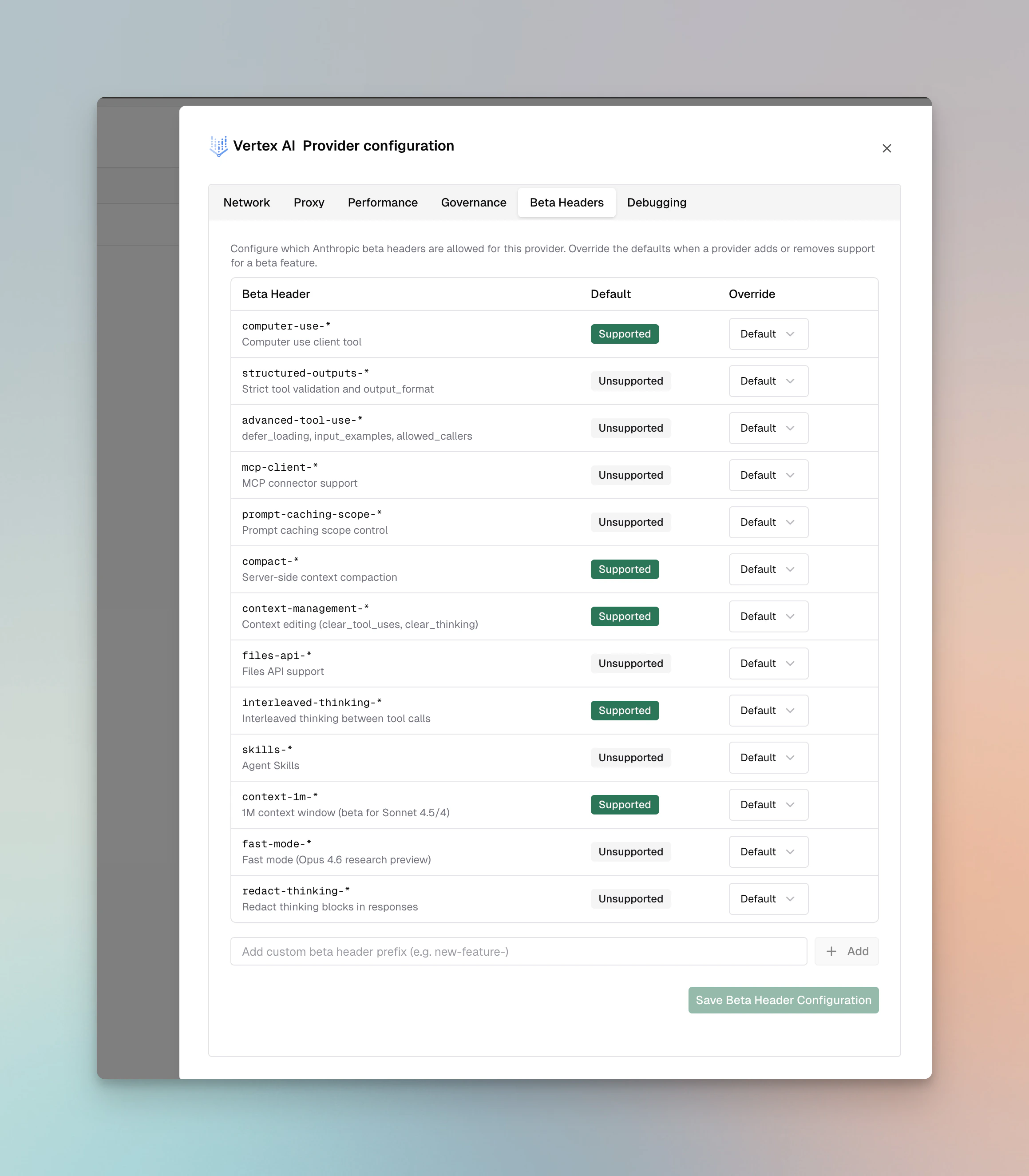

Beta Headers

For Anthropic models on Vertex AI, Bifrost validates anthropic-beta headers and drops unsupported headers from the request.

Supported: computer-use-*, compact-*, context-management-*, interleaved-thinking-*, context-1m-*

Not supported: structured-outputs-*, advanced-tool-use-*, mcp-client-*, prompt-caching-scope-*, files-api-*, skills-*, fast-mode-*, redact-thinking-*

You can override these defaults per provider via the Beta Headers tab in provider configuration or via beta_header_overrides. See the full support matrix in the Anthropic provider docs.
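To make the filtering concrete, here is a minimal Python sketch that keeps only the beta values matching the supported patterns listed above. It assumes the anthropic-beta header carries a comma-separated list; the function name `filter_beta_header` is hypothetical and this is not Bifrost's implementation.

```python
from fnmatch import fnmatchcase

# Patterns copied from the "Supported" list above.
SUPPORTED_PATTERNS = (
    "computer-use-*",
    "compact-*",
    "context-management-*",
    "interleaved-thinking-*",
    "context-1m-*",
)


def filter_beta_header(header_value, supported=SUPPORTED_PATTERNS):
    """Drop beta values that match none of the supported wildcard patterns."""
    kept = []
    for raw in header_value.split(","):
        name = raw.strip()
        if name and any(fnmatchcase(name, pattern) for pattern in supported):
            kept.append(name)
    return ",".join(kept)
```

Passing a different `supported` tuple mimics what a per-provider override would do: adding a pattern lets the matching header through, removing one drops it.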

1. Chat Completions

Request Parameters

Core Parameter Mapping

| Parameter | Vertex Handling | Notes |
|---|---|---|
| model | Maps to Vertex model ID | Region-specific endpoint constructed automatically |
| All other params | Model-specific conversion | Converted per underlying provider (Gemini/Anthropic) |

Key Configuration

The key configuration for Vertex requires Google Cloud credentials:

- project_id - GCP project ID (required)

- region - GCP region for API endpoints (required)

  - Examples: us-central1, us-west1, eu-west1, global

- auth_credentials - Service account JSON credentials (optional if using default credentials)

Authentication Methods

- Service Account JSON (recommended for production)

- Application Default Credentials (for local development and attached service accounts)

  - Resolved from the GOOGLE_APPLICATION_CREDENTIALS environment variable, the gcloud application default credential file, or the metadata server

  - Leave auth_credentials empty

- API Key (Gemini and fine-tuned Gemini models only)

Gemini Models

When using Google’s Gemini models, Bifrost converts requests to Gemini’s API format.

Parameter Mapping for Gemini

All Gemini-compatible parameters are supported. Special handling includes:

- System prompts: Converted to Gemini’s system message format

- Tool usage: Mapped to Gemini’s function calling format

- Streaming: Uses Gemini’s streaming protocol

Anthropic Models (Claude)

When using Anthropic models through Vertex AI, Bifrost converts requests to Anthropic’s message format.

Parameter Mapping for Anthropic

All Anthropic-standard parameters are supported:

- Reasoning/Thinking: reasoning parameters converted to thinking structure

- System messages: Extracted and placed in separate system field

- Tool message grouping: Consecutive tool messages merged

- API version: Automatically set to vertex-2023-10-16 for Anthropic models

Special Notes for Vertex + Anthropic

- Responses API uses special /v1/messages endpoint

- anthropic_version automatically set to vertex-2023-10-16

- Minimum reasoning budget: 1024 tokens

- Model field removed from request (Vertex uses different identification)
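A few of the Anthropic-specific steps above (system message extraction, model field removal, fixed anthropic_version) can be sketched in Python as follows. This is an illustration under simplifying assumptions, not Bifrost's converter: message content is assumed to be plain strings, the helper name `to_vertex_anthropic_body` is hypothetical, and tool message grouping and reasoning budget handling are left out.

```python
ANTHROPIC_VERSION = "vertex-2023-10-16"


def to_vertex_anthropic_body(request):
    """Shape a chat-style request dict into an Anthropic-on-Vertex body.

    The input is not modified; a new dict is returned.
    """
    # The model is identified by the endpoint URL, so drop it from the body.
    body = {key: value for key, value in request.items() if key != "model"}

    system_parts = []
    messages = []
    for message in request.get("messages", []):
        if message.get("role") == "system":
            system_parts.append(message.get("content", ""))
        else:
            messages.append(message)

    body["messages"] = messages
    if system_parts:
        # System messages move to the separate top-level system field.
        body["system"] = "\n".join(system_parts)
    body["anthropic_version"] = ANTHROPIC_VERSION
    return body
```

Because anthropic_version is written last, any value supplied by the caller is overwritten, which matches the version lock described under Caveats.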

Region Selection

The region determines the API endpoint:

| Region | Endpoint | Purpose |
|---|---|---|
| us-central1 | us-central1-aiplatform.googleapis.com | US Central |
| us-west1 | us-west1-aiplatform.googleapis.com | US West |
| eu-west1 | eu-west1-aiplatform.googleapis.com | Europe West |
| global | aiplatform.googleapis.com | Global (no region prefix) |

Streaming

Streaming format depends on model type:

- Gemini models: Standard Gemini streaming with server-sent events

- Anthropic models: Anthropic message streaming format

2. Responses API

The Responses API is available for both Anthropic (Claude) and Gemini models on Vertex AI.

Request Parameters

Core Parameter Mapping

| Parameter | Vertex Handling | Notes |
|---|---|---|
| instructions | Becomes system message | Model-specific conversion |
| input | Converted to messages | String or array support |
| max_output_tokens | Model-specific field mapping | Gemini vs Anthropic conversion |
| All other params | Model-specific conversion | Converted per underlying provider |

Gemini Models

For Gemini models, conversion follows Gemini’s Responses API format.

Anthropic Models (Claude)

For Anthropic models, conversion follows Anthropic’s message format:

- instructions becomes system message

- reasoning mapped to thinking structure
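The core parameter mapping for the Anthropic path can be illustrated with a short Python sketch. It assumes array input items are already role/content dicts and maps max_output_tokens to Anthropic's max_tokens field; the function name `responses_to_anthropic` is hypothetical and the real conversion handles many more parameters.

```python
def responses_to_anthropic(request):
    """Map the core Responses API fields onto an Anthropic-style message body."""
    raw_input = request.get("input", [])
    if isinstance(raw_input, str):
        # A bare string becomes a single user message.
        messages = [{"role": "user", "content": raw_input}]
    else:
        # Assumption: array items are already {"role": ..., "content": ...} dicts.
        messages = [dict(item) for item in raw_input]

    body = {"messages": messages}
    if request.get("instructions"):
        body["system"] = request["instructions"]
    if "max_output_tokens" in request:
        body["max_tokens"] = request["max_output_tokens"]
    return body
```

For Gemini models the same three inputs would land in different fields, which is why the table describes the mapping as model-specific.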


Special Handling

- Endpoint: /v1/messages (Anthropic format)

- anthropic_version set to vertex-2023-10-16 automatically

- Model and region fields removed from request

- Raw request body passthrough supported

3. Embeddings

Embeddings are supported for Gemini and other models that support embedding generation.

Request Parameters

Core Parameters

| Parameter | Vertex Mapping | Notes |
|---|---|---|
| input | instances[].content | Text to embed |
| dimensions | parameters.outputDimensionality | Optional output size |

Advanced Parameters

Use extra_params for embedding-specific options:

Embedding Parameters

| Parameter | Type | Description |
|---|---|---|
| task_type | string | Task type hint: RETRIEVAL_QUERY, RETRIEVAL_DOCUMENT, SEMANTIC_SIMILARITY, CLASSIFICATION, CLUSTERING (optional) |
| title | string | Optional title to help model produce better embeddings (used with task_type) |
| autoTruncate | boolean | Auto-truncate input to max tokens (defaults to true) |

Task Type Effects

Different task types optimize embeddings for specific use cases:

- RETRIEVAL_DOCUMENT - Optimized for documents in retrieval systems

- RETRIEVAL_QUERY - Optimized for queries searching documents

- SEMANTIC_SIMILARITY - Optimized for semantic similarity tasks

- CLASSIFICATION - For classification tasks

- CLUSTERING - For clustering tasks

Response Conversion

Embeddings response includes vectors and truncation information:

- values - Embedding vector as floats

- statistics.token_count - Input token count

- statistics.truncated - Whether input was truncated due to length
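The request and response mapping can be sketched in Python as follows. The instances[].content and parameters.outputDimensionality paths come from the table above; placing task_type and title on each instance and autoTruncate under parameters follows the Vertex text-embeddings request shape and should be treated as an assumption here, as should the helper names.

```python
def build_embedding_request(texts, dimensions=None, task_type=None,
                            title=None, auto_truncate=True):
    """Build a Vertex-style embeddings body from Bifrost-level inputs."""
    instances = []
    for text in texts:
        instance = {"content": text}
        if task_type:
            instance["task_type"] = task_type
        if title:
            instance["title"] = title
        instances.append(instance)

    parameters = {"autoTruncate": auto_truncate}
    if dimensions is not None:
        parameters["outputDimensionality"] = dimensions
    return {"instances": instances, "parameters": parameters}


def parse_embedding_prediction(prediction):
    """Extract the vector and truncation statistics from one prediction entry."""
    embeddings = prediction["embeddings"]
    statistics = embeddings.get("statistics", {})
    return {
        "values": [float(v) for v in embeddings["values"]],
        "token_count": statistics.get("token_count"),
        "truncated": statistics.get("truncated", False),
    }
```

Python floats are 64-bit, so the parsed vector keeps the float64 precision mentioned under Caveats.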

4. Image Generation

Image Generation is supported for Gemini and Imagen on Vertex AI. The provider automatically routes to the appropriate format based on the model type.

Request Parameters

Core Parameter Mapping

| Parameter | Vertex Handling | Notes |
|---|---|---|
| model | Mapped to deployment/model identifier | Model type detected automatically |
| prompt | Model-specific conversion | Converted per underlying provider (Gemini/Imagen) |
| All other params | Model-specific conversion | Converted per underlying provider |

Model Type Detection

Vertex automatically detects the model type and uses the appropriate conversion:

- Gemini Models: Uses Gemini format (same as Gemini Image Generation)

- Imagen Models: Uses Imagen format (detected via IsImagenModel())


Request Conversion

Vertex converts requests based on model type:

- Gemini Models: Uses gemini.ToGeminiImageGenerationRequest() - same conversion as standard Gemini (see Gemini Image Generation)

- Imagen Models: Uses gemini.ToImagenImageGenerationRequest() - Imagen-specific format with size/aspect ratio conversion

All request bodies are converted to map[string]interface{} and the region field is removed before sending to Vertex API.

Response Conversion

- Gemini Models: Responses converted using GenerateContentResponse.ToBifrostImageGenerationResponse() - same as standard Gemini

- Imagen Models: Responses converted using GeminiImagenResponse.ToBifrostImageGenerationResponse() - Imagen-specific format

Endpoint Selection

The provider automatically selects the endpoint based on model type:

- Fine-tuned models: /v1beta1/projects/{projectNumber}/locations/{region}/endpoints/{deployment}:generateContent

- Imagen models: /v1/projects/{projectID}/locations/{region}/publishers/google/models/{model}:predict

- Gemini models: /v1/projects/{projectID}/locations/{region}/publishers/google/models/{model}:generateContent
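The endpoint choice above can be expressed as a small Python function. Two detection rules here are assumptions made for the sketch: a digit-only identifier is treated as a fine-tuned deployment (in line with the digit-only rule described under List Models), and an "imagen" substring check stands in for IsImagenModel(), whose actual logic is not shown in this page.

```python
def image_generation_path(model, project_id, region, project_number=""):
    """Pick the request path for an image generation call by model type."""
    if model.isdigit():
        # Fine-tuned deployment: addressed by project number and endpoint ID.
        return (
            f"/v1beta1/projects/{project_number}/locations/{region}"
            f"/endpoints/{model}:generateContent"
        )

    base = (
        f"/v1/projects/{project_id}/locations/{region}"
        f"/publishers/google/models/{model}"
    )
    if "imagen" in model.lower():  # stand-in for IsImagenModel()
        return base + ":predict"
    return base + ":generateContent"
```

The fine-tuned branch is the only one that needs project_number, which is why that field is optional for standard models.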

Streaming

Image generation streaming is not supported by Vertex AI.

5. Image Edit

Image Edit is supported for Gemini and Imagen models on Vertex AI. The provider automatically routes to the appropriate format based on the model type.

Request Parameters

| Parameter | Type | Required | Notes |
|---|---|---|---|
| model | string | ✅ | Model identifier (must be Gemini or Imagen model) |
| prompt | string | ✅ | Text description of the edit |
| image[] | binary | ✅ | Image file(s) to edit (supports multiple images) |
| mask | binary | ❌ | Mask image file |
| type | string | ❌ | Edit type: "inpainting", "outpainting", "inpaint_removal", "bgswap" (Imagen only) |
| n | int | ❌ | Number of images to generate (1-10) |
| output_format | string | ❌ | Output format: "png", "webp", "jpeg" |
| output_compression | int | ❌ | Compression level (0-100%) |
| seed | int | ❌ | Seed for reproducibility (via ExtraParams["seed"]) |
| negative_prompt | string | ❌ | Negative prompt (via ExtraParams["negativePrompt"]) |
| maskMode | string | ❌ | Mask mode (via ExtraParams["maskMode"], Imagen only): "MASK_MODE_USER_PROVIDED", "MASK_MODE_BACKGROUND", "MASK_MODE_FOREGROUND", "MASK_MODE_SEMANTIC" |
| dilation | float | ❌ | Mask dilation (via ExtraParams["dilation"], Imagen only): Range [0, 1] |
| maskClasses | int[] | ❌ | Mask classes (via ExtraParams["maskClasses"], Imagen only): For MASK_MODE_SEMANTIC |

Request Conversion

Vertex uses the same conversion functions as Gemini:

- Gemini Models: Uses gemini.ToGeminiImageEditRequest() - same conversion as standard Gemini (see Gemini Image Edit)

- Imagen Models: Uses gemini.ToImagenImageEditRequest() - Imagen-specific format with edit mode mapping and mask configuration (see Gemini Image Edit)

Models that are neither Gemini nor Imagen return a ConfigurationError.

Request Body Processing:

- All request bodies are converted to map[string]interface{} for Vertex API compatibility

- The region field is removed before sending to Vertex API

- For Gemini models, unsupported fields are stripped via stripVertexGeminiUnsupportedFields() (removes id from function_call and function_response)

Response Conversion

- Gemini Models: Responses converted using GenerateContentResponse.ToBifrostImageGenerationResponse() - same as standard Gemini

- Imagen Models: Responses converted using GeminiImagenResponse.ToBifrostImageGenerationResponse() - Imagen-specific format

Endpoint Selection

- Gemini models: /v1/projects/{projectID}/locations/{region}/publishers/google/models/{model}:generateContent

- Imagen models: /v1/projects/{projectID}/locations/{region}/publishers/google/models/{model}:predict

6. List Models

Request Parameters

None required. Automatically uses project_id and region from key config.

Response Conversion

Lists models available in the specified project and region with metadata and deployment information.

Custom vs Non-Custom Models

To provide a complete model listing experience, Bifrost performs multi-pass model discovery:

Three-Pass Model Discovery

- First Pass - Custom Models from API Response

  - Queries Vertex AI’s List Models API

  - Returns only custom fine-tuned models deployed to your project

  - Custom models are identified by having deployment values that contain only digits

  - Example: "deployment": "1234567890"

- Second Pass - Non-Custom Models from Aliases

  - Adds standard foundation models from your aliases configuration

  - Non-custom models have alphanumeric deployment values (e.g., gemini-pro, claude-3-5-sonnet)

  - Filters by the key-level models allowlist, if specified

  - Example: "deployment": "gemini-2.0-flash"

- Third Pass - Allowed Models Not in Aliases

  - Adds models specified in models that weren’t in the aliases map

  - Ensures all explicitly allowed models appear in the list

  - Uses the model name itself as the deployment value

  - Skips digit-only model IDs (reserved for custom models)

Model Filtering Logic

- If models is empty and no aliases are configured: No models are returned

- If models is empty but aliases are configured: Only aliased models are returned

- If models is ["*"]: All models from all three passes are included (unrestricted)

- If models is non-empty: Only models/aliases whose request names appear in models are included

- Duplicate Prevention: Each model ID is tracked to prevent duplicates across passes
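The three passes and the filtering rules can be sketched together in Python. This is a simplified reading of the behavior described above, not the code in models.go: the function name `discover_models` is hypothetical, and naming a custom model by its alias (falling back to the deployment ID) is an assumption made for the sketch.

```python
def discover_models(api_deployments, aliases, allowed):
    """Combine API results, aliases, and the models allowlist into one listing.

    api_deployments: deployment IDs returned by the List Models API
    aliases: mapping of request name -> deployment value
    allowed: the key-level models list (may be empty or ["*"])
    """
    unrestricted = "*" in allowed
    name_by_deployment = {dep: name for name, dep in aliases.items()}

    def permitted(name):
        if unrestricted:
            return True
        if not allowed:
            return name in aliases  # empty allowlist: only aliased models
        return name in allowed

    seen = set()
    found = []

    def add(name, deployment, custom):
        # Duplicate prevention: each model ID is listed at most once.
        if name not in seen and permitted(name):
            seen.add(name)
            found.append({"id": name, "deployment": deployment, "custom": custom})

    # Pass 1: custom models from the API (digit-only deployment values).
    for deployment in api_deployments:
        if deployment.isdigit():
            add(name_by_deployment.get(deployment, deployment), deployment, True)

    # Pass 2: non-custom models from aliases.
    for name, deployment in aliases.items():
        if not deployment.isdigit():
            add(name, deployment, False)

    # Pass 3: allowed models missing from aliases; digit-only IDs are skipped.
    for name in allowed:
        if name != "*" and name not in aliases and not name.isdigit():
            add(name, name, False)

    return found
```

With an empty allowlist and no aliases the function returns an empty list, matching the first filtering rule.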

Model Name Formatting

Non-custom models from aliases and allowed models are automatically formatted for display:

- gemini-pro → “Gemini Pro”

- claude-3-5-sonnet → “Claude 3 5 Sonnet”

- gemini_2_flash → “Gemini 2 Flash”
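A formatting rule consistent with the three examples above is to split on hyphens and underscores and capitalize each word. The Python sketch below implements that rule; the function name `format_model_name` is hypothetical, and how Bifrost treats other characters (such as dots in version numbers) is not specified here.

```python
import re


def format_model_name(model_id):
    """Turn a model ID into a display name by splitting on '-' and '_'."""
    words = [word for word in re.split(r"[-_]+", model_id) if word]
    return " ".join(word.capitalize() for word in words)


for model_id in ("gemini-pro", "claude-3-5-sonnet", "gemini_2_flash"):
    print(model_id, "->", format_model_name(model_id))
```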


Pagination

Model listing is paginated automatically. If more than 100 models exist, next_page_token will be present. Bifrost handles pagination internally.
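Internal pagination of this kind usually follows a simple token loop. The Python sketch below shows the pattern with an in-memory stand-in for the API; `fetch_page` is a hypothetical callable and the "models" field name is an assumption, while next_page_token is the field named above.

```python
def list_all_models(fetch_page):
    """Collect models across pages until no next_page_token is returned.

    fetch_page: callable taking a page token (None for the first page)
    and returning a dict with "models" and an optional "next_page_token".
    """
    models = []
    token = None
    while True:
        page = fetch_page(token)
        models.extend(page.get("models", []))
        token = page.get("next_page_token")
        if not token:
            return models


# In-memory pages standing in for real API responses.
fake_pages = {
    None: {"models": ["model-a", "model-b"], "next_page_token": "page-2"},
    "page-2": {"models": ["model-c"]},
}
all_models = list_all_models(lambda token: fake_pages[token])
print(all_models)
```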

Caveats

Project ID and Region Required

- Severity: High

- Behavior: Both project_id and region required for all operations

- Impact: Request fails without valid GCP project/region configuration

- Code: vertex.go:127-138

OAuth2 Token Management

- Severity: Medium

- Behavior: Tokens cached and automatically refreshed when expired

- Impact: First request slightly slower due to auth; cached for subsequent requests

- Code: vertex.go:34-55

Anthropic Model Detection

- Severity: Medium

- Behavior: Automatic detection of Anthropic vs Gemini models

- Impact: Different conversion logic applied transparently

- Code: vertex.go chat/responses endpoints

Model-Specific Responses API Handling

- Severity: Low

- Behavior: Responses API automatically routes to Anthropic or Gemini implementation based on model

- Impact: Different conversion logic applied transparently per model

- Code: vertex.go:836-1080

Anthropic Version Lock

- Severity: Low

- Behavior: anthropic_version always set to vertex-2023-10-16 for Claude

- Impact: Cannot override Anthropic version for Claude on Vertex

- Code: utils.go:33, 71

Embeddings Precision Preservation

- Severity: Low

- Behavior: Vertex returns float64 embeddings, and Bifrost preserves that precision in normalized embedding responses

- Impact: No precision loss in the /v1/embeddings response path

- Code: embedding.go:84-91

List Models API Returns Only Custom Models

- Severity: High

- Behavior: Vertex AI’s List Models API only returns custom fine-tuned models, NOT foundation models

- Impact: Bifrost performs three-pass discovery to include foundation models from aliases and the key-level models allowlist

- Why: This is a Vertex AI API limitation - foundation models must be explicitly configured

- Code: models.go:76-217

Configuration

HTTP Settings: OAuth2 authentication with automatic token refresh | Region-specific endpoints | Max Connections 5000 | Max Idle 60 seconds

Scope: https://www.googleapis.com/auth/cloud-platform

Endpoint Format: https://{region}-aiplatform.googleapis.com/v1/projects/{project}/locations/{region}/{resource}

Note: For global region, endpoint is https://aiplatform.googleapis.com/v1/projects/{project}/locations/global/{resource}
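The endpoint format, including the special case for the global region, can be reproduced with a short Python helper. The function names `vertex_host` and `vertex_url` are illustrative only; the host and path patterns are the ones stated above.

```python
def vertex_host(region):
    """Return the API host for a region; global has no region prefix."""
    if region == "global":
        return "aiplatform.googleapis.com"
    return f"{region}-aiplatform.googleapis.com"


def vertex_url(project, region, resource):
    """Build the full endpoint URL for a resource path."""
    resource = resource.lstrip("/")
    return (
        f"https://{vertex_host(region)}/v1/projects/{project}"
        f"/locations/{region}/{resource}"
    )


print(vertex_url("my-project", "us-central1", "publishers/google/models/gemini-2.5-flash:generateContent"))
print(vertex_url("my-project", "global", "publishers/google/models/gemini-2.5-flash:generateContent"))
```

Note that the region still appears in the locations path segment even when the host carries no prefix.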

Video Generation

Vertex AI routes video generation through Gemini’s Veo models using the predictLongRunning endpoint. All parameters are identical to Gemini Video Generation.

Only Veo models are supported (e.g., veo-2.0-generate-001). Passing a non-Veo model name returns a configuration error.

| Operation | Supported | Notes |
|---|---|---|
| Generate | ✅ | POST /v1/videos |
| Retrieve | ✅ | GET /v1/videos/{id} |
| Download | ✅ | GET /v1/videos/{id}/content |
| Delete | ❌ | Not supported |
| List | ❌ | Not supported |
| Remix | ❌ | Not supported |