
Overview

Azure is a cloud provider offering access to OpenAI and Anthropic models through the Azure OpenAI Service. Bifrost performs the following conversions:

- Deployment mapping - Model identifiers mapped to Azure deployment IDs with version handling

- Authentication modes - API key, Entra ID (Service Principal), or Managed Identity (DefaultAzureCredential) with automatic environment detection

- Model routing - Automatic provider detection (OpenAI vs Anthropic) based on deployment

- API versioning - Configurable API versions with preview support for Responses API

- Custom endpoints - Full control over Azure endpoint configuration

- Multi-model support - Unified interface for OpenAI, Anthropic (via Azure), and Gemini models

- Request/response pass-through - Support for raw request/response bodies for advanced use cases

Supported Operations

| Operation | Non-Streaming | Streaming | Endpoint |
|---|---|---|---|
| Chat Completions | ✅ | ✅ | /openai/v1/chat/completions |
| Responses API | ✅ | ✅ | /openai/v1/responses |
| Embeddings | ✅ | - | /openai/v1/embeddings |
| Files | ✅ | - | /openai/v1/files |
| List Models | ✅ | - | /openai/v1/models |
| Image Generation | ✅ | ✅ | /openai/v1/images/generations |
| Image Edit | ✅ | ✅ | /openai/v1/images/edits |
| Video Generation | ✅ | - | /openai/v1/videos |
| Image Variation | ❌ | ❌ | - |
| Batch | ❌ | ❌ | - |
| Text Completions | ❌ | ❌ | - |
| Speech (TTS) | ❌ | ❌ | - |

Azure-specific: Batch operations and Text Completions are not supported by Azure OpenAI Service. The Responses API uses the preview API version and is available for both OpenAI and Anthropic models.

Setup & Configuration

Azure requires an endpoint URL, deployment mappings, and authentication configuration. Three authentication methods are supported.

The aliases field (mapping model names to Azure deployment IDs) requires v1.5.0-prerelease2 or later. On v1.4.x, use deployments inside azure_key_config instead; see the v1.5.0 Migration Guide for details.

1. Default Credential (System Identity)

Leave the value field and all Entra ID fields empty. Bifrost calls azidentity.NewDefaultAzureCredential(nil), which tries credential sources in this order:

- Environment variables (AZURE_CLIENT_ID + AZURE_CLIENT_SECRET + AZURE_TENANT_ID, or certificate/username variants)

- Workload Identity (AKS with Workload Identity Federation)

- Managed Identity (Azure VMs, App Service, AKS, Container Instances)

- Azure CLI (az login)

- Azure Developer CLI (azd auth login)

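In Azure-hosted environments this picks up the attached managed identity; locally, it uses your az login session. No credentials need to be stored or rotated.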

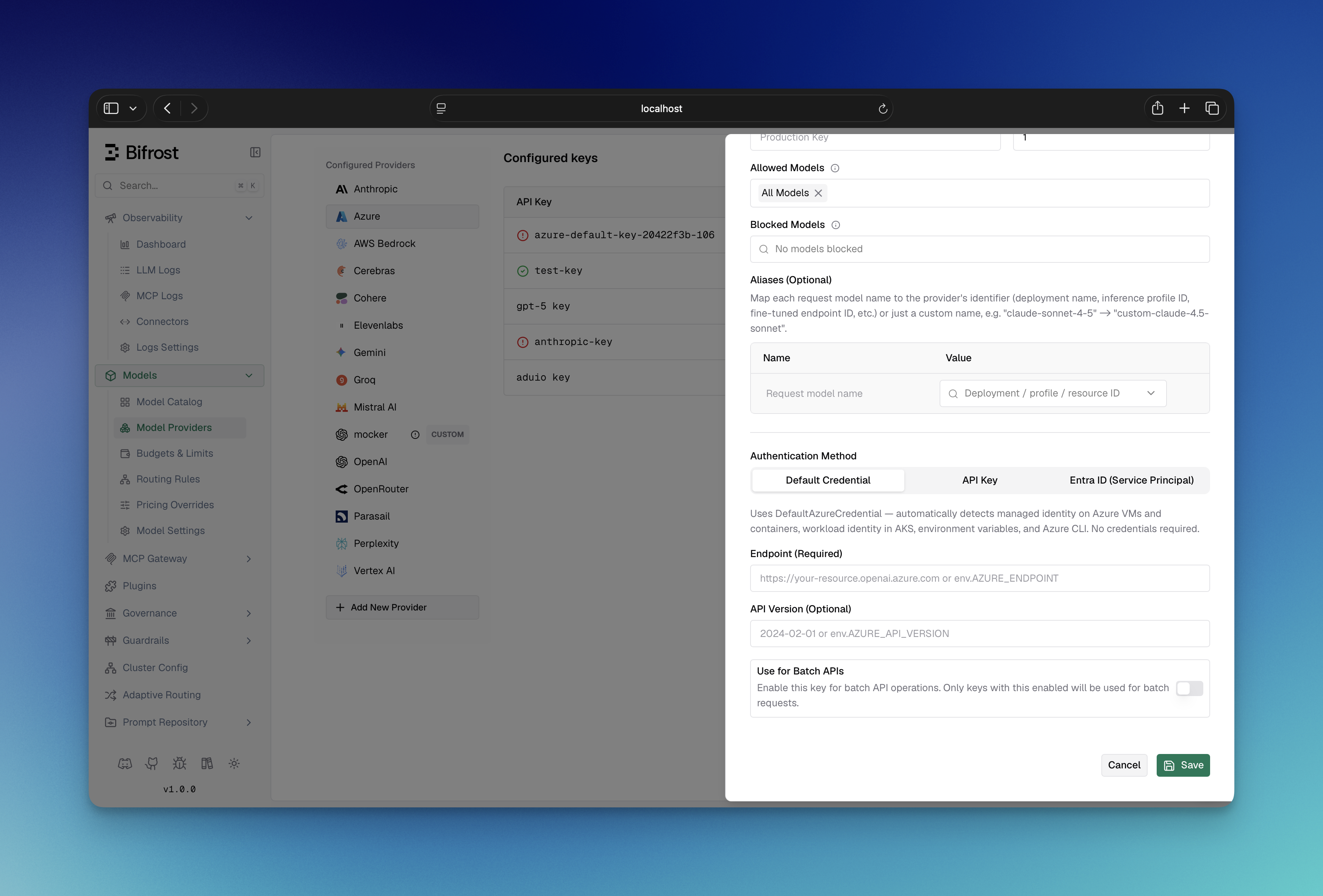


- Navigate to “Model Providers” → “Configurations” → “Azure”

- Click “Add Key” (or edit an existing key)

- Under Authentication Method, select “Default Credential”

- Set Endpoint: Your Azure OpenAI resource URL (e.g., https://your-org.openai.azure.com)

- Set API Version (Optional): e.g., 2024-10-21

- Configure Aliases: Map model names to deployment IDs (e.g., gpt-4o → my-gpt4o-deployment)

- Save

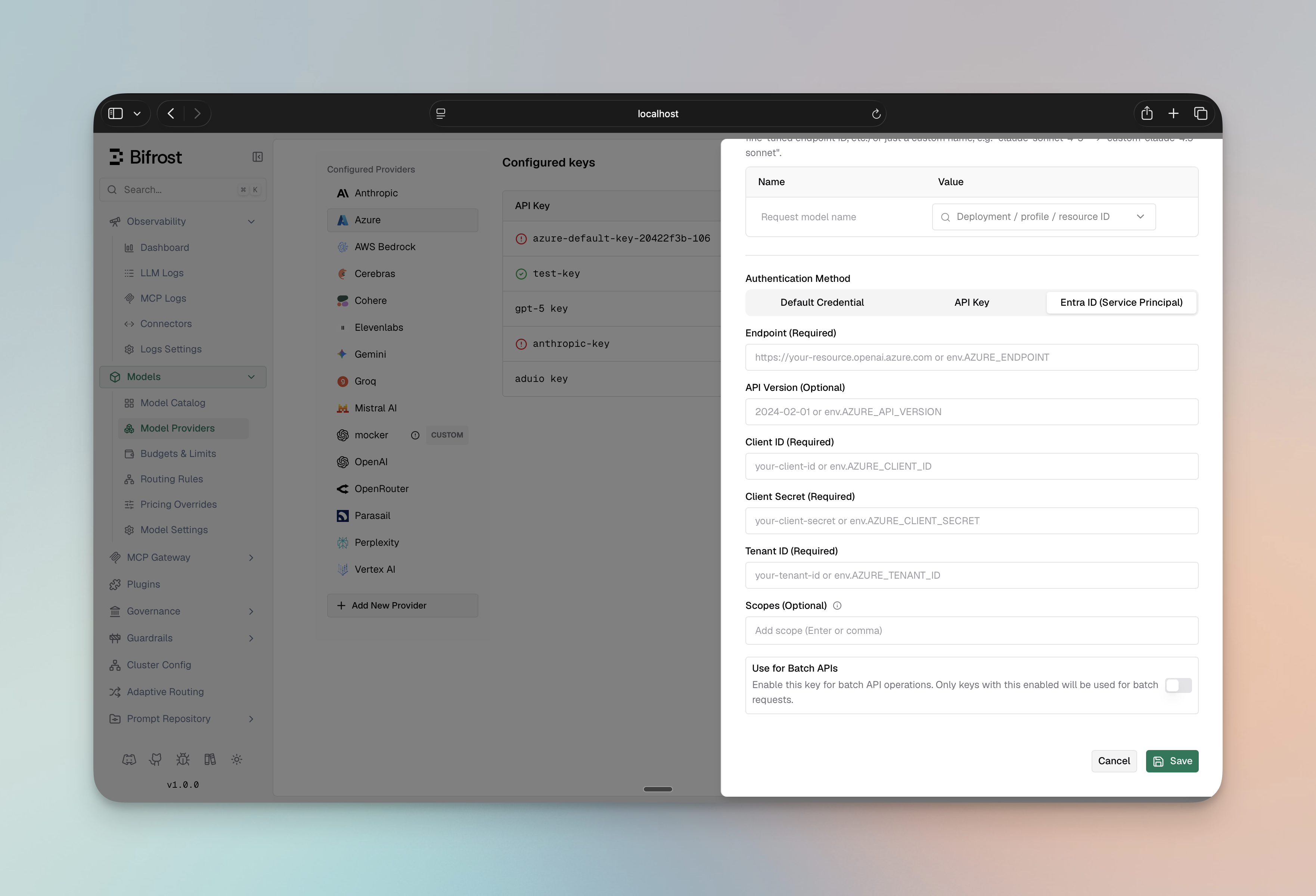

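Make sure the Bifrost host can obtain credentials from one of these sources: a managed identity, the AZURE_CLIENT_ID/AZURE_CLIENT_SECRET/AZURE_TENANT_ID env vars, or az login for local development.

2. Azure Entra ID (Service Principal)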

Set client_id, client_secret, and tenant_id to authenticate with a Service Principal. This takes priority over API key and managed identity authentication.


- Navigate to “Model Providers” → “Configurations” → “Azure”

- Click “Add Key” (or edit an existing key)

- Under Authentication Method, select “Entra ID (Service Principal)”

- Set Client ID: Your Azure Entra ID client ID

- Set Client Secret: Your Azure Entra ID client secret

- Set Tenant ID: Your Azure Entra ID tenant ID

- Set Endpoint: Your Azure OpenAI resource URL

- Set API Version (Optional): e.g., 2024-08-01-preview

- Set Scopes (Optional): Override the default OAuth scope (https://cognitiveservices.azure.com/.default). Configured scopes replace the default entirely: if you customize this field, you must include all required scopes, because the default is not added automatically.

- Configure Aliases: Map model names to deployment IDs

- Save

Required role for the Service Principal:

- OpenAI models: Cognitive Services OpenAI User

- Anthropic models: Cognitive Services AI Services User

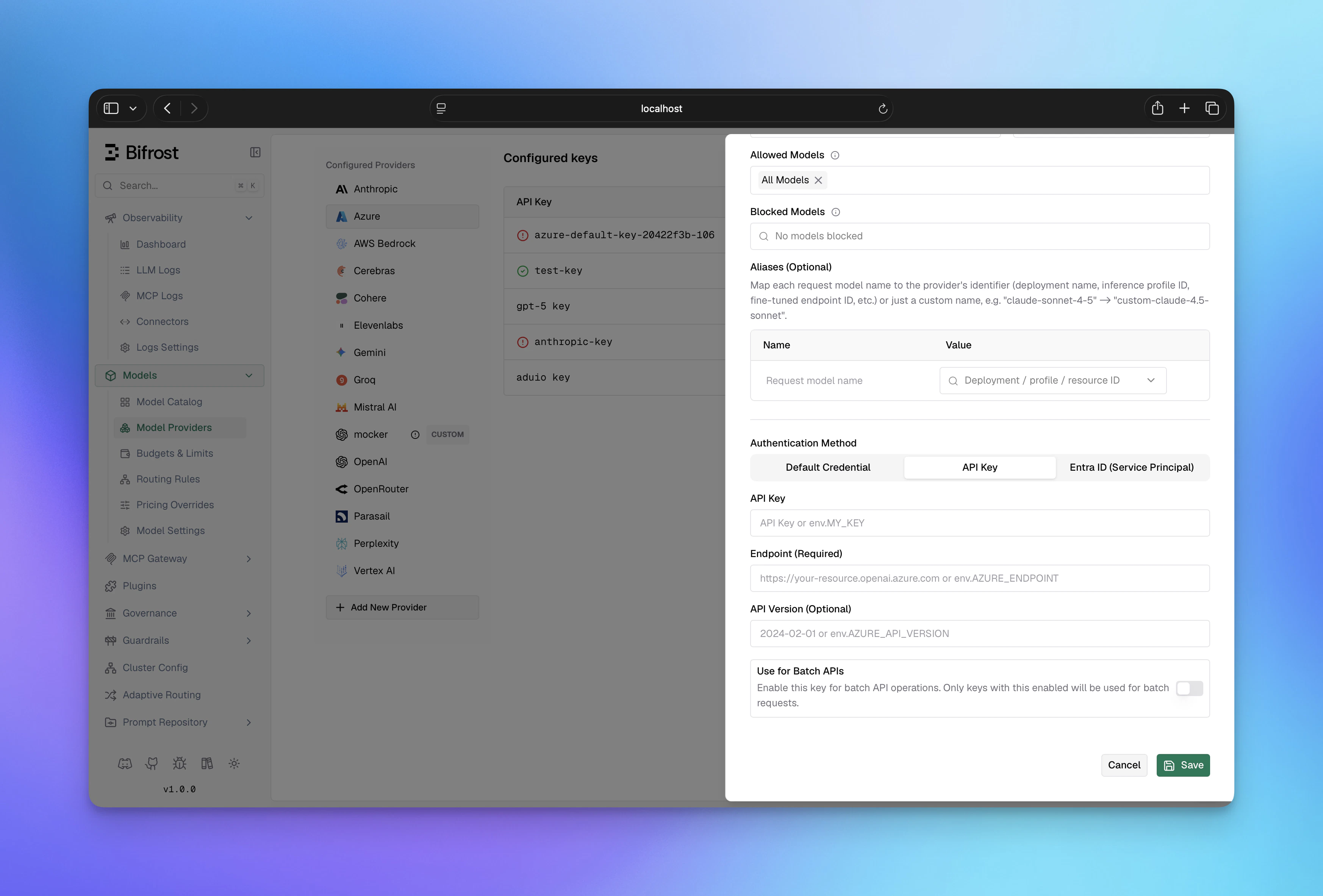

3. Direct Authentication (API Key)

Provide the Azure API key in the value field. Use this for simple setups without a managed identity or Service Principal.


- Navigate to “Model Providers” → “Configurations” → “Azure”

- Click “Add Key” (or edit an existing key)

- Under Authentication Method, select “API Key”

- Set API Key: Your Azure API key

- Set Endpoint: Your Azure OpenAI resource URL

- Set API Version (Optional): e.g., 2024-10-21

- Configure Aliases: Map model names to deployment IDs

- Save

Authentication precedence: (1) Entra ID if client_id, client_secret, and tenant_id are all set; (2) API key if value is non-empty; (3) DefaultAzureCredential (managed identity) if neither is provided.

azure_key_config fields:

| Field | Required | Default | Description |
|---|---|---|---|
| endpoint | Yes | - | Azure OpenAI resource endpoint URL |
| api_version | No | 2024-10-21 | Azure API version |
| client_id | No | - | Entra ID client ID (Service Principal auth) |
| client_secret | No | - | Entra ID client secret (Service Principal auth) |
| tenant_id | No | - | Entra ID tenant ID (Service Principal auth) |
| scopes | No | ["https://cognitiveservices.azure.com/.default"] | OAuth scopes for token requests |

Key-level fields:

| Field | Required | Description |
|---|---|---|
| aliases | No | Map model names to Azure deployment IDs (v1.5.0-prerelease2+) |
| value | No | Azure API key (leave empty for Entra ID or managed identity) |
| models | Yes | Models this key can serve; use ["*"] to allow all |

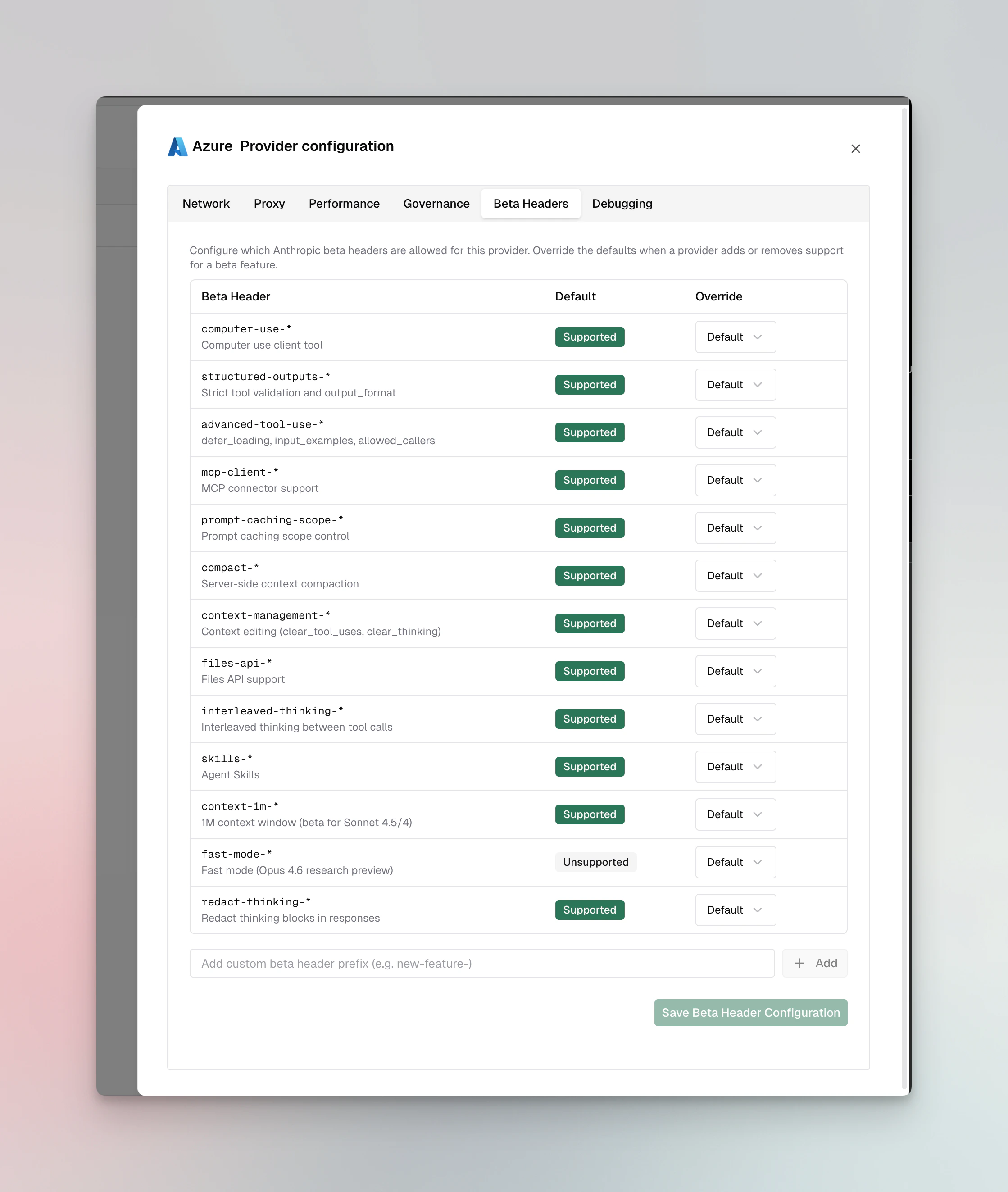

Beta Headers

For Anthropic models on Azure, Bifrost validates anthropic-beta headers and drops unsupported headers from the request. Azure supports most Anthropic beta features.

Supported: computer-use-*, structured-outputs-*, advanced-tool-use-*, mcp-client-*, prompt-caching-scope-*, compact-*, context-management-*, files-api-*, interleaved-thinking-*, skills-*, context-1m-*, redact-thinking-*

Not supported: fast-mode-*

You can override these defaults per provider via the Beta Headers tab in provider configuration or via beta_header_overrides. See the full support matrix in the Anthropic provider docs.
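A minimal sketch of the filtering described above, assuming the header is a comma-separated list and each "-*" entry is a prefix match. It treats the supported list as an allowlist, so any value matching no supported prefix (including fast-mode-*) is dropped. That handling of unknown values and the hypothetical filterBetaHeader name are assumptions, and beta_header_overrides are not modeled.

```go
package main

import (
	"fmt"
	"strings"
)

// supportedBetaPrefixes lists the documented supported anthropic-beta
// families; a trailing "-*" in the docs becomes a "-" prefix match here.
var supportedBetaPrefixes = []string{
	"computer-use-", "structured-outputs-", "advanced-tool-use-",
	"mcp-client-", "prompt-caching-scope-", "compact-",
	"context-management-", "files-api-", "interleaved-thinking-",
	"skills-", "context-1m-", "redact-thinking-",
}

// filterBetaHeader keeps only values with a supported prefix and drops the
// rest (assumption: unknown values are treated as unsupported).
func filterBetaHeader(header string) string {
	var kept []string
	for _, v := range strings.Split(header, ",") {
		v = strings.TrimSpace(v)
		for _, p := range supportedBetaPrefixes {
			if strings.HasPrefix(v, p) {
				kept = append(kept, v)
				break
			}
		}
	}
	return strings.Join(kept, ",")
}

func main() {
	fmt.Println(filterBetaHeader("fast-mode-2025-01-01, interleaved-thinking-2025-05-14"))
}
```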

1. Chat Completions

Request Parameters

Core Parameter Mapping

| Parameter | Azure Handling | Notes |
|---|---|---|
| model | Mapped to deployment_id | Supports version matching and base model matching |
| max_completion_tokens | Direct pass-through | OpenAI models only |
| temperature, top_p | Direct pass-through | Same across all models |
| All other params | Model-specific conversion | Converted per underlying provider (OpenAI/Anthropic) |

Authentication Configuration

Azure uses a custom endpoint and deployment configuration.

Key Configuration

Azure supports three authentication methods: Managed Identity (DefaultAzureCredential), Entra ID (Service Principal), and Direct (API Key). Precedence: Entra ID (if configured) → API key (if value is set) → DefaultAzureCredential.
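The precedence rule can be sketched as a small selector. The type and function names are hypothetical, and the sketch assumes that partially filled Entra ID fields (for example, only client_id) count as "not configured", so selection falls through to the API key or DefaultAzureCredential; Bifrost's exact handling of that case is not documented here.

```go
package main

import "fmt"

// AuthMethod names the credential path chosen for an Azure key.
type AuthMethod string

const (
	AuthEntraID           AuthMethod = "entra_id"
	AuthAPIKey            AuthMethod = "api_key"
	AuthDefaultCredential AuthMethod = "default_credential"
)

// keyCredentials holds only the fields that influence auth selection
// (illustrative, not Bifrost's type).
type keyCredentials struct {
	Value        string // API key
	ClientID     string
	ClientSecret string
	TenantID     string
}

// selectAuthMethod applies: Entra ID (all three fields set) > API key >
// DefaultAzureCredential.
func selectAuthMethod(k keyCredentials) AuthMethod {
	if k.ClientID != "" && k.ClientSecret != "" && k.TenantID != "" {
		return AuthEntraID
	}
	if k.Value != "" {
		return AuthAPIKey
	}
	return AuthDefaultCredential
}

func main() {
	fmt.Println(selectAuthMethod(keyCredentials{Value: "<azure-api-key>"}))
	fmt.Println(selectAuthMethod(keyCredentials{}))
}
```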

Managed Identity / DefaultAzureCredential

If no API key and no Entra ID credentials are provided, Bifrost automatically uses DefaultAzureCredential, which detects the auth environment.

Azure Entra ID (Service Principal)

If you set client_id, client_secret, and tenant_id, Azure Entra ID authentication is used with priority over API key authentication.

Required role for the Service Principal:

- For OpenAI models: Cognitive Services OpenAI User

- For Anthropic models: Cognitive Services AI Services User

Direct Authentication (API Key)

Set the Azure API key in the value field.

Key configuration fields:

- endpoint - Azure OpenAI resource endpoint (required)

- client_id - Azure Entra ID client ID (optional, for Service Principal auth)

- client_secret - Azure Entra ID client secret (optional, for Service Principal auth)

- tenant_id - Azure Entra ID tenant ID (optional, for Service Principal auth)

- scopes - OAuth scopes for token requests (default: ["https://cognitiveservices.azure.com/.default"])

- api_version - API version to use (default: 2024-10-21)

- aliases - Map of model names to Azure deployment IDs (optional, set at key level)

- allowed_models - List of allowed models to use from this key (optional)

Deployment Selection

Deployments can be specified at three levels (in order of precedence):

- Per-request (highest priority)

- Key configuration

- Model name (lowest priority, used when no deployment is specified): the model name is used directly as the deployment ID
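A sketch of the three-level lookup, assuming exact-match aliases. The requestDeployment parameter is a hypothetical stand-in for however a per-request deployment is supplied, and the version matching described under Caveats is not modeled.

```go
package main

import "fmt"

// resolveDeployment picks the Azure deployment ID: per-request value first,
// then the key's aliases, then the model name itself.
func resolveDeployment(model, requestDeployment string, aliases map[string]string) string {
	if requestDeployment != "" {
		return requestDeployment
	}
	if d, ok := aliases[model]; ok && d != "" {
		return d
	}
	return model // fallback: model name used directly as deployment ID
}

func main() {
	aliases := map[string]string{"gpt-4o": "my-gpt4o-deployment"}
	fmt.Println(resolveDeployment("gpt-4o", "", aliases))
	fmt.Println(resolveDeployment("gpt-4o", "canary-gpt4o", aliases))
	fmt.Println(resolveDeployment("gpt-4o-mini", "", aliases))
}
```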

OpenAI Models

When using OpenAI models (GPT-4, GPT-4 Turbo, GPT-3.5-Turbo, etc.), Bifrost passes OpenAI-compatible parameters through directly.

Parameter Mapping for OpenAI

All OpenAI-standard parameters are supported. Refer to the OpenAI documentation for conversion details.

Anthropic Models

When using Anthropic models through Azure (Claude 3 family), Bifrost converts requests to Anthropic format.

Parameter Mapping for Anthropic

All Anthropic-standard parameters are supported, with special handling for:

- Reasoning/Thinking: reasoning parameters are converted to Anthropic's thinking structure

- System messages: extracted and placed in a separate system field

- Tool message grouping: consecutive tool messages are merged
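The two message-shaping steps above can be sketched with simplified types. This is an illustration, not Bifrost's converter: message, anthropicMessage, and toAnthropic are hypothetical names, multiple system messages are assumed to be joined with newlines, assistant tool_use blocks and the reasoning-to-thinking mapping are omitted, and "consecutive" is taken to mean adjacent in the input order.

```go
package main

import (
	"fmt"
	"strings"
)

// message is a simplified OpenAI-style chat message; ToolCallID is set
// only for role "tool".
type message struct {
	Role       string
	Content    string
	ToolCallID string
}

// anthropicBlock is a simplified content block: "text" or "tool_result".
type anthropicBlock struct {
	Type      string
	Text      string
	ToolUseID string
}

// anthropicMessage is a simplified Anthropic message with content blocks.
type anthropicMessage struct {
	Role   string
	Blocks []anthropicBlock
}

// toAnthropic pulls system messages into a separate system string and merges
// each run of adjacent tool messages into one user message holding one
// tool_result block per tool message.
func toAnthropic(msgs []message) (string, []anthropicMessage) {
	var system []string
	var out []anthropicMessage
	for i, m := range msgs {
		switch m.Role {
		case "system":
			system = append(system, m.Content)
		case "tool":
			block := anthropicBlock{Type: "tool_result", Text: m.Content, ToolUseID: m.ToolCallID}
			if i > 0 && msgs[i-1].Role == "tool" {
				// Previous input was also a tool result, so the last output
				// message is its user message: extend it.
				last := &out[len(out)-1]
				last.Blocks = append(last.Blocks, block)
			} else {
				out = append(out, anthropicMessage{Role: "user", Blocks: []anthropicBlock{block}})
			}
		default:
			out = append(out, anthropicMessage{
				Role:   m.Role,
				Blocks: []anthropicBlock{{Type: "text", Text: m.Content}},
			})
		}
	}
	return strings.Join(system, "\n"), out
}

func main() {
	system, msgs := toAnthropic([]message{
		{Role: "system", Content: "Be terse."},
		{Role: "user", Content: "Weather in Paris and Rome?"},
		{Role: "tool", Content: "18C", ToolCallID: "call_1"},
		{Role: "tool", Content: "24C", ToolCallID: "call_2"},
	})
	fmt.Println(system, len(msgs), len(msgs[1].Blocks))
}
```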

Special Notes for Azure + Anthropic

- API version automatically set to 2023-06-01 for Anthropic models

- Endpoints use /anthropic/v1/ paths internally

- Authentication uses the x-api-key header for Anthropic models

- Minimum reasoning budget: 1024 tokens

API Versioning

- Default version: 2024-10-21 (supports the latest OpenAI features)

- Preview version: preview (used for the Responses API)

- Custom version: set via api_version in the key config
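The version rules can be expressed as a small selector. The requestKind type and apiVersionFor name are hypothetical; the sketch covers only the api-version query value for OpenAI-style paths (not the 2023-06-01 version used for Anthropic models) and assumes the Responses override applies even when api_version is configured.

```go
package main

import "fmt"

const defaultAzureAPIVersion = "2024-10-21"

// requestKind distinguishes request types that choose API versions differently.
type requestKind int

const (
	chatCompletions requestKind = iota
	responses
)

// apiVersionFor returns "preview" for Responses requests; other requests use
// the key's api_version, or the default when it is empty.
func apiVersionFor(kind requestKind, configured string) string {
	if kind == responses {
		return "preview"
	}
	if configured != "" {
		return configured
	}
	return defaultAzureAPIVersion
}

func main() {
	fmt.Println(apiVersionFor(chatCompletions, ""))
	fmt.Println(apiVersionFor(responses, "2024-10-21"))
}
```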

Streaming

Streaming uses OpenAI or Anthropic format depending on model type:

- OpenAI models: standard OpenAI streaming with chat.completion.chunk events

- Anthropic models: Anthropic streaming format with content blocks

2. Responses API

The Responses API is available for both OpenAI and Anthropic models on Azure and uses the preview API version.

Request Parameters

Core Parameter Mapping

| Parameter | Azure Handling | Notes |
|---|---|---|
| instructions | Becomes system message | Model-specific conversion |
| input | Converted to user message(s) | String or array support |
| max_output_tokens | Model-specific field mapping | OpenAI vs Anthropic conversion |
| All other params | Model-specific conversion | Converted per underlying provider |

OpenAI Models

For OpenAI models (GPT-4, etc.), conversion follows OpenAI's Responses API format.

Anthropic Models

For Anthropic models (Claude, etc.), conversion follows Anthropic's message format: instructions becomes the system message, and reasoning is mapped to the thinking structure.


Special Handling

- Uses the /openai/v1/responses endpoint with the preview API version

- All request body conversions are handled automatically

- Supports raw request body passthrough for advanced cases

3. Embeddings

Embeddings are supported for OpenAI models only (not available for Anthropic models on Azure).

Request Parameters

| Parameter | Azure Handling |
|---|---|
| input | Direct pass-through |
| model | Mapped to deployment |
| dimensions | Direct pass-through (when supported) |


Response Conversion

The embeddings response is passed through directly from Azure OpenAI in the standard OpenAI format.

4. Files API

Files operations are supported for OpenAI models only.

Supported Operations

| Operation | Support |
|---|---|
| Upload | ✅ |
| List | ✅ |
| Retrieve | ✅ |
| Delete | ✅ |
| Get Content | ✅ |

5. Image Generation

Image Generation is supported for OpenAI models on Azure and uses the OpenAI-compatible format.

Request Parameters

Core Parameter Mapping

| Parameter | Azure Handling | Notes |
|---|---|---|
| model | Mapped to deployment_id | Deployment ID must be configured |
| prompt | Direct pass-through | Prompt text for image generation |
| All other params | Direct pass-through | Uses OpenAI format |

- Model & Prompt: bifrostReq.Model → req.Model (mapped to deployment), bifrostReq.Prompt → req.Prompt

- Parameters: all other fields from bifrostReq are embedded directly into the request struct via struct embedding


Response Conversion

- Non-streaming: Azure responses are unmarshaled directly into BifrostImageGenerationResponse, since Bifrost's response schema is a superset of OpenAI's format. All fields are passed through as-is.

- Streaming: Azure streaming responses use the Server-Sent Events (SSE) format with the same event types as OpenAI (see OpenAI Image Generation Streaming).

Streaming

Image generation streaming is supported and uses OpenAI's streaming format with Server-Sent Events (SSE).

6. Image Edit

Image Edit is supported for OpenAI models on Azure and uses the OpenAI-compatible format. Azure uses the same conversion as OpenAI (see OpenAI Image Edit):

- Request Conversion: uses openai.HandleOpenAIImageEditRequest with Azure-specific URL construction

- URL Format: {endpoint}/openai/deployments/{deployment}/images/edits?api-version={apiVersion}

- Authentication: Azure API key or OAuth bearer token (via getAzureAuthHeaders)

- Deployment Mapping: model identifier mapped to Azure deployment ID

- Response Conversion: same as OpenAI; responses are unmarshaled directly into BifrostImageGenerationResponse

- Streaming: supported via openai.HandleOpenAIImageEditStreamRequest with Azure-specific URL and authentication
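A sketch of the URL and header construction described above. It assumes the standard Azure OpenAI header names: api-key for key auth and Authorization: Bearer for OAuth tokens. It approximates the behavior attributed to getAzureAuthHeaders but is not that function. The multipart request body and token acquisition are not shown.

```go
package main

import (
	"fmt"
	"net/http"
	"net/url"
	"strings"
)

// imageEditURL builds
// {endpoint}/openai/deployments/{deployment}/images/edits?api-version={apiVersion}.
func imageEditURL(endpoint, deployment, apiVersion string) string {
	q := url.Values{}
	q.Set("api-version", apiVersion)
	return strings.TrimSuffix(endpoint, "/") + "/openai/deployments/" +
		url.PathEscape(deployment) + "/images/edits?" + q.Encode()
}

// azureAuthHeader uses an api-key header when a key is configured,
// otherwise a bearer token header.
func azureAuthHeader(apiKey, bearerToken string) http.Header {
	h := http.Header{}
	if apiKey != "" {
		h.Set("api-key", apiKey)
	} else {
		h.Set("Authorization", "Bearer "+bearerToken)
	}
	return h
}

func main() {
	fmt.Println(imageEditURL("https://your-org.openai.azure.com", "my-gpt-image", "2024-10-21"))
	fmt.Println(azureAuthHeader("", "<token>").Get("Authorization"))
}
```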

7. List Models

Request Parameters

None required.

Response Conversion

Lists the models/deployments configured in the Azure key. The response includes model metadata, capabilities, and lifecycle status.

Caveats

Deployment ID Required

- Severity: High

- Behavior: Model names must map to Azure deployment IDs

- Impact: Requests fail without a valid deployment mapping

- Code: azure.go:145-200

Model Provider Detection

- Severity: Medium

- Behavior: Automatic detection of OpenAI vs Anthropic based on the model name

- Impact: Different conversion logic is applied transparently

- Code: azure.go:92-114

Responses API Preview Version (including Anthropic)

- Severity: Medium

- Behavior: The Responses API automatically uses the preview API version, which differs from the Chat Completions API version. This also applies to Anthropic models.

- Impact: Responses and Chat Completions requests use different API versions; the version is overridden automatically for Responses requests.

- Code: azure.go:92-114, azure.go:109-113, azure.go:694

Version Matching for Deployments

- Severity: Low

- Behavior: Model version differences are ignored when matching to deployments

- Impact: gpt-4 and gpt-4-turbo can map to the same deployment

- Code: models.go:13-58

8. Video Generation

Azure routes video generation to OpenAI's Sora models via the Azure OpenAI-compatible endpoint. All parameters are identical to OpenAI Video Generation.

Supported Operations

| Operation | Supported | Notes |
|---|---|---|
| Generate | ✅ | POST /v1/videos |
| Retrieve | ✅ | GET /v1/videos/{id} |
| Download | ✅ | GET /v1/videos/{id}/content |
| Delete | ✅ | DELETE /v1/videos/{id} |
| List | ✅ | GET /v1/videos |
| Remix | ❌ | Not supported |

Configuration

HTTP Settings:

- API Version: 2024-10-21 (configurable)

- Max Connections: 5000

- Max Idle: 60 seconds

Endpoint Format: https://{resource-name}.openai.azure.com/openai/v1/{path}?api-version={version}

Note: Bifrost automatically constructs URLs using the endpoint from key configuration and the configured API version.
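A sketch of that URL construction for the v1 paths used by video operations. The azureV1URL name and the sample video ID are hypothetical.

```go
package main

import (
	"fmt"
	"net/url"
	"strings"
)

// azureV1URL builds {endpoint}/openai/v1/{path}?api-version={version}
// from the key's endpoint and configured API version.
func azureV1URL(endpoint, path, apiVersion string) string {
	q := url.Values{}
	q.Set("api-version", apiVersion)
	return strings.TrimSuffix(endpoint, "/") + "/openai/v1/" +
		strings.TrimPrefix(path, "/") + "?" + q.Encode()
}

func main() {
	base := "https://your-org.openai.azure.com"
	fmt.Println(azureV1URL(base, "videos", "2024-10-21"))
	fmt.Println(azureV1URL(base, "/videos/video_123/content", "2024-10-21"))
}
```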