
Overview

Bifrost offers two powerful methods for routing requests across AI providers, each serving different use cases:

- Governance-based Routing: Explicit, user-defined routing rules configured via Virtual Keys

- Adaptive Load Balancing: Automatic, performance-based routing powered by real-time metrics (Enterprise feature)

When to use which method:

- Use Governance when you need explicit control, compliance requirements, or specific cost optimization strategies

- Use Adaptive Load Balancing for automatic performance optimization and minimal configuration overhead

The Model Catalog

The Model Catalog is Bifrost’s central registry that tracks which models are available from which providers. It powers both governance-based routing and adaptive load balancing by maintaining an up-to-date mapping of models to providers.

Architecture Documentation: For detailed technical documentation on the Model Catalog implementation, including API reference, thread safety, and advanced usage patterns, see Model Catalog Architecture.

Data Sources

The Model Catalog combines two data sources to maintain a comprehensive and up-to-date model registry:

- Pricing Data (Primary source)
  - Downloaded from a remote URL (configurable, defaults to https://getbifrost.ai/datasheet)
  - Contains model names, pricing tiers, and provider mappings
  - Synced to database on startup and refreshed periodically (default: every 24 hours)
  - Used for cost calculation and initial model-to-provider mapping
  - Stored as: In-memory map pricingData[model|provider|mode] for O(1) lookups

- Provider List Models API (Secondary source)
  - Calls each provider’s /v1/models endpoint during startup
  - Enriches the catalog with provider-specific models and aliases
  - Re-fetched when providers are added/updated via API or dashboard
  - Adds models that may not be in pricing data yet (e.g., newly released models)
  - Stored as: In-memory map modelPool[provider][]models

Why two sources? Pricing data provides comprehensive model coverage with

cost information, while the List Models API ensures you can use newly released

models immediately without waiting for pricing data updates.

How Model Availability is Determined

Bifrost uses a sophisticated multi-step process to determine if a model is available for a provider:

GetModelsForProvider(provider)

Purpose: Find all models available for a specific provider

Lookup Process:

- Check modelPool[provider] for direct matches
- Return all models in that provider’s slice

Used by:

- Routing Methods to validate allowed_models
- Dashboard model selector dropdowns
- API responses for /v1/models?provider=openai

GetProvidersForModel(model)

Purpose: Find all providers that support a specific model

Lookup Process:

- Direct lookup: Check each provider’s model list in modelPool
- Cross-provider resolution: Apply special handling for proxy providers

Used by:

- Load balancing to find candidate providers
- Fallback generation
- Model validation in requests
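The two lookups above can be sketched in a few lines. This is a minimal illustration, assuming the model pool is a plain mapping from provider name to a list of model names. The function names mirror the ones in the text, but the data and the Python signatures are hypothetical, and the cross-provider resolution for proxy providers is left out.

```python
from typing import Dict, List

# Hypothetical snapshot of the in-memory model pool: provider -> model names.
model_pool: Dict[str, List[str]] = {
    "openai": ["gpt-4o", "gpt-4o-mini"],
    "azure": ["gpt-4o"],
    "anthropic": ["claude-3-5-sonnet"],
}


def get_models_for_provider(provider: str) -> List[str]:
    """Return a copy of every model listed for one provider."""
    return list(model_pool.get(provider, []))


def get_providers_for_model(model: str) -> List[str]:
    """Return providers whose model list contains the model (direct lookup only)."""
    return sorted(p for p, models in model_pool.items() if model in models)
```

Returning a copy from `get_models_for_provider` keeps callers from mutating the shared pool by accident.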

Pricing Lookup with Fallbacks

Purpose: Get pricing data for cost calculation and model validation

Lookup Key: model|provider|mode (e.g., gpt-4o|openai|chat)

Fallback Chain:

- Primary lookup: model|provider|requestType
- Gemini → Vertex: If Gemini not found, try Vertex with same model
- Vertex format stripping: For provider/model, strip prefix and retry
- Bedrock prefix handling: For Claude models, try with anthropic. prefix
- Responses → Chat: If Responses mode not found, try Chat mode

Used by:

- Cost calculation for billing
- Model validation during routing
- Budget enforcement
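One way to picture the fallback chain is as an ordered list of candidate keys that are tried until one hits. The sketch below assumes each fallback is attempted independently after the primary key. The pricing values, the string tests used to detect Claude models, and the function names are illustrative assumptions, not Bifrost's implementation.

```python
from typing import Dict, Iterator, Optional

# Hypothetical pricing table keyed by "model|provider|mode"; values are
# illustrative numbers, not real prices.
pricing_data: Dict[str, float] = {
    "gpt-4o|openai|chat": 1.0,
    "gemini-1.5-pro|vertex|chat": 2.0,
    "anthropic.claude-3-5-sonnet|bedrock|chat": 3.0,
}


def candidate_keys(model: str, provider: str, mode: str) -> Iterator[str]:
    """Yield lookup keys in the order of the fallback chain described above."""
    yield f"{model}|{provider}|{mode}"                        # primary lookup
    if provider == "gemini":
        yield f"{model}|vertex|{mode}"                        # Gemini -> Vertex
    if provider == "vertex" and "/" in model:
        yield f"{model.split('/', 1)[1]}|{provider}|{mode}"   # strip provider/ prefix
    if provider == "bedrock" and "claude" in model and not model.startswith("anthropic."):
        yield f"anthropic.{model}|{provider}|{mode}"          # Bedrock Claude prefix
    if mode == "responses":
        yield f"{model}|{provider}|chat"                      # Responses -> Chat


def lookup_price(model: str, provider: str, mode: str) -> Optional[float]:
    """Return the first pricing entry found along the chain, or None."""
    for key in candidate_keys(model, provider, mode):
        if key in pricing_data:
            return pricing_data[key]
    return None
```

Because each key is a single dictionary access, the whole chain stays constant-time per request.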

Syncing Behavior

Initial Sync (Startup)

When Bifrost starts, it performs a complete model catalog initialization, following the step-by-step process in server.go:Bootstrap().

Result: Bifrost is ready with a comprehensive model catalog combining both sources.

Ongoing Sync (Background)

While Bifrost is running, the catalog stays up-to-date through background workers:

Pricing Data Sync:

- Background worker runs every 1 hour (ticker interval)
- Checks if 24 hours have elapsed since last sync (configurable)
- If yes, downloads fresh pricing data and updates database + memory cache
- Timer resets after successful sync

List Models Sync (the model pool is re-fetched on these events):

- Provider Added: When a new provider is configured
- Provider Updated: When provider config changes (keys, endpoints, etc.)
- Manual Refresh: Via API endpoint
- Manual Delete + Refetch: Clear and reload models

Failure Handling:

- Pricing URL fails but database has data → Use cached database records
- Pricing URL fails and no database data → Error logged, existing memory cache retained
- List models API fails → Log warning, retain existing model pool entries
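The pricing sync described above amounts to an hourly check against a 24-hour interval. The following is a rough sketch under that reading. The function names and the `download` callback are hypothetical, and the real worker also writes to the database, which is omitted here.

```python
from datetime import datetime, timedelta
from typing import Callable, Optional

TICK_INTERVAL = timedelta(hours=1)    # how often the worker wakes up
SYNC_INTERVAL = timedelta(hours=24)   # default refresh interval (configurable)


def should_sync(last_sync: Optional[datetime], now: datetime,
                interval: timedelta = SYNC_INTERVAL) -> bool:
    """Return True when no sync has happened yet or the interval has elapsed."""
    return last_sync is None or now - last_sync >= interval


def on_tick(last_sync: Optional[datetime], now: datetime,
            download: Callable[[], None]) -> Optional[datetime]:
    """One ticker iteration: sync if due, keep the old timestamp on failure."""
    if not should_sync(last_sync, now):
        return last_sync
    try:
        download()
    except Exception:
        return last_sync  # failure: retain existing data and retry on the next tick
    return now  # the timer resets only after a successful sync
```

Keeping the old timestamp on failure means a failed download is retried on the next hourly tick instead of a full day later.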

Fallback Strategy

Bifrost’s multi-layered approach ensures high availability:

- Layer 1: Pricing Data Persistence
- Layer 2: Model Pool Redundancy
- Layer 3: Runtime Validation

This design ensures requests never fail due to sync issues as long as one data source is available.

Allowed Models Behavior with Examples

The allowed_models field in provider configs controls which models can be used with that provider. Understanding its behavior is crucial for governance routing.

- Wildcard allowed_models (Use Catalog)

- Explicit allowed_models (Strict Control)

- Aliases (Key-Level)

- Cross-Provider Model Routing

Behavior (wildcard allowed_models):

- Bifrost calls GetModelsForProvider("openai")
- Returns all models in modelPool["openai"]
- Request validated against catalog

Use Cases:

- Default behavior for most deployments
- Automatically stays up-to-date with provider’s model offerings
- No manual model list maintenance required
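The allowed_models cases can be summarized as a small decision function. This sketch assumes a wildcard defers to the catalog, an empty list denies everything, and an explicit list is a strict membership check. The catalog contents and the function name are hypothetical, and whether explicit entries are additionally checked against the catalog is not covered here.

```python
from typing import Dict, List

# Hypothetical catalog snapshot: provider -> models known to the Model Catalog.
catalog: Dict[str, List[str]] = {"openai": ["gpt-4o", "gpt-4o-mini"]}


def is_model_allowed(provider: str, model: str, allowed_models: List[str]) -> bool:
    """Apply the allowed_models semantics for a single provider config."""
    if not allowed_models:            # [] denies every model
        return False
    if "*" in allowed_models:         # wildcard defers to the catalog
        return model in catalog.get(provider, [])
    return model in allowed_models    # explicit list: strict membership
```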

How It’s Used in Routing

- Governance Routing

- Load Balancing

When a Virtual Key has provider_configs, governance uses the model catalog for validation.

The Model Catalog is essential for cross-provider routing. Without it, Bifrost wouldn’t know that gpt-4o is available from OpenAI, Azure, and Groq, or that claude-3-5-sonnet can be routed through Anthropic, Vertex, Bedrock, and OpenRouter. This knowledge powers both governance validation and load balancing provider discovery.

Default Provider Resolution

Default provider resolution via model catalog is available in Bifrost v1.5.0-prerelease7 and above.

When a request specifies a model without a provider/ prefix (e.g., "model": "gpt-4o" instead of "model": "openai/gpt-4o"), Bifrost automatically resolves the provider using the Model Catalog. Note that this default behavior is applied after all other routing engines have run.

How It Works

- Request arrives without a provider prefix (e.g., "model": "gpt-4o")
- Catalog lookup: Bifrost calls GetProvidersForModel("gpt-4o") to find all providers that support the model
- Provider selected: A provider from the catalog’s available list is used (e.g., openai)
- Request continues: The resolved provider/model string is used for load balancing and fallback handling

This resolution is reported under the model-catalog routing engine in telemetry and routing logs.

If the model catalog is not available or the model is not found in any

provider, the request returns an error asking for the

provider/model format.
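A compact sketch of default provider resolution follows. It assumes the catalog is a provider-to-models mapping and, purely for illustration, picks the first matching provider. The function name, the error text, and the selection policy are assumptions, since the text only says that a provider from the available list is used.

```python
from typing import Dict, List


def resolve_model(model: str, model_pool: Dict[str, List[str]]) -> str:
    """Return a provider/model string, resolving the provider when it is missing."""
    if "/" in model:
        return model  # provider already given explicitly
    providers = [p for p, models in model_pool.items() if model in models]
    if not providers:
        raise ValueError(f"model {model!r} not found; use the provider/model format")
    # Assumption: take the first match. The real selection policy is not specified here.
    return f"{providers[0]}/{model}"
```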

For deterministic provider selection, always use the explicit provider/model prefix.

Governance-based Routing

Governance-based routing allows you to explicitly define which providers and models should handle requests for a specific Virtual Key. This method provides precise control over routing decisions.

How It Works

When a Virtual Key has provider_configs defined:

- Request arrives with a Virtual Key (e.g., x-bf-vk: vk-prod-main)
- Model validation: Bifrost checks if the requested model is allowed for any configured provider
- Provider filtering: Providers are filtered based on:
  - Model availability in allowed_models
  - Budget limits (current usage vs max limit)
  - Rate limits (tokens/requests per time window)
- Weighted selection: A provider is selected using weighted random distribution
- Provider prefix added: Model string becomes provider/model (e.g., openai/gpt-4o)
- Fallbacks created: Remaining providers sorted by weight (descending) are added as fallbacks

Configuration Example

Request Flow

Governance Evaluation

- OpenAI: ✅ Has gpt-4o in allowed_models, budget OK, weight 0.3
- Azure: ✅ Has gpt-4o in allowed_models, rate limit OK, weight 0.7
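The weighted selection and fallback ordering in this evaluation can be sketched as follows, using the 0.3 and 0.7 weights from the example. The function name and signature are hypothetical, and the providers passed in are assumed to have already passed the model, budget, and rate-limit filters.

```python
import random
from typing import Dict, List, Optional, Tuple


def select_provider(weights: Dict[str, float], model: str,
                    rng: Optional[random.Random] = None) -> Tuple[str, List[str]]:
    """Pick one provider by weight; the rest become fallbacks, highest weight first."""
    rng = rng or random.Random()
    names = list(weights)
    chosen = rng.choices(names, weights=[weights[n] for n in names], k=1)[0]
    rest = sorted((n for n in names if n != chosen),
                  key=lambda n: weights[n], reverse=True)
    return f"{chosen}/{model}", [f"{n}/{model}" for n in rest]


primary, fallbacks = select_provider({"openai": 0.3, "azure": 0.7}, "gpt-4o")
```

Over many requests, roughly 70% of them should get azure/gpt-4o as the primary, with openai/gpt-4o as the single fallback, and the reverse for the remaining 30%.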

Key Features

| Feature | Description |
|---|---|
| Explicit Control | Define exactly which providers and models are accessible |
| Budget Enforcement | Automatically exclude providers exceeding budget limits |
| Rate Limit Protection | Skip providers that have hit rate limits |
| Weighted Distribution | Control traffic distribution with custom weights |
| Automatic Fallbacks | Failed requests automatically retry with the next-highest-weight provider |

Best Practices

Cost Optimization

Assign higher weights to cheaper providers for cost-sensitive workloads.

Environment Separation

Create different Virtual Keys for dev/staging/prod with different provider access.

Compliance & Data Residency

Restrict specific Virtual Keys to compliant providers.

allowed_models: ["*"]: Allows all models supported by the provider, validated via the Model Catalog (populated from pricing data and the provider’s list models API). See the Model Catalog section above for how syncing works. For configuration instructions, see Governance Routing.

allowed_models: [] (empty array): Denies all models - no requests will be served for this provider config. This is deny-by-default behavior introduced in v1.5.0.

Empty provider_configs: When provider_configs is empty (no providers configured), all providers are blocked (deny-by-default). You must explicitly add provider configurations to allow traffic through a Virtual Key.

Adaptive Load Balancing

Enterprise Feature: Adaptive Load Balancing is available in Bifrost Enterprise. Contact us to enable it.

Two-Level Architecture

Why Two Levels?

Separating provider selection (direction) from key selection (route) enables:

- Provider-level optimization: Choose the best provider for a model based on aggregate performance

- Key-level optimization: Within that provider, choose the best API key based on individual key performance

- Resilience: Even when provider is specified (by governance or user), key-level load balancing still optimizes which API key to use

Level 1: Direction (Provider Selection)

When it runs: Only when the model string has no provider prefix (e.g., gpt-4o)

How it works:

- Model catalog lookup: Find all configured providers that support the requested model
- Provider filtering: Filter based on:
  - Allowed models from keys configuration
  - Keys availability for the provider
- Performance scoring: Calculate scores for each provider based on:
  - Error rates (50% weight)
  - Latency (20% weight, using MV-TACOS algorithm)
  - Utilization (5% weight)
  - Momentum bias (recovery acceleration)
- Smart selection: Choose provider using weighted random with jitter and exploration
- Fallbacks created: Remaining providers sorted by performance score (descending) are added as fallbacks
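To make the weighting concrete, here is a deliberately simplified sketch that combines the three weighted metrics into a single penalty and ranks providers by it. Only the 50%, 20%, and 5% weights come from the list above. The linear combination, the normalization of each metric to the range 0 to 1, and all names are assumptions, and the MV-TACOS latency model, momentum bias, jitter, and exploration are not reproduced.

```python
from typing import Dict, List

# Weights quoted in the list above; how they are combined is an assumption.
ERROR_WEIGHT, LATENCY_WEIGHT, UTILIZATION_WEIGHT = 0.50, 0.20, 0.05


def penalty(error_rate: float, latency_norm: float, utilization: float) -> float:
    """Combine metrics normalized to [0, 1] into one penalty (lower is better)."""
    return (ERROR_WEIGHT * error_rate
            + LATENCY_WEIGHT * latency_norm
            + UTILIZATION_WEIGHT * utilization)


def rank_providers(metrics: Dict[str, Dict[str, float]]) -> List[str]:
    """Order providers from lowest to highest penalty."""
    return sorted(metrics, key=lambda p: penalty(**metrics[p]))
```

In this simplified picture, the first provider in the ranking is the most likely primary and the remainder would serve as fallbacks in that order.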

Level 2: Route (Key Selection)

When it runs: Always, even when provider is already specified (by governance, user, or Level 1)

How it works:

- Get available keys: Fetch all keys for the selected provider
- Filter by configuration: Apply model restrictions from key configuration
- Performance scoring: Calculate score for each key based on:
  - Error rates (recent failures)
  - Latency (response time)
  - TPM hits (rate limit violations)
  - Current state (Healthy, Degraded, Failed, Recovering)
- Weighted random selection: Choose key with exploration (25% chance to probe recovering keys)
- Circuit breaker: Skip keys with zero weight (TPM hits, repeated failures)
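Key selection with a circuit breaker and exploration might look roughly like the sketch below. The 25% exploration rate is taken from the list above. The way exploration is triggered, the shape of the inputs, and the function name are assumptions made for illustration.

```python
import random
from typing import Dict, List, Optional

EXPLORATION_RATE = 0.25  # chance to probe a recovering key, per the list above


def select_key(weights: Dict[str, float], recovering: List[str],
               rng: Optional[random.Random] = None) -> Optional[str]:
    """Pick a key: sometimes probe a recovering key, otherwise weighted random."""
    rng = rng or random.Random()
    if recovering and rng.random() < EXPLORATION_RATE:
        return rng.choice(recovering)                     # exploration branch
    live = {k: w for k, w in weights.items() if w > 0}    # circuit breaker: drop zero-weight keys
    if not live:
        return None
    names = list(live)
    return rng.choices(names, weights=[live[n] for n in names], k=1)[0]
```

Probing recovering keys with a fixed probability gives them a chance to earn weight back without sending them the bulk of the traffic.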

Scoring Algorithm

The load balancer computes a performance score for each provider-model combination.

Request Flow

Performance Evaluation

- OpenAI: Score 0.92 (low latency, 99% success rate)

- Azure: Score 0.85 (medium latency, 98% success rate)

- Groq: Score 0.65 (high latency recently)

Key Features

| Feature | Description |
|---|---|
| Automatic Optimization | No manual weight tuning required |
| Real-time Adaptation | Weights recomputed every 5 seconds based on live metrics |
| Circuit Breakers | Failing routes automatically removed from rotation |
| Fast Recovery | 90% penalty reduction in 30 seconds after issues resolve |
| Health States | Routes transition between Healthy, Degraded, Failed, and Recovering |
| Smart Exploration | 25% chance to probe potentially recovered routes |

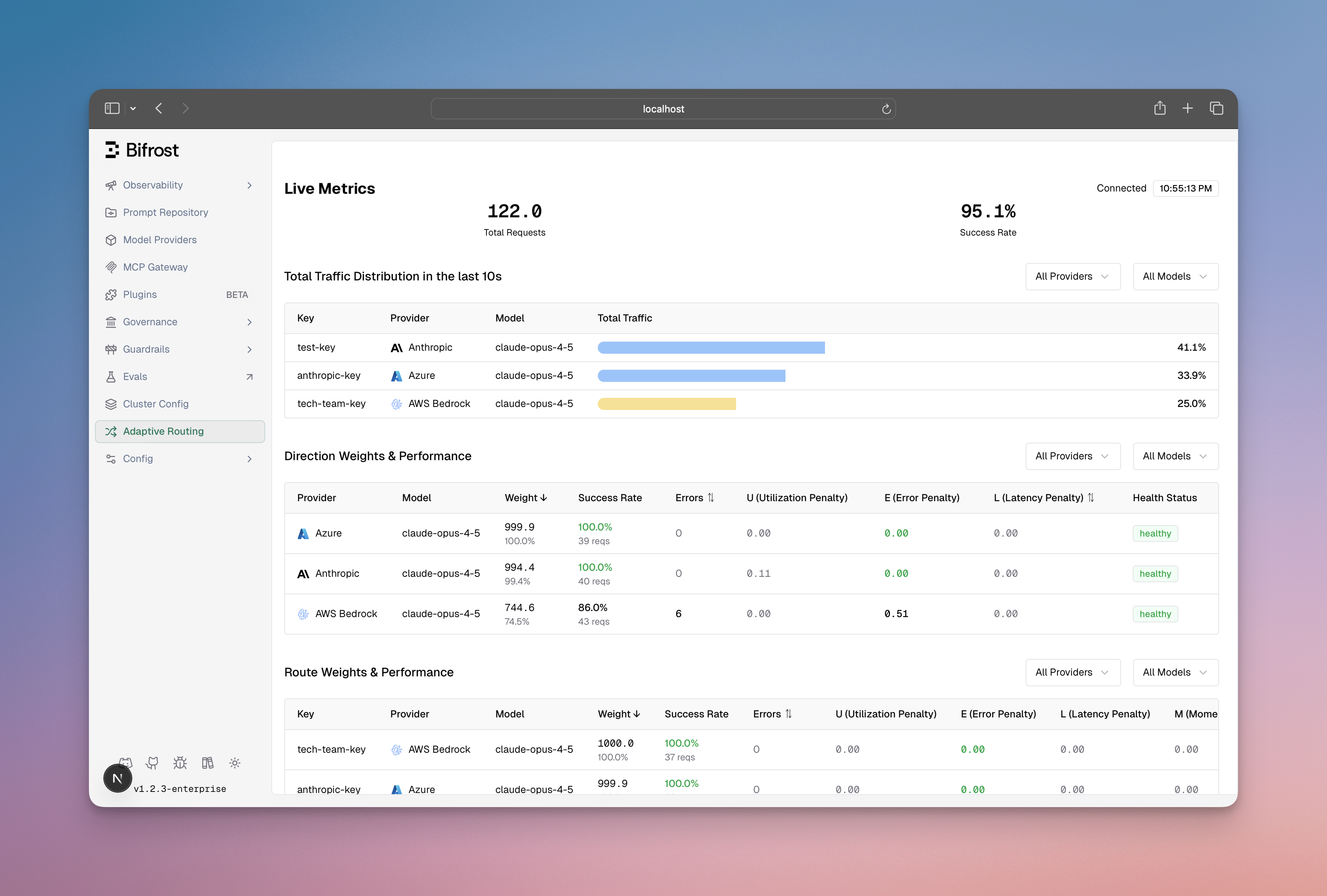

Dashboard Visibility

Monitor load balancing performance in real-time:

- Weight distribution across provider-model-key routes

- Performance metrics (error rates, latency, success rates)

- State transitions (Healthy → Degraded → Failed → Recovering)

- Actual vs expected traffic distribution

How Governance and Load Balancing Interact

When both methods are available in your Bifrost deployment, they work together in a complementary way across two levels.

Execution Flow

Execution Order

- HTTPTransportIntercept (Governance Plugin - Provider Level)
  - Runs first in the request pipeline
  - Checks if Virtual Key has provider_configs
  - If yes: adds provider prefix (e.g., azure/gpt-4o)
  - Result: Provider is selected by governance rules

- Middleware (Load Balancing Plugin - Provider Level / Direction)
  - Runs after HTTPTransportIntercept
  - Checks if model string contains "/"
  - If yes: skips provider selection (already determined by governance or user)
  - If no: performs performance-based provider selection
  - Result: Provider prefix added if not already present

- KeySelector (Load Balancing - Key Level / Route)
  - Always runs during request execution in Bifrost core
  - Gets all keys for the selected provider
  - Filters keys based on model restrictions
  - Scores each key by performance metrics
  - Selects best key using weighted random + exploration
  - Result: Optimal key selected within the provider
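The three stages above can be modeled as a small pipeline in which each stage acts only if an earlier one has not already decided. This is a schematic sketch. The function names, the `pick_provider` callback, and the trivial "first key" choice in the last stage are placeholders, and how governance treats a model string that already carries a prefix is an assumption.

```python
from typing import Callable, Dict, List, Optional, Tuple


def governance_stage(model: str, vk_provider: Optional[str]) -> str:
    """Stage 1: prefix the model when governance picked a provider for the Virtual Key."""
    return f"{vk_provider}/{model}" if vk_provider and "/" not in model else model


def load_balancing_stage(model: str, pick_provider: Callable[[str], str]) -> str:
    """Stage 2: choose a provider only if the model string has no "/" yet."""
    return model if "/" in model else f"{pick_provider(model)}/{model}"


def key_selector_stage(model: str, keys: Dict[str, List[str]]) -> str:
    """Stage 3: always runs; here it simply returns the provider's first key."""
    provider = model.split("/", 1)[0]
    return keys[provider][0]


def route(model: str, vk_provider: Optional[str],
          pick_provider: Callable[[str], str],
          keys: Dict[str, List[str]]) -> Tuple[str, str]:
    """Run the stages in order and return (provider/model, selected key)."""
    resolved = load_balancing_stage(governance_stage(model, vk_provider), pick_provider)
    return resolved, key_selector_stage(resolved, keys)
```

The "/" check in the second stage is what lets a governance or user choice pass through untouched while key selection still runs.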

Important: Even when governance specifies azure/gpt-4o, load balancing still optimizes which Azure key to use based on performance metrics. This is the power of the two-level architecture!

Example Scenarios

- Governance Only

- Load Balancing Only

- Both Available (Governance + Load Balancing)

- Manual Provider Selection

Governance Only

Setup:

- Virtual Key has provider_configs defined
- No adaptive load balancing enabled

Behavior:

- Governance applies weighted provider routing → selects Azure (70% weight)
- Model becomes azure/gpt-4o
- Standard key selection (non-adaptive) chooses an Azure key based on static weights
- Request forwarded to Azure with selected key

Provider vs Key Selection Rules

| Scenario | Provider Selection | Key Selection |
|---|---|---|
| VK with provider_configs | Governance (weighted random) | Standard or Adaptive (if enabled) |
| VK without provider_configs + LB | Blocked (empty = no providers allowed) | N/A |
| No VK + LB | Load Balancing Level 1 (performance) | Load Balancing Level 2 (performance) |
| Model with provider prefix + LB | Skip (already specified) | Load Balancing Level 2 (performance) ✅ |
| No Load Balancing enabled | Governance or User or Model Catalog | Standard (static weights) |

Critical Insight:

- Provider selection respects the hierarchy: Governance → Load Balancing Level 1 → User specification

- Key selection runs independently and benefits from load balancing even when provider is predetermined

Routing Rules (Dynamic Expression-Based Routing)

Position in routing pipeline: Routing Rules execute before governance

provider selection and can override it. They are evaluated before adaptive

load balancing, enabling dynamic provider/model overrides based on runtime

conditions like headers, parameters, capacity metrics, and organizational

hierarchy.

Overview

Routing Rules provide sophisticated, expression-based control over request routing using CEL expressions. Unlike governance routing (static weights), routing rules evaluate conditions dynamically at request time.

When Routing Rules Execute

How It Works

- Routing rules evaluate first in scope precedence order (VirtualKey → Team → Customer → Global)

- If a routing rule matches: provider/model/fallbacks are overridden, governance provider_configs are skipped

- If no routing rule matches: governance provider selection runs (weighted random)

- Load balancing Level 1: skipped if provider already determined (has "/" prefix)

- Load balancing Level 2 (key selection): always runs to select the best key within the determined provider
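First-match-wins evaluation across scopes can be sketched as a sort followed by a linear scan. In this illustration, plain Python predicates stand in for CEL expressions. The tuple layout, scope identifiers, and function name are hypothetical, and the within-scope ordering follows the Priority Ordering feature (lower values first).

```python
from typing import Callable, Dict, List, Optional, Tuple

# Scope precedence from the list above: VirtualKey -> Team -> Customer -> Global.
SCOPE_ORDER = ["virtual_key", "team", "customer", "global"]

# (scope, priority, condition, target); the condition stands in for a CEL expression.
Rule = Tuple[str, int, Callable[[Dict], bool], str]


def match_rule(rules: List[Rule], ctx: Dict) -> Optional[str]:
    """Return the target of the first matching rule, or None to fall through to governance."""
    ordered = sorted(rules, key=lambda r: (SCOPE_ORDER.index(r[0]), r[1]))
    for _scope, _priority, condition, target in ordered:
        if condition(ctx):
            return target
    return None
```

A return value of None corresponds to the case where no routing rule matches and governance provider selection runs instead.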

Available CEL Variables

Routing rules access request context through CEL variables.

Examples

- Route based on user tier
- Route to fallback when budget high
- Route by team
- Complex multi-condition routing

Scope Hierarchy

Rules are evaluated in organizational precedence order (first-match-wins): VirtualKey → Team → Customer → Global.

Key Features

| Feature | Description |
|---|---|
| CEL Expressions | Powerful, composable condition language with multiple operators |
| Scope Hierarchy | Rules at VirtualKey/Team/Customer/Global levels with proper precedence |
| Dynamic Override | Override provider and/or model based on runtime conditions |
| Fallback Chains | Define multiple fallback providers for automatic failover |
| Priority Ordering | Lower priority values are evaluated first within the same scope |
| Capacity Awareness | Access real-time budget and rate limit usage percentages |

Integration with Governance

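Routing Rules execute before governance provider selection and can override it. If a routing rule matches, provider/model/fallbacks are overridden and governance provider_configs are skipped; if no rule matches, governance provider selection runs.

Integration with Load Balancing

Routing Rules work before load balancing: Level 1 provider selection is skipped once a rule has determined the provider, while Level 2 key selection always runs.

Use Cases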

- Tier-based routing: Premium users → fast providers

- Capacity failover: High budget usage → cheaper providers

- Team preferences: Different teams → different providers

- A/B testing: Route subset of traffic to test models

- Regional routing: EU users → EU providers (data residency)

- Complex logic: Combine multiple conditions for sophisticated routing

Dashboard & API

Routing rules can be configured through:

- Dashboard: Visual rule builder with CEL expression editor
- API: POST /api/governance/routing-rules and related endpoints
- Scope: Create rules at global, customer, team, or virtual key levels
- Priority: Order rules within scope with numeric priority

Choosing the Right Approach

- Use Governance When:
  - ✅ Compliance requirements: Need to ensure data stays in specific regions or providers
  - ✅ Cost optimization: Want explicit control over traffic distribution to cheaper providers
  - ✅ Budget enforcement: Need hard limits on spending per provider
  - ✅ Environment separation: Different teams/apps need different provider access
  - ✅ Rate limit management: Need to respect provider-specific rate limits

- Use Routing Rules When:
  - ✅ Dynamic routing: Route based on runtime request context (headers, parameters)
  - ✅ Capacity-aware routing: Switch to fallback when budget/rate limits high
  - ✅ Organization-based routing: Different rules for teams/customers
  - ✅ A/B testing: Route subset of traffic to test new models
  - ✅ Complex conditions: Multiple criteria (e.g., tier + capacity + team)

- Use Load Balancing When:
  - ✅ Performance optimization: Want automatic routing to best-performing providers
  - ✅ Minimal configuration: Prefer hands-off operation with intelligent defaults
  - ✅ Dynamic workloads: Traffic patterns change frequently
  - ✅ Automatic failover: Need instant adaptation to provider issues
  - ✅ Multi-provider redundancy: Want seamless provider switching based on availability

- Use All Three Together:
  - ✅ Complete solution: Governance provides base routing, routing rules add dynamic override, load balancing optimizes keys
  - ✅ Maximum flexibility: Different Virtual Keys use different strategies (governance vs routing rules vs load balancing)
  - ✅ Enterprise deployments: Complex organizations with multiple requirements per layer

Additional Resources

Governance Routing

Configuration instructions for setting up governance routing via Virtual

Keys (Web UI, API, config.json)

Routing Rules

Dynamic, expression-based routing using CEL expressions for runtime

conditions

Adaptive Load Balancing

Technical implementation details: scoring algorithms, weight calculations,

and performance characteristics

Virtual Keys

Learn how to create and configure Virtual Keys

Fallbacks

Understand how automatic fallbacks work across providers