
Overview

Guardrails in Bifrost provide enterprise-grade content safety, security validation, and policy enforcement for LLM requests and responses. The system validates inputs and outputs in real time against the policies you specify, protecting against harmful content, prompt injection, PII leakage, and policy violations.

Supported Providers

AWS Bedrock Guardrails: Enterprise content filtering, PII detection, and prompt attack prevention.

Azure Content Safety: Multi-modal content moderation with severity-based filtering.

GraySwan Cygnal: AI safety monitoring with natural language rule definitions.

Patronus AI: LLM security, hallucination detection, and safety evaluation.

Core Concepts

Bifrost Guardrails are built around two core concepts that work together to provide flexible and powerful content protection:

| Concept | Description |
| --- | --- |
| Rules | Custom policies defined using CEL (Common Expression Language) that determine what content to validate and when. Rules can apply to inputs, outputs, or both, and can be linked to one or more profiles for evaluation. |
| Profiles | Configurations for external guardrail providers (AWS Bedrock, Azure Content Safety, GraySwan, Patronus AI). Profiles are reusable and can be shared across multiple rules. |

How They Work Together:

Profiles define how content is evaluated using external provider capabilities

Rules define when and what content gets evaluated using CEL expressions

A single rule can use multiple profiles for layered protection

Profiles can be reused across different rules for consistency
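The relationship between rules and profiles can be pictured as a small data model. The sketch below is illustrative only: the field names follow the property tables in this document, while the `Profile` and `Rule` classes and the `profiles_for` helper are hypothetical and not part of Bifrost.

```python
from dataclasses import dataclass, field


@dataclass
class Profile:
    # Field names mirror the "Profile Properties" table.
    id: int
    provider_name: str
    policy_name: str
    enabled: bool = True


@dataclass
class Rule:
    # Field names mirror the "Rule Properties" table.
    id: int
    name: str
    cel_expression: str
    apply_to: str  # "input", "output", or "both"
    provider_config_ids: list[int] = field(default_factory=list)


def profiles_for(rule, profiles):
    """Hypothetical helper: resolve a rule's linked profile IDs to profiles."""
    by_id = {p.id: p for p in profiles}
    return [by_id[i] for i in rule.provider_config_ids if i in by_id]


profiles = [
    Profile(1, "bedrock", "PII Detection Profile"),
    Profile(2, "azure", "Content Safety Profile"),
]
pii_rule = Rule(1, "Block PII in Prompts", "true", "input", [1, 2])
filter_rule = Rule(2, "Content Filter for Responses", "true", "output", [2])
```

Here profile 2 is shared by both rules, and `pii_rule` layers two profiles, which is the many-to-many relationship described above.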

Key Features

| Feature | Description |
| --- | --- |
| Multi-Provider Support | AWS Bedrock, Azure Content Safety, GraySwan, and Patronus AI integration |
| Dual-Stage Validation | Guard both inputs (prompts) and outputs (responses) |
| Real-Time Processing | Synchronous and asynchronous validation modes |
| CEL-Based Rules | Define custom policies using Common Expression Language |
| Reusable Profiles | Configure providers once, use across multiple rules |
| Sampling Control | Apply rules to a percentage of requests for performance tuning |
| Automatic Remediation | Block, redact, or modify content based on policy |
| Comprehensive Logging | Detailed audit trails for compliance |

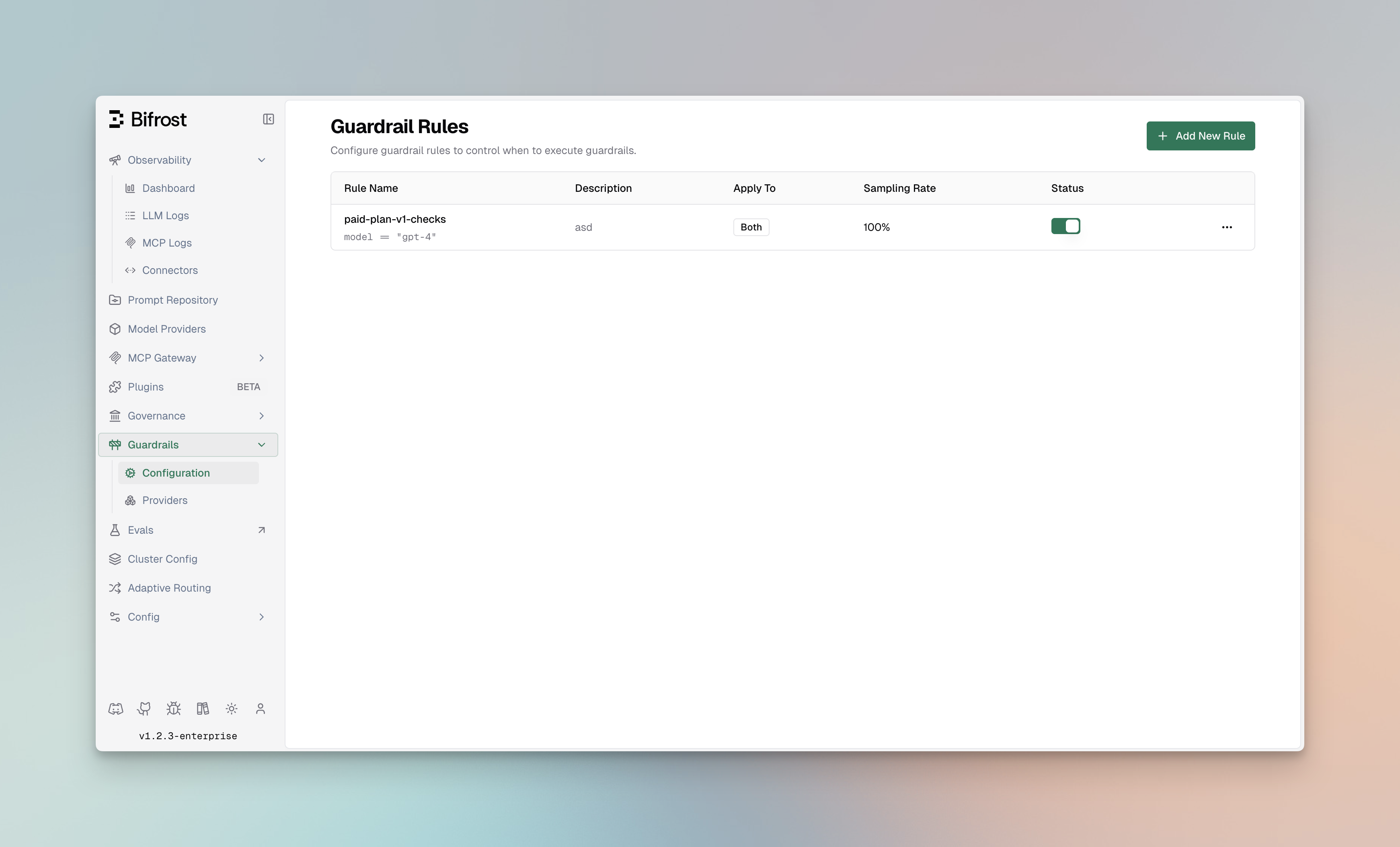

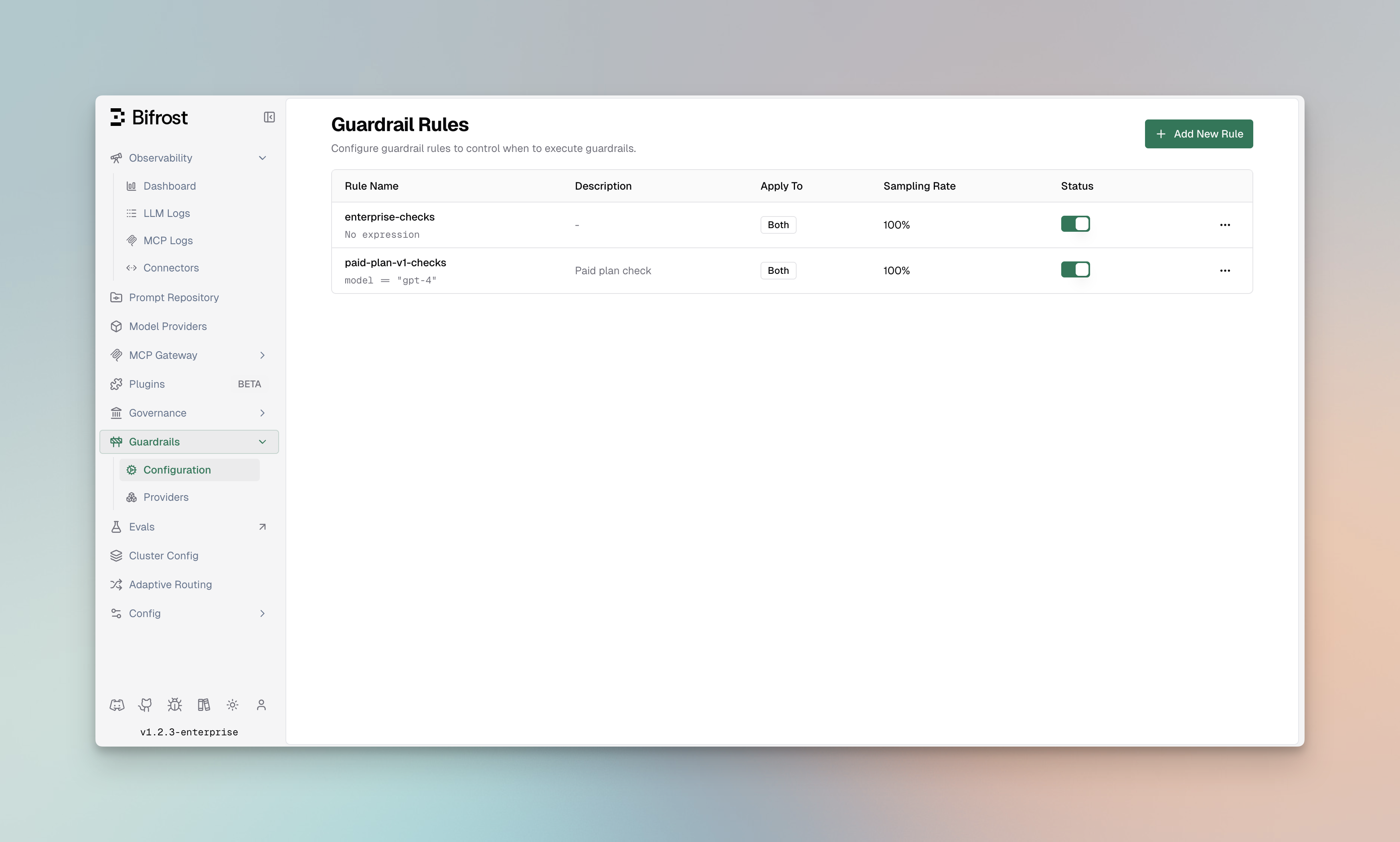

Navigating Guardrails in the UI

Access Guardrails from the Bifrost dashboard:

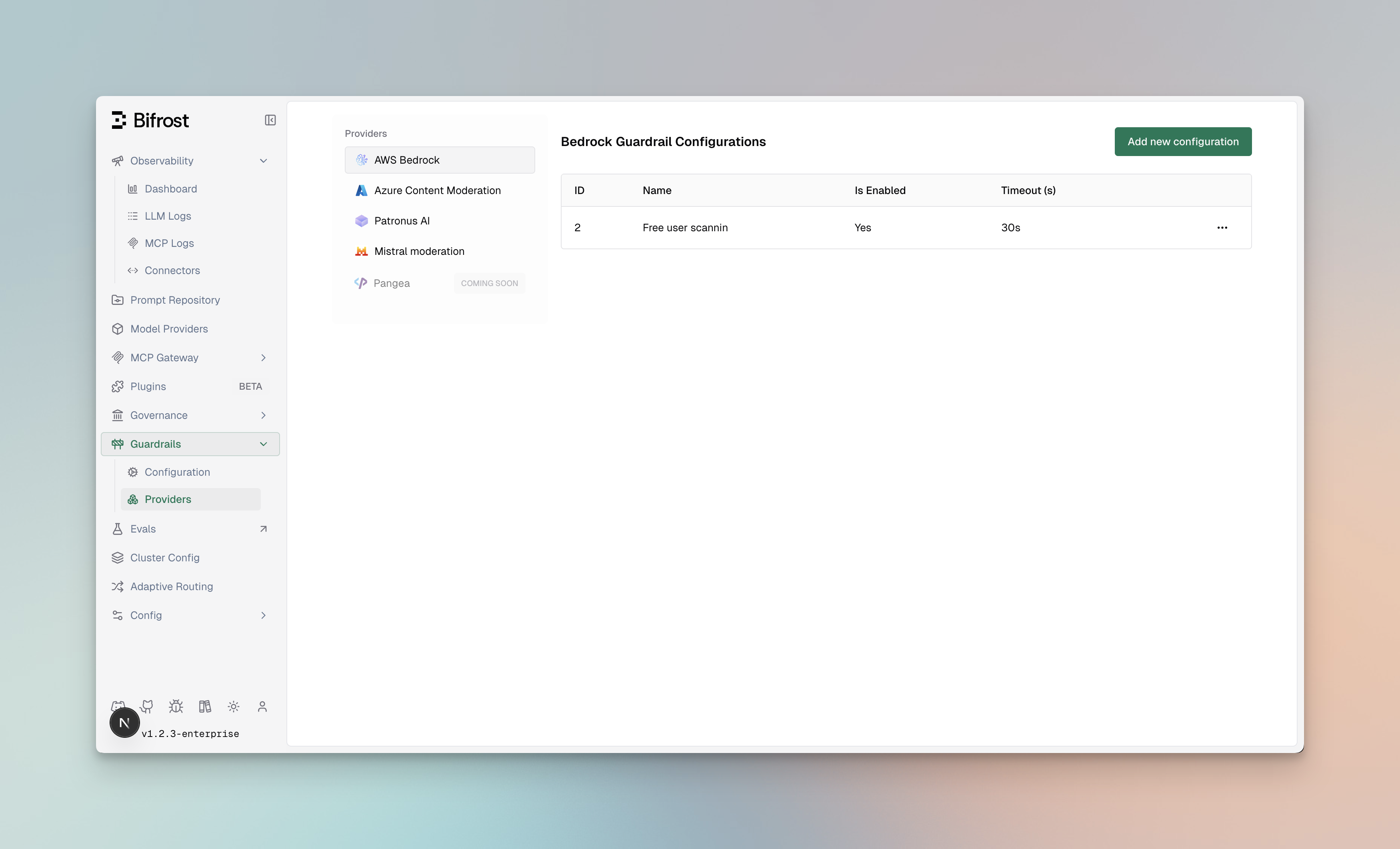

| Page | Path | Description |
| --- | --- | --- |
| Configuration | Guardrails > Configuration | Manage guardrail rules and their settings |
| Providers | Guardrails > Providers | Configure and manage guardrail profiles |

Architecture

The following diagram illustrates how Rules and Profiles work together to validate LLM requests:

Flow Description:

Incoming Request - LLM request arrives at Bifrost

Input Validation - Applicable rules evaluate the input using linked profiles

LLM Processing - If input passes, request is forwarded to the LLM provider

Output Validation - Response is evaluated by output rules using linked profiles

Response - Validated response is returned (or blocked/modified based on violations)
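The flow above can be sketched as a short function. This is a simplified illustration under stated assumptions, not Bifrost's implementation: `handle_request` and its three callables are hypothetical, and a verdict is reduced to the strings `"pass"` or `"block"`.

```python
def handle_request(request, check_input, call_llm, check_output):
    """Hypothetical pipeline: validate input, call the LLM, validate output."""
    # Input Validation: stop before the LLM is called if the input is blocked.
    if check_input(request) == "block":
        return {"status": "blocked", "stage": "input"}

    # LLM Processing: only reached when the input passed.
    response = call_llm(request)

    # Output Validation: the response is checked before it is returned.
    if check_output(response) == "block":
        return {"status": "blocked", "stage": "output"}

    return {"status": "ok", "response": response}
```

The point of the ordering is that a blocked input never reaches the LLM provider, while a blocked output has already incurred the provider call.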

Guardrail Rules

Guardrail Rules are custom policies that define when and how content validation occurs. Rules use CEL (Common Expression Language) expressions to evaluate requests and can be linked to one or more profiles for execution.

Rule Properties

| Property | Type | Required | Description |
| --- | --- | --- | --- |
| id | integer | Yes | Unique identifier for the rule |
| name | string | Yes | Descriptive name for the rule |
| description | string | No | Explanation of what the rule does |
| enabled | boolean | Yes | Whether the rule is active |
| cel_expression | string | Yes | CEL expression for rule evaluation |
| apply_to | enum | Yes | When to apply: input, output, or both |
| sampling_rate | integer | No | Percentage of requests to evaluate (0-100) |
| timeout | integer | No | Execution timeout in milliseconds |
| provider_config_ids | array | No | IDs of profiles to use for evaluation |

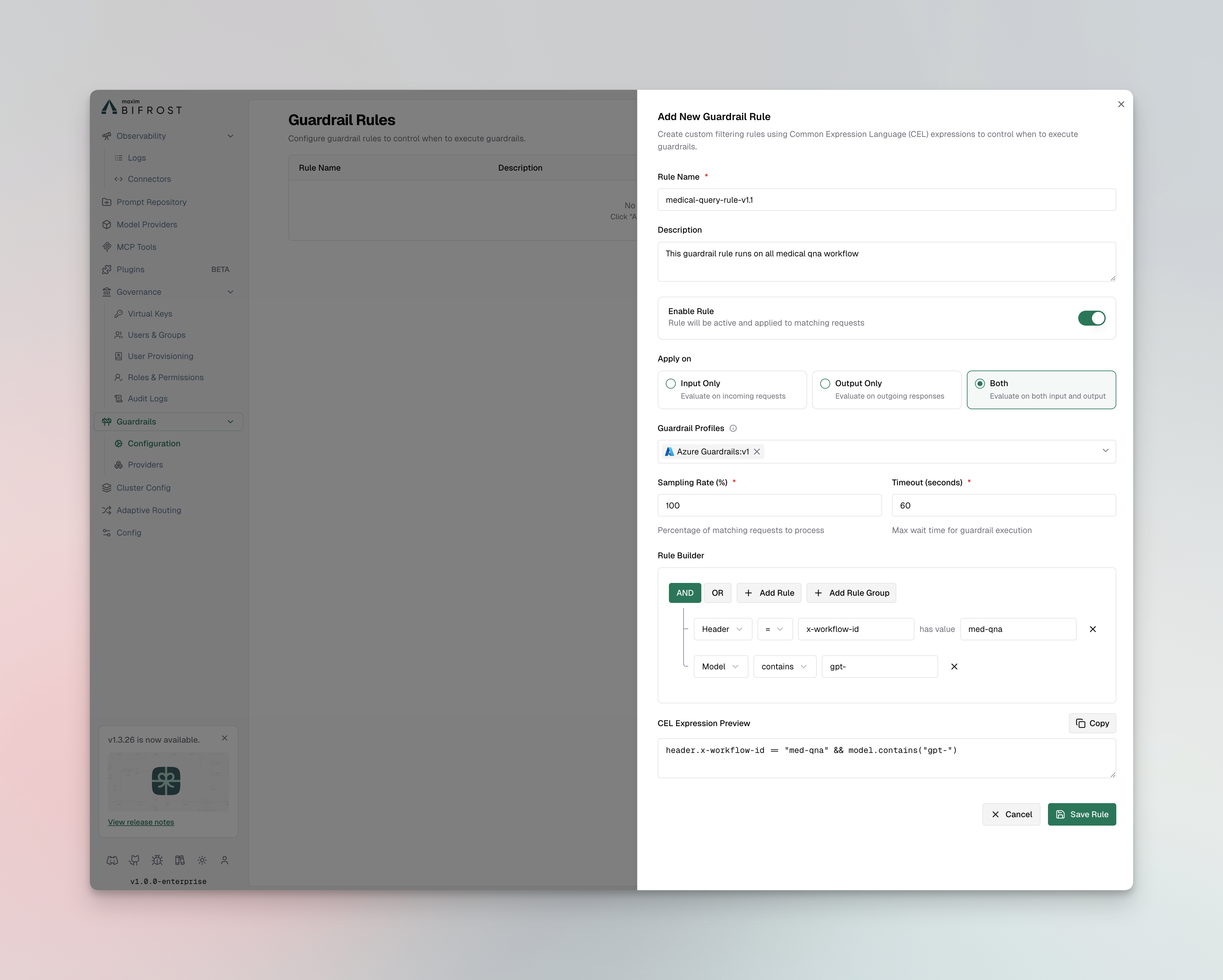

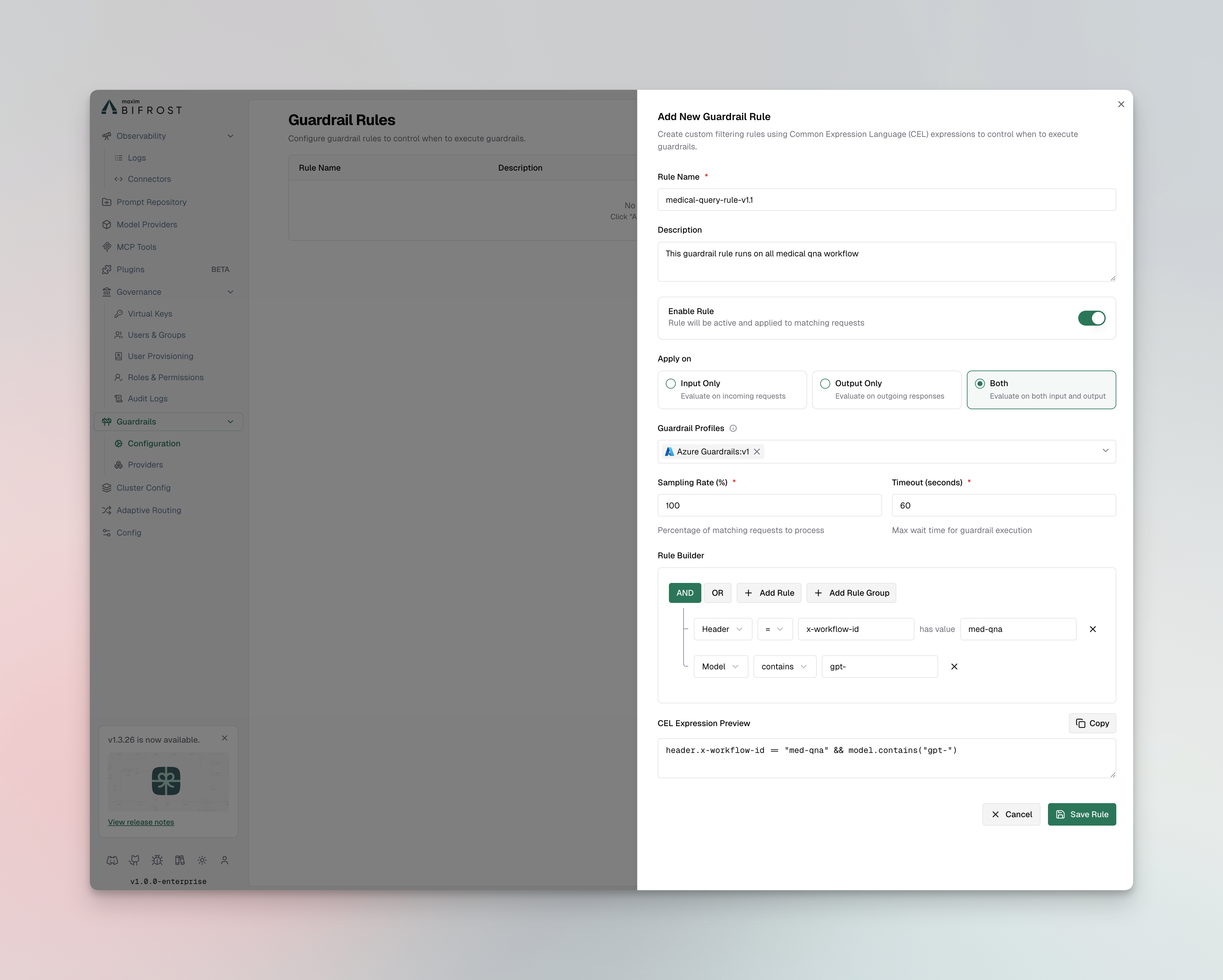
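The rule properties can be checked client-side before a rule is submitted. The following is a minimal sketch based only on the Rule Properties table; `validate_rule` is a hypothetical helper, and Bifrost's own validation may differ.

```python
APPLY_TO = {"input", "output", "both"}
REQUIRED = {"id": int, "name": str, "enabled": bool,
            "cel_expression": str, "apply_to": str}
OPTIONAL = {"description": str, "sampling_rate": int,
            "timeout": int, "provider_config_ids": list}


def validate_rule(rule):
    """Return a list of problems found in a rule dict (empty if none)."""
    errors = []
    for key, expected in {**REQUIRED, **OPTIONAL}.items():
        if key not in rule:
            if key in REQUIRED:
                errors.append(f"missing required field: {key}")
        # Exact type check so that True/False is not accepted as an integer.
        elif type(rule[key]) is not expected:
            errors.append(f"{key} should be {expected.__name__}")
    apply_to = rule.get("apply_to")
    if isinstance(apply_to, str) and apply_to not in APPLY_TO:
        errors.append("apply_to must be input, output, or both")
    rate = rule.get("sampling_rate")
    if type(rate) is int and not 0 <= rate <= 100:
        errors.append("sampling_rate must be between 0 and 100")
    return errors


example_rule = {
    "id": 1,
    "name": "Block PII in Prompts",
    "enabled": True,
    "cel_expression": 'request.messages.exists(m, m.role == "user")',
    "apply_to": "input",
    "sampling_rate": 100,
    "timeout": 5000,
    "provider_config_ids": [1, 2],
}
```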

Creating Rules

Rules can be created through the Web UI, the API, config.json, or Helm.

Web UI

Navigate to Rules

Go to Guardrails > Configuration

Click Add Rule

Configure Rule Settings

Basic Information:

Name: Enter a descriptive name (e.g., “Block PII in Prompts”)

Description: Explain the rule’s purpose

Enabled: Toggle to activate the rule

Evaluation Settings:

Apply To: Select when to apply the rule

input - Validate incoming prompts only

output - Validate LLM responses only

both - Validate both inputs and outputs

CEL Expression: Define the validation logic

Sampling Rate: Set percentage of requests to evaluate (default: 100%)

Timeout: Set maximum execution time in milliseconds

Link Profiles

Select one or more profiles to use for evaluation

Rules will execute all linked profiles in sequence

Save and Test

Click Save Rule

Use the Test button to validate with sample content
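To make the Sampling Rate setting concrete, here is one way percentage-based sampling could work. This is an assumption for illustration: the document does not describe how Bifrost selects requests, and `should_evaluate` is a hypothetical name.

```python
import random


def should_evaluate(sampling_rate, rng=random):
    """Return True for roughly `sampling_rate` percent of calls.

    rng.random() is in [0.0, 1.0), so a rate of 100 always evaluates
    and a rate of 0 never does.
    """
    return rng.random() * 100 < sampling_rate
```

With a rate of 50, about half of the requests would be sent to the linked profiles, which trades coverage for lower latency and provider cost on high-traffic endpoints.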

API

Create a Guardrail Rule:

    curl -X POST http://localhost:8080/api/enterprise/guardrails/rules \
      -H "Content-Type: application/json" \
      -d '{
        "id": 1,
        "name": "Block PII in Prompts",
        "description": "Prevent PII from being sent to LLM providers",
        "enabled": true,
        "cel_expression": "request.messages.exists(m, m.role == \"user\")",
        "apply_to": "input",
        "sampling_rate": 100,
        "timeout": 5000,
        "provider_config_ids": [1, 2]
      }'

List All Rules:

    curl -X GET http://localhost:8080/api/enterprise/guardrails/rules \
      -H "Content-Type: application/json"

    # Response
    {
      "rules": [
        {
          "id": 1,
          "name": "Block PII in Prompts",
          "description": "Prevent PII from being sent to LLM providers",
          "enabled": true,
          "cel_expression": "request.messages.exists(m, m.role == \"user\")",
          "apply_to": "input",
          "sampling_rate": 100,
          "timeout": 5000,
          "provider_config_ids": [1, 2]
        }
      ]
    }

Update a Rule:

    curl -X PUT http://localhost:8080/api/enterprise/guardrails/rules/1 \
      -H "Content-Type: application/json" \
      -d '{
        "enabled": false,
        "sampling_rate": 50
      }'

Delete a Rule:

    curl -X DELETE http://localhost:8080/api/enterprise/guardrails/rules/1
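The same calls can be prepared from Python using only the standard library. The sketch below builds the request objects without sending them; the endpoint URL comes from the curl examples above, while `rule_request` is a hypothetical helper name.

```python
import json
import urllib.request

RULES_URL = "http://localhost:8080/api/enterprise/guardrails/rules"


def rule_request(method, rule_id=None, payload=None):
    """Build (but do not send) a request for the rules endpoint."""
    url = RULES_URL if rule_id is None else f"{RULES_URL}/{rule_id}"
    data = json.dumps(payload).encode("utf-8") if payload is not None else None
    return urllib.request.Request(
        url,
        data=data,
        method=method,
        headers={"Content-Type": "application/json"},
    )


# Mirrors the "Update a Rule" curl example.
update = rule_request("PUT", rule_id=1,
                      payload={"enabled": False, "sampling_rate": 50})
```

Passing a request to `urllib.request.urlopen` would send it, which requires a running Bifrost instance at that address.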

config.json

    {
      "guardrails_config": {
        "guardrail_rules": [
          {
            "id": 1,
            "name": "Block PII in Prompts",
            "description": "Prevent PII from being sent to LLM providers",
            "enabled": true,
            "cel_expression": "request.messages.exists(m, m.role == \"user\")",
            "apply_to": "input",
            "sampling_rate": 100,
            "timeout": 5000,
            "provider_config_ids": [1, 2]
          },
          {
            "id": 2,
            "name": "Content Filter for Responses",
            "description": "Filter harmful content from LLM responses",
            "enabled": true,
            "cel_expression": "true",
            "apply_to": "output",
            "sampling_rate": 100,
            "timeout": 3000,
            "provider_config_ids": [2]
          },
          {
            "id": 3,
            "name": "Prompt Injection Detection",
            "description": "Detect and block prompt injection attempts",
            "enabled": true,
            "cel_expression": "request.messages.size() > 0",
            "apply_to": "input",
            "sampling_rate": 100,
            "timeout": 2000,
            "provider_config_ids": [1]
          }
        ]
      }
    }

Helm

    guardrails_config:
      guardrail_rules:
        - id: 1
          name: "Block PII in Prompts"
          description: "Prevent PII from being sent to LLM providers"
          enabled: true
          cel_expression: "request.messages.exists(m, m.role == 'user')"
          apply_to: "input"
          sampling_rate: 100
          timeout: 5000
          provider_config_ids: [1, 2]
        - id: 2
          name: "Content Filter for Responses"
          description: "Filter harmful content from LLM responses"
          enabled: true
          cel_expression: "true"
          apply_to: "output"
          sampling_rate: 100
          timeout: 3000
          provider_config_ids: [2]

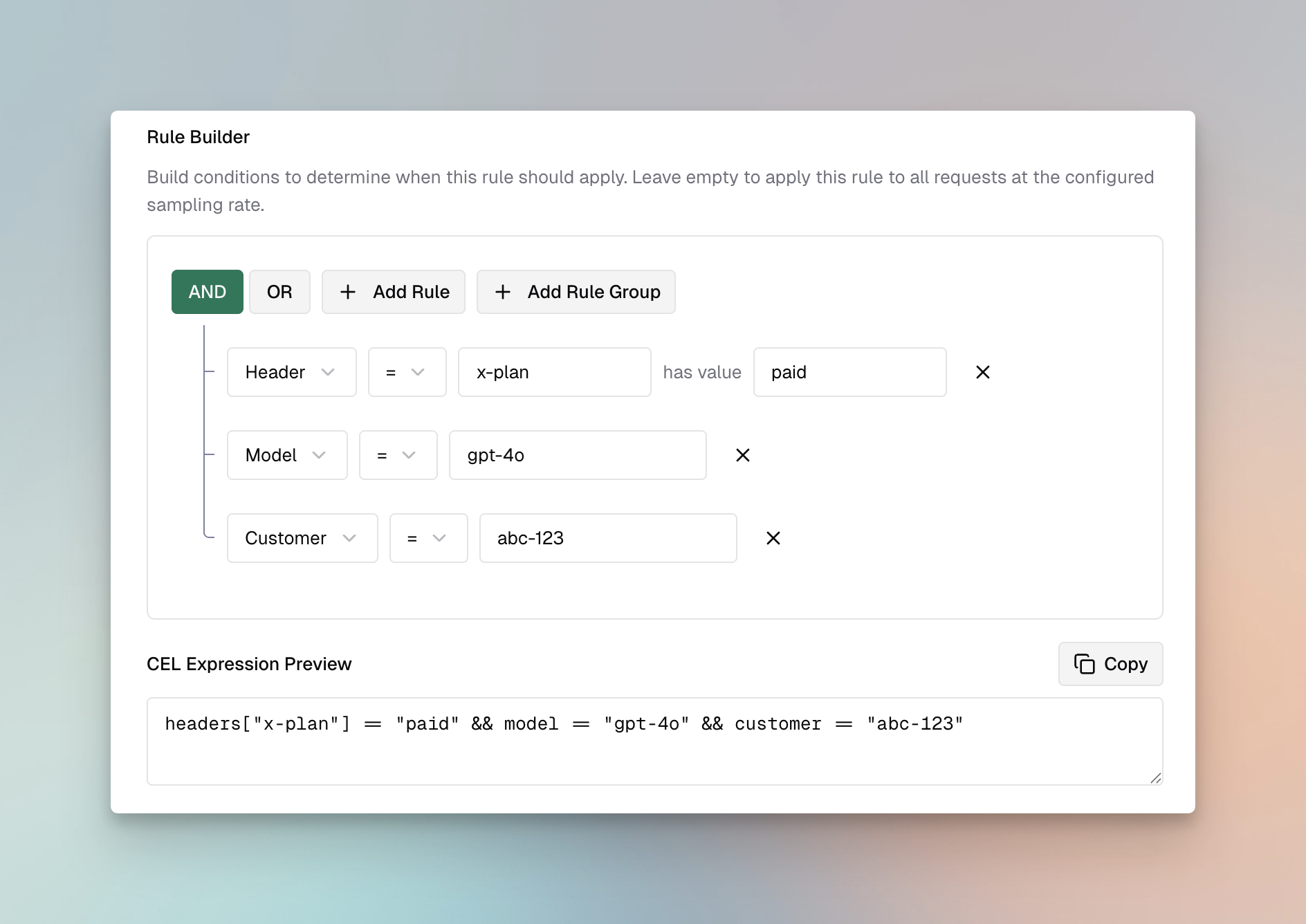

CEL Expression Examples

CEL (Common Expression Language) provides a powerful way to define rule conditions. Here are common patterns:

Always Apply Rule:

    true

Apply to User Messages Only:

    request.messages.exists(m, m.role == "user")

Apply to Messages Containing Keywords:

    request.messages.exists(m, m.content.contains("confidential"))

Apply Based on Model:

    request.model.startsWith("gpt-4")

Apply to Long Prompts:

    request.messages.filter(m, m.role == "user").map(m, m.content.size()).sum() > 1000

Combine Multiple Conditions:

    request.model.startsWith("gpt-4") && request.messages.exists(m, m.role == "user" && m.content.size() > 500)
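For readers less familiar with CEL, the patterns above can be read as the following Python equivalents. This is only an illustration of the semantics on a plain dictionary; Bifrost evaluates CEL, not Python, and the function names are hypothetical.

```python
def has_user_message(request):
    # request.messages.exists(m, m.role == "user")
    return any(m["role"] == "user" for m in request["messages"])


def mentions(request, keyword):
    # request.messages.exists(m, m.content.contains(keyword))
    return any(keyword in m["content"] for m in request["messages"])


def model_starts_with(request, prefix):
    # request.model.startsWith(prefix)
    return request["model"].startswith(prefix)


def user_prompt_chars(request):
    # Total size of user message content, as in the "long prompts" pattern.
    return sum(len(m["content"]) for m in request["messages"]
               if m["role"] == "user")


sample = {
    "model": "gpt-4o-mini",
    "messages": [
        {"role": "system", "content": "You are helpful."},
        {"role": "user", "content": "This is confidential"},
    ],
}
```

Note that `startsWith("gpt-4")` also matches model names such as `gpt-4o-mini`, so a prefix condition selects a whole model family.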

Linking Rules to Profiles

Rules can be linked to multiple profiles for comprehensive validation.

Best Practices:

Link PII detection rules to profiles with PII capabilities (Bedrock, Patronus)

Link content filtering rules to profiles with content safety features (Azure, Bedrock, GraySwan)

Use GraySwan for custom natural language rules when you need flexible, readable policies

Use multiple profiles for defense-in-depth (e.g., Bedrock + Patronus for PII, Azure + GraySwan for content)

Set appropriate timeouts when using multiple profiles
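The advice about timeouts matters because linked profiles run in sequence, so their latencies add up. The sketch below assumes the rule's timeout acts as a single budget shared by all linked profiles; the document does not confirm this, and `run_profiles` is a hypothetical helper.

```python
import time


def run_profiles(profiles, content, timeout_ms):
    """Run (name, check) pairs in order until the time budget is spent.

    Assumption: one deadline covers every linked profile. Profiles that
    would start after the deadline are reported as "timeout".
    """
    deadline = time.monotonic() + timeout_ms / 1000
    results = []
    for name, check in profiles:
        if time.monotonic() >= deadline:
            results.append((name, "timeout"))
            continue
        results.append((name, check(content)))
    return results


checks = [
    ("bedrock", lambda text: "pass"),
    ("patronus_ai", lambda text: "block" if "SSN" in text else "pass"),
]
```

Under this assumption, a rule that links several slow profiles needs a timeout at least as large as the sum of their typical response times.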

Managing Profiles

Profiles are reusable configurations for external guardrail providers. Each profile contains provider-specific settings including credentials, endpoints, and detection thresholds.

Profile Properties

| Property | Type | Required | Description |
| --- | --- | --- | --- |
| id | integer | Yes | Unique identifier for the profile |
| provider_name | string | Yes | Provider type: bedrock, azure, grayswan, patronus_ai |
| policy_name | string | Yes | Descriptive name for the policy |
| enabled | boolean | Yes | Whether the profile is active |
| config | object | No | Provider-specific configuration |

Creating Profiles

Profiles can be created through the Web UI, the API, config.json, or Helm.

Web UI

Navigate to Providers

Go to Guardrails > Providers

Click Add Profile

Select Provider Type

Choose from: AWS Bedrock, Azure Content Safety, GraySwan, or Patronus AI

Configure Provider Settings

Enter credentials and endpoint information

Configure detection thresholds and actions

See provider-specific setup sections above for detailed configuration

Save Profile

Click Save Profile

The profile is now available for linking to rules

API

Create a Profile:

    curl -X POST http://localhost:8080/api/enterprise/guardrails/providers \
      -H "Content-Type: application/json" \
      -d '{
        "id": 1,
        "provider_name": "bedrock",
        "policy_name": "PII Detection Profile",
        "enabled": true,
        "config": {
          "access_key": "${AWS_ACCESS_KEY_ID}",
          "secret_key": "${AWS_SECRET_ACCESS_KEY}",
          "guardrail_arn": "arn:aws:bedrock:us-east-1:123456789:guardrail/abc123",
          "guardrail_version": "1",
          "region": "us-east-1"
        }
      }'

List All Profiles:

    curl -X GET http://localhost:8080/api/enterprise/guardrails/providers \
      -H "Content-Type: application/json"

    # Response
    {
      "providers": [
        {
          "id": 1,
          "provider_name": "bedrock",
          "policy_name": "PII Detection Profile",
          "enabled": true
        },
        {
          "id": 2,
          "provider_name": "azure",
          "policy_name": "Content Safety Profile",
          "enabled": true
        }
      ]
    }

Update a Profile:

    curl -X PUT http://localhost:8080/api/enterprise/guardrails/providers/1 \
      -H "Content-Type: application/json" \
      -d '{
        "enabled": false
      }'

Delete a Profile:

    curl -X DELETE http://localhost:8080/api/enterprise/guardrails/providers/1

config.json

    {
      "guardrails_config": {
        "guardrail_providers": [
          {
            "id": 1,
            "provider_name": "bedrock",
            "policy_name": "PII Detection Profile",
            "enabled": true,
            "config": {
              "access_key": "${AWS_ACCESS_KEY_ID}",
              "secret_key": "${AWS_SECRET_ACCESS_KEY}",
              "guardrail_arn": "arn:aws:bedrock:us-east-1:123456789:guardrail/abc123",
              "guardrail_version": "1",
              "region": "us-east-1"
            }
          },
          {
            "id": 2,
            "provider_name": "azure",
            "policy_name": "Content Safety Profile",
            "enabled": true,
            "config": {
              "endpoint": "https://your-resource.cognitiveservices.azure.com/",
              "api_key": "${AZURE_CONTENT_SAFETY_API_KEY}",
              "analyze_enabled": true,
              "analyze_severity_threshold": "medium",
              "jailbreak_shield_enabled": true,
              "indirect_attack_shield_enabled": true
            }
          },
          {
            "id": 3,
            "provider_name": "grayswan",
            "policy_name": "Custom Safety Rules",
            "enabled": true,
            "config": {
              "api_key": "${GRAYSWAN_API_KEY}",
              "violation_threshold": 0.5,
              "reasoning_mode": "hybrid",
              "rules": {
                "no_pii": "Do not allow personally identifiable information",
                "professional_tone": "Ensure responses maintain a professional tone"
              }
            }
          },
          {
            "id": 4,
            "provider_name": "patronus_ai",
            "policy_name": "Hallucination Detection",
            "enabled": true,
            "config": {
              "api_key": "${PATRONUS_API_KEY}",
              "api_endpoint": "https://api.patronus.ai/v1"
            }
          }
        ]
      }
    }

Helm

    guardrails_config:
      guardrail_providers:
        - id: 1
          provider_name: "bedrock"
          policy_name: "PII Detection Profile"
          enabled: true
          config:
            guardrail_arn: "arn:aws:bedrock:us-east-1:123456789:guardrail/abc123"
            guardrail_version: "1"
            region: "us-east-1"
            # AWS Authentication (choose one method):
            # Option 1: Explicit credentials
            access_key: "${AWS_ACCESS_KEY_ID}"
            secret_key: "${AWS_SECRET_ACCESS_KEY}"
            # Option 2: IAM Role - omit access_key and secret_key
            # (Bifrost will use IAM credentials from the environment)
        - id: 2
          provider_name: "azure"
          policy_name: "Content Safety Profile"
          enabled: true
          config:
            endpoint: "https://your-resource.cognitiveservices.azure.com/"
            api_key: "${AZURE_CONTENT_SAFETY_API_KEY}"
            analyze_enabled: true
            analyze_severity_threshold: "medium"
            jailbreak_shield_enabled: true
        - id: 3
          provider_name: "grayswan"
          policy_name: "Custom Safety Rules"
          enabled: true
          config:
            api_key: "${GRAYSWAN_API_KEY}"
            violation_threshold: 0.5
            reasoning_mode: "hybrid"
            rules:
              no_pii: "Do not allow personally identifiable information"
              professional_tone: "Ensure responses maintain a professional tone"
        - id: 4
          provider_name: "patronus_ai"
          policy_name: "Hallucination Detection"
          enabled: true
          config:
            api_endpoint: "https://api.patronus.ai/v1"
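Because rules refer to profiles by ID, a configuration file can contain references to profiles that do not exist. The sketch below checks for that before deployment. It assumes `guardrail_rules` and `guardrail_providers` sit side by side under one `guardrails_config` object, which the separate snippets in this document suggest but do not show together; `unknown_profile_refs` is a hypothetical helper.

```python
def unknown_profile_refs(config):
    """Map rule id -> profile ids that no configured profile defines."""
    section = config.get("guardrails_config", {})
    known = {p["id"] for p in section.get("guardrail_providers", [])}
    problems = {}
    for rule in section.get("guardrail_rules", []):
        missing = [i for i in rule.get("provider_config_ids", [])
                   if i not in known]
        if missing:
            problems[rule["id"]] = missing
    return problems


config = {
    "guardrails_config": {
        "guardrail_providers": [{"id": 1}, {"id": 2}],
        "guardrail_rules": [
            {"id": 1, "provider_config_ids": [1, 2]},
            {"id": 2, "provider_config_ids": [2, 5]},
        ],
    }
}
```

For the sample above, rule 2 refers to profile 5, which is not defined, so the check reports it. A file loaded with `json.load` can be passed in the same way.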

Provider Capabilities

Each provider offers different capabilities. Choose profiles based on your validation needs:

| Capability | AWS Bedrock | Azure Content Safety | GraySwan | Patronus AI |
| --- | --- | --- | --- | --- |
| PII Detection | Yes | No | No | Yes |
| Content Filtering | Yes | Yes | Yes | Yes |
| Prompt Injection | Yes | Yes | Yes | Yes |
| Hallucination Detection | No | No | No | Yes |
| Toxicity Screening | Yes | Yes | Yes | Yes |
| Custom Policies | Yes | Yes | Yes | Yes |
| Custom Natural Language Rules | No | No | Yes | No |
| Image Support | Yes | No | No | No |
| IPI Detection | No | Yes | Yes | No |
| Mutation Detection | No | No | Yes | No |
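The capability matrix can be encoded as data to help pick providers for a given set of requirements. This is a convenience sketch: the values are copied from the table above, the provider keys follow the `provider_name` values used in this document, and the capability labels and `providers_with` helper are hypothetical.

```python
COMMON = {"content_filtering", "prompt_injection", "toxicity", "custom_policies"}

CAPABILITIES = {
    "bedrock": COMMON | {"pii", "image"},
    "azure": COMMON | {"ipi"},
    "grayswan": COMMON | {"natural_language_rules", "ipi", "mutation"},
    "patronus_ai": COMMON | {"pii", "hallucination"},
}


def providers_with(*needed):
    """Return providers (sorted by name) that offer every needed capability."""
    wanted = set(needed)
    return sorted(name for name, caps in CAPABILITIES.items() if wanted <= caps)
```

For example, `providers_with("pii")` lists the two providers marked Yes in the PII Detection row, and a combination that no single provider covers returns an empty list, which signals that several profiles need to be linked.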

Best Practices

Profile Organization:

Create separate profiles for different use cases (PII, content filtering, etc.)

Use descriptive policy names that indicate the profile’s purpose

Keep credentials secure using environment variables

Performance Considerations:

Enable only the profiles you need to minimize latency

Use sampling rates on rules for high-traffic endpoints

Set appropriate timeouts to prevent slow requests

Security:

Store API keys and credentials in environment variables or secrets managers

Regularly rotate credentials

Use least-privilege IAM roles for AWS Bedrock
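The configuration examples in this document use placeholders such as `${AWS_ACCESS_KEY_ID}` instead of literal secrets. The sketch below shows how placeholders of that shape could be expanded from environment variables. It does not describe how Bifrost resolves them; `resolve` is a hypothetical helper.

```python
import os
import re

_PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)\}")


def resolve(value, env=os.environ):
    """Recursively replace ${NAME} in strings with values from `env`.

    Raises KeyError when a referenced variable is not set, so a missing
    secret fails loudly instead of being sent as a literal placeholder.
    """
    if isinstance(value, dict):
        return {k: resolve(v, env) for k, v in value.items()}
    if isinstance(value, list):
        return [resolve(v, env) for v in value]
    if isinstance(value, str):
        def substitute(match):
            name = match.group(1)
            if name not in env:
                raise KeyError(f"environment variable not set: {name}")
            return env[name]
        return _PLACEHOLDER.sub(substitute, value)
    return value
```

Failing on a missing variable is a deliberate choice here: it surfaces a misconfigured deployment at startup rather than at the first guardrail call.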

Using Guardrails in Requests

Attaching Guardrails to API Calls

Once configured, attach guardrails to your LLM requests using custom headers or a guardrail configuration in the request body:

Single Guardrail:

    curl -X POST http://localhost:8080/v1/chat/completions \
      -H "Content-Type: application/json" \
      -H "x-bf-guardrail-id: bedrock-prod-guardrail" \
      -d '{
        "model": "gpt-4o-mini",
        "messages": [
          {
            "role": "user",
            "content": "Help me with this task"
          }
        ]
      }'

Multiple Guardrails (Sequential):

    curl -X POST http://localhost:8080/v1/chat/completions \
      -H "Content-Type: application/json" \
      -H "x-bf-guardrail-ids: bedrock-prod-guardrail,azure-content-safety-001" \
      -d '{
        "model": "gpt-4o-mini",
        "messages": [
          {
            "role": "user",
            "content": "Help me with this task"
          }
        ]
      }'

Guardrail Configuration in Request:

    curl -X POST http://localhost:8080/v1/chat/completions \
      -H "Content-Type: application/json" \
      -d '{
        "model": "gpt-4o-mini",
        "messages": [
          {
            "role": "user",
            "content": "Help me with this task"
          }
        ],
        "bifrost_config": {
          "guardrails": {
            "input": ["bedrock-prod-guardrail"],
            "output": ["patronus-ai-001"],
            "async": false
          }
        }
      }'
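In client code, the choice between the two headers can be wrapped in a small helper. The header names are taken from the curl examples above; `guardrail_headers` itself is a hypothetical function and not part of any Bifrost SDK.

```python
def guardrail_headers(guardrail_ids):
    """Build request headers for zero, one, or several guardrail IDs."""
    headers = {"Content-Type": "application/json"}
    if len(guardrail_ids) == 1:
        # One guardrail uses the singular header.
        headers["x-bf-guardrail-id"] = guardrail_ids[0]
    elif guardrail_ids:
        # Several guardrails are sent comma-separated, in order.
        headers["x-bf-guardrail-ids"] = ",".join(guardrail_ids)
    return headers
```

The resulting dictionary can be passed to any HTTP client together with the chat completion body shown in the examples.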

Guardrail Response Handling

Successful Validation (200):

    {
      "id": "chatcmpl-abc123",
      "object": "chat.completion",
      "created": 1699564800,
      "model": "gpt-4o-mini",
      "choices": [
        {
          "index": 0,
          "message": {
            "role": "assistant",
            "content": "I'd be happy to help you with your task..."
          },
          "finish_reason": "stop"
        }
      ],
      "extra_fields": {
        "guardrails": {
          "input_validation": {
            "guardrail_id": "bedrock-prod-guardrail",
            "status": "passed",
            "violations": [],
            "processing_time_ms": 245
          },
          "output_validation": {
            "guardrail_id": "patronus-ai-001",
            "status": "passed",
            "violations": [],
            "processing_time_ms": 312
          }
        }
      }
    }

Validation Failure - Blocked (446):

    {
      "error": {
        "message": "Request blocked by guardrails",
        "type": "guardrail_violation",
        "code": 446,
        "details": {
          "guardrail_id": "bedrock-prod-guardrail",
          "validation_stage": "input",
          "violations": [
            {
              "type": "PII",
              "category": "SSN",
              "severity": "HIGH",
              "action": "block",
              "text_excerpt": "My SSN is ***-**-****"
            },
            {
              "type": "prompt_injection",
              "severity": "CRITICAL",
              "action": "block",
              "confidence": 0.95
            }
          ],
          "processing_time_ms": 198
        }
      }
    }

Validation Warning - Logged (246):

    {
      "id": "chatcmpl-def456",
      "object": "chat.completion",
      "created": 1699564800,
      "model": "gpt-4o-mini",
      "choices": [
        {
          "index": 0,
          "message": {
            "role": "assistant",
            "content": "Response with redacted content..."
          },
          "finish_reason": "stop"
        }
      ],
      "bifrost_metadata": {
        "guardrails": {
          "output_validation": {
            "guardrail_id": "azure-content-safety-001",
            "status": "warning",
            "violations": [
              {
                "type": "profanity",
                "severity": "LOW",
                "action": "redact",
                "modifications": 2
              }
            ],
            "processing_time_ms": 187
          }
        }
      }
    }
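A client can branch on the three status codes shown above. The sketch below follows the example payloads in this document, including the fact that guardrail metadata appears under `extra_fields` in one example and `bifrost_metadata` in another; `summarize` is a hypothetical helper, and real responses may carry additional fields.

```python
def summarize(status_code, body):
    """Return (outcome, violation_types) for a guardrailed response."""
    if status_code == 446:
        # Blocked: violations are nested under error.details.
        violations = body["error"]["details"]["violations"]
        return "blocked", [v["type"] for v in violations]

    # Passed or warning: metadata key differs between the examples.
    meta = body.get("extra_fields") or body.get("bifrost_metadata") or {}
    stages = meta.get("guardrails", {})
    types = [v["type"]
             for stage in stages.values()
             for v in stage.get("violations", [])]
    outcome = "warning" if status_code == 246 else "passed"
    return outcome, types
```

A caller might surface `"blocked"` to the user as an error, log `"warning"` outcomes for review, and treat `"passed"` as a normal completion.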