
Let AI models use external functions by defining tool schemas in the OpenAI function-calling format. When a user request calls for it, the model responds with a structured tool call that names the function and supplies its arguments.

    curl --location 'http://localhost:8080/v1/chat/completions' \
    --header 'Content-Type: application/json' \
    --data '{
        "model": "openai/gpt-4o-mini",
        "messages": [
            {"role": "user", "content": "What is 15 + 27? Use the calculator tool."}
        ],
        "tools": [
            {
                "type": "function",
                "function": {
                    "name": "calculator",
                    "description": "A calculator tool for basic arithmetic operations",
                    "parameters": {
                        "type": "object",
                        "properties": {
                            "operation": {
                                "type": "string",
                                "description": "The operation to perform",
                                "enum": ["add", "subtract", "multiply", "divide"]
                            },
                            "a": {
                                "type": "number",
                                "description": "The first number"
                            },
                            "b": {
                                "type": "number",
                                "description": "The second number"
                            }
                        },
                        "required": ["operation", "a", "b"]
                    }
                }
            }
        ],
        "tool_choice": "auto"
    }'

The model responds with a tool call:

    {
        "choices": [{
            "message": {
                "role": "assistant",
                "tool_calls": [{
                    "id": "call_abc123",
                    "type": "function",
                    "function": {
                        "name": "calculator",
                        "arguments": "{\"operation\":\"add\",\"a\":15,\"b\":27}"
                    }
                }]
            }
        }]
    }
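The response only requests a function call; the application still has to run the function and return the result to the model. The Python sketch below shows one way to do that with the example response above: it decodes the `arguments` string, runs a local calculator, and builds the follow-up `messages` list. The helper names (`calculator`, `run_tool_call`, `build_follow_up`) and the `TOOLS` registry are illustrative, and the `tool` role message with `tool_call_id` is assumed from the OpenAI chat format rather than taken from this page.

```python
import json


def calculator(operation: str, a: float, b: float) -> float:
    """Local implementation of the calculator tool declared in the request."""
    if operation == "add":
        return a + b
    if operation == "subtract":
        return a - b
    if operation == "multiply":
        return a * b
    if operation == "divide":
        if b == 0:
            raise ValueError("division by zero")
        return a / b
    raise ValueError(f"unsupported operation: {operation}")


# Hypothetical registry mapping tool names to local Python callables.
TOOLS = {"calculator": calculator}


def run_tool_call(tool_call: dict) -> dict:
    """Execute one tool call and wrap the result in a `tool` role message."""
    function = tool_call["function"]
    # `arguments` arrives as a JSON-encoded string, not as a JSON object.
    args = json.loads(function["arguments"])
    result = TOOLS[function["name"]](**args)
    return {
        "role": "tool",  # assumed: OpenAI-style tool result message
        "tool_call_id": tool_call["id"],
        "content": json.dumps({"result": result}),
    }


def build_follow_up(messages: list, assistant_message: dict) -> list:
    """Append the assistant's tool-call message plus one result per tool call."""
    follow_up = list(messages) + [assistant_message]
    for tool_call in assistant_message.get("tool_calls", []):
        follow_up.append(run_tool_call(tool_call))
    return follow_up


# The assistant message from the example response above.
assistant_message = {
    "role": "assistant",
    "tool_calls": [{
        "id": "call_abc123",
        "type": "function",
        "function": {
            "name": "calculator",
            "arguments": "{\"operation\":\"add\",\"a\":15,\"b\":27}",
        },
    }],
}
messages = [
    {"role": "user", "content": "What is 15 + 27? Use the calculator tool."}
]

follow_up = build_follow_up(messages, assistant_message)
print(follow_up[-1]["content"])  # {"result": 42}
```

Sending `follow_up` as the `messages` field of a second request to the same endpoint gives the model the tool result so it can produce a final answer.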

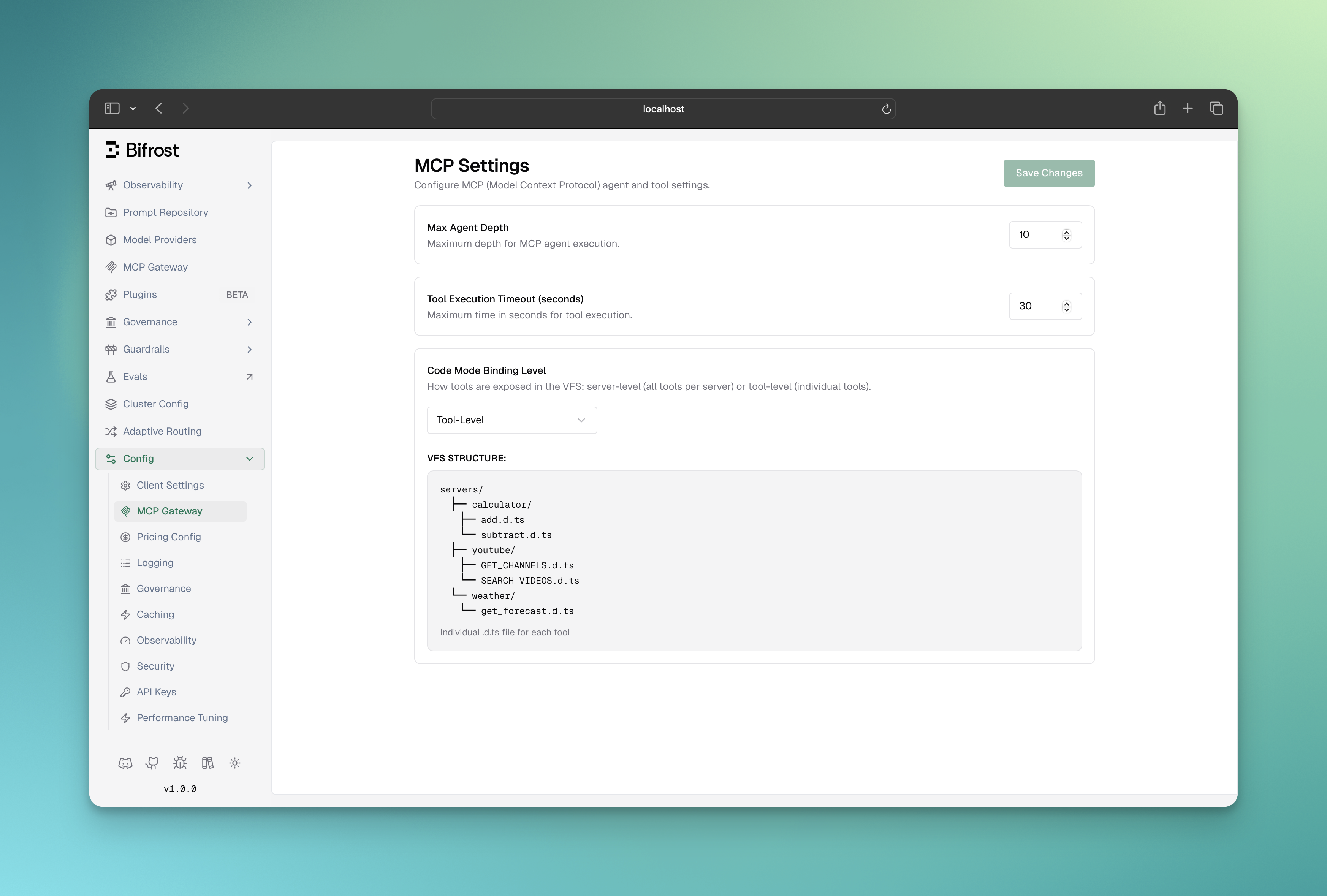

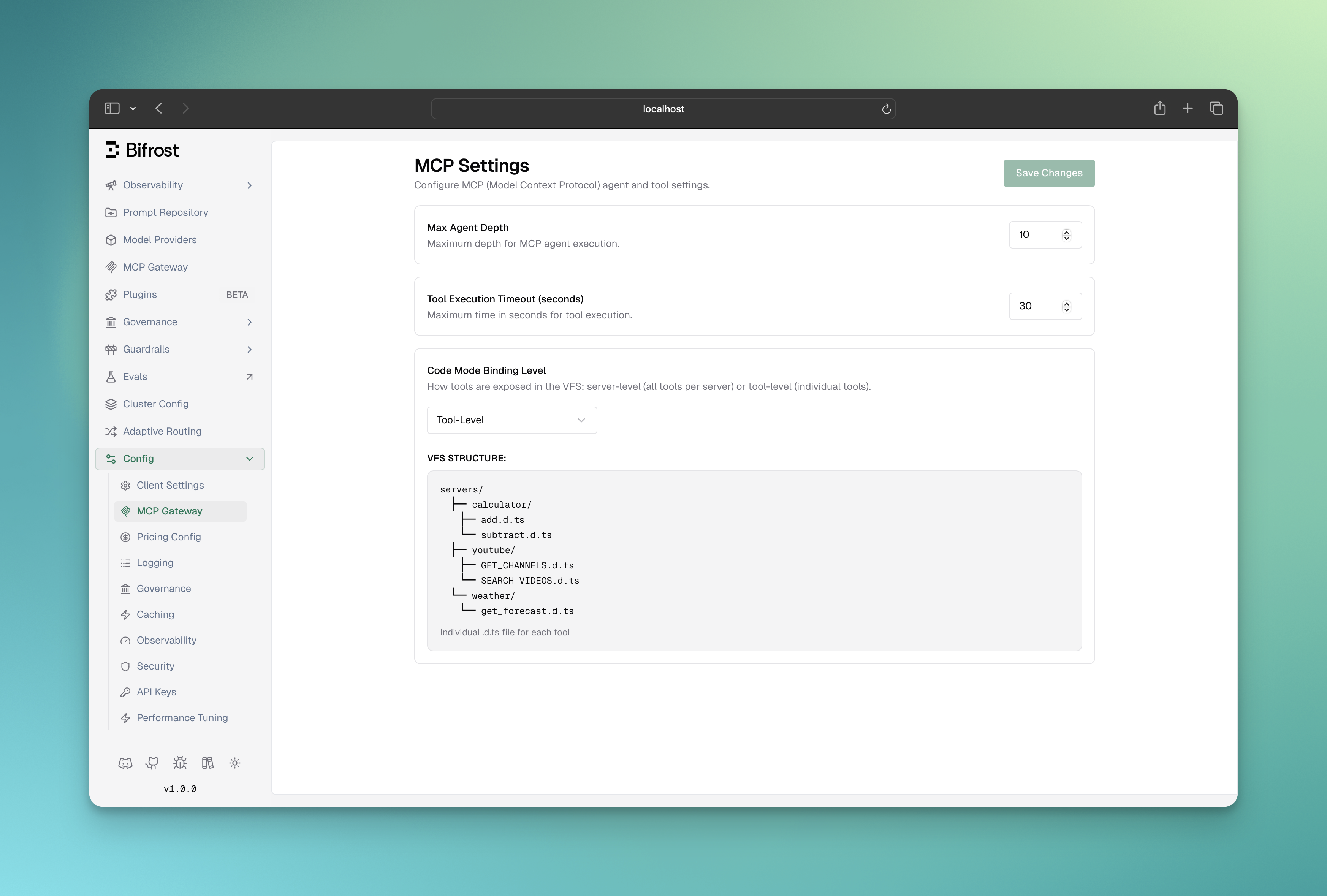

Connecting to MCP Servers

Connect to Model Context Protocol (MCP) servers to give AI models access to external tools and services without manually defining each function.

Using Web UI

- Go to http://localhost:8080
- Navigate to “MCP Clients” in the sidebar
- Click “Add MCP Client”
- Enter server details and save

Using API

Add an MCP client:

    curl --location 'http://localhost:8080/api/mcp/client' \
    --header 'Content-Type: application/json' \
    --data '{
        "name": "filesystem",
        "connection_type": "stdio",
        "stdio_config": {
            "command": ["npx", "@modelcontextprotocol/server-filesystem", "/tmp"],
            "args": []
        }
    }'

List the configured MCP clients:

    curl --location 'http://localhost:8080/api/mcp/clients'

Using config.json

    {
        "mcp": {
            "client_configs": [
                {
                    "name": "filesystem",
                    "connection_type": "stdio",
                    "stdio_config": {
                        "command": ["npx", "@modelcontextprotocol/server-filesystem", "/tmp"],
                        "args": []
                    }
                },
                {
                    "name": "youtube-search",
                    "connection_type": "http",
                    "connection_string": "http://your-youtube-mcp-url"
                }
            ]
        }
    }
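The API payload and the config.json entries above share the same client shape, so both can be generated from one definition. The Python sketch below is a minimal illustration under that assumption: the helper names (`stdio_client`, `http_client`, `config_fragment`, `register_client`) are hypothetical, the field names are copied from the examples above, and `register_client` needs a running gateway at the address used on this page.

```python
import json
import urllib.request

BIFROST_URL = "http://localhost:8080"  # gateway address used on this page


def stdio_client(name: str, command: list) -> dict:
    """Build a stdio client definition with the fields shown above."""
    return {
        "name": name,
        "connection_type": "stdio",
        "stdio_config": {"command": command, "args": []},
    }


def http_client(name: str, connection_string: str) -> dict:
    """Build an HTTP client definition with the fields shown above."""
    return {
        "name": name,
        "connection_type": "http",
        "connection_string": connection_string,
    }


def config_fragment(clients: list) -> dict:
    """Wrap client definitions in the `mcp.client_configs` structure."""
    return {"mcp": {"client_configs": clients}}


def register_client(client: dict) -> int:
    """POST one client definition to the gateway and return the HTTP status.

    Not called below: it requires a running Bifrost instance.
    """
    request = urllib.request.Request(
        f"{BIFROST_URL}/api/mcp/client",
        data=json.dumps(client).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return response.status


clients = [
    stdio_client(
        "filesystem",
        ["npx", "@modelcontextprotocol/server-filesystem", "/tmp"],
    ),
    http_client("youtube-search", "http://your-youtube-mcp-url"),
]

# Print the fragment in the same shape as the config.json example.
print(json.dumps(config_fragment(clients), indent=2))
```

Keeping the definitions in one place avoids drift between what is registered through the API and what is written to config.json.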

The tool_choice field of the request controls whether and how the model uses tools:

    # Force use of a specific tool
    "tool_choice": {
        "type": "function",
        "function": {"name": "calculator"}
    }

    # Let the model decide automatically (default)
    "tool_choice": "auto"

    # Disable tool usage
    "tool_choice": "none"
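When requests are built in code, the three `tool_choice` forms above can be produced by one small helper. The Python sketch below is an illustration only: `tool_choice` and `with_tool_choice` are hypothetical names, and the check that a forced function is declared in `tools` is a convenience added here, not something this page states the gateway requires.

```python
from typing import Optional, Union


def tool_choice(force: Optional[str] = None, enabled: bool = True) -> Union[str, dict]:
    """Return "none", a forced-function object, or "auto"."""
    if not enabled:
        return "none"
    if force is not None:
        return {"type": "function", "function": {"name": force}}
    return "auto"


def with_tool_choice(payload: dict, force: Optional[str] = None,
                     enabled: bool = True) -> dict:
    """Return a copy of a request payload with `tool_choice` set."""
    declared = {tool["function"]["name"] for tool in payload.get("tools", [])}
    # Local sanity check (an assumption of this sketch): only force a
    # function that the request actually declares.
    if enabled and force is not None and force not in declared:
        raise ValueError(f"tool {force!r} is not declared in 'tools'")
    return {**payload, "tool_choice": tool_choice(force, enabled)}


payload = {
    "model": "openai/gpt-4o-mini",
    "messages": [{"role": "user", "content": "What is 15 + 27?"}],
    "tools": [{
        "type": "function",
        "function": {"name": "calculator", "parameters": {"type": "object"}},
    }],
}

forced = with_tool_choice(payload, force="calculator")
disabled = with_tool_choice(payload, enabled=False)
automatic = with_tool_choice(payload)
print(forced["tool_choice"], disabled["tool_choice"], automatic["tool_choice"])
```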
