If your Allowed Headers are already set to `*`, you can skip this note. Otherwise, if you run into issues integrating Bifrost with Zed, try switching to `*` or adding the specific headers your client requires. By default, Bifrost whitelists `Content-Type`, `Authorization`, `X-Requested-With`, `X-Stainless-Timeout`, and `X-Api-Key`.

Setup

1. Configure Bifrost Provider

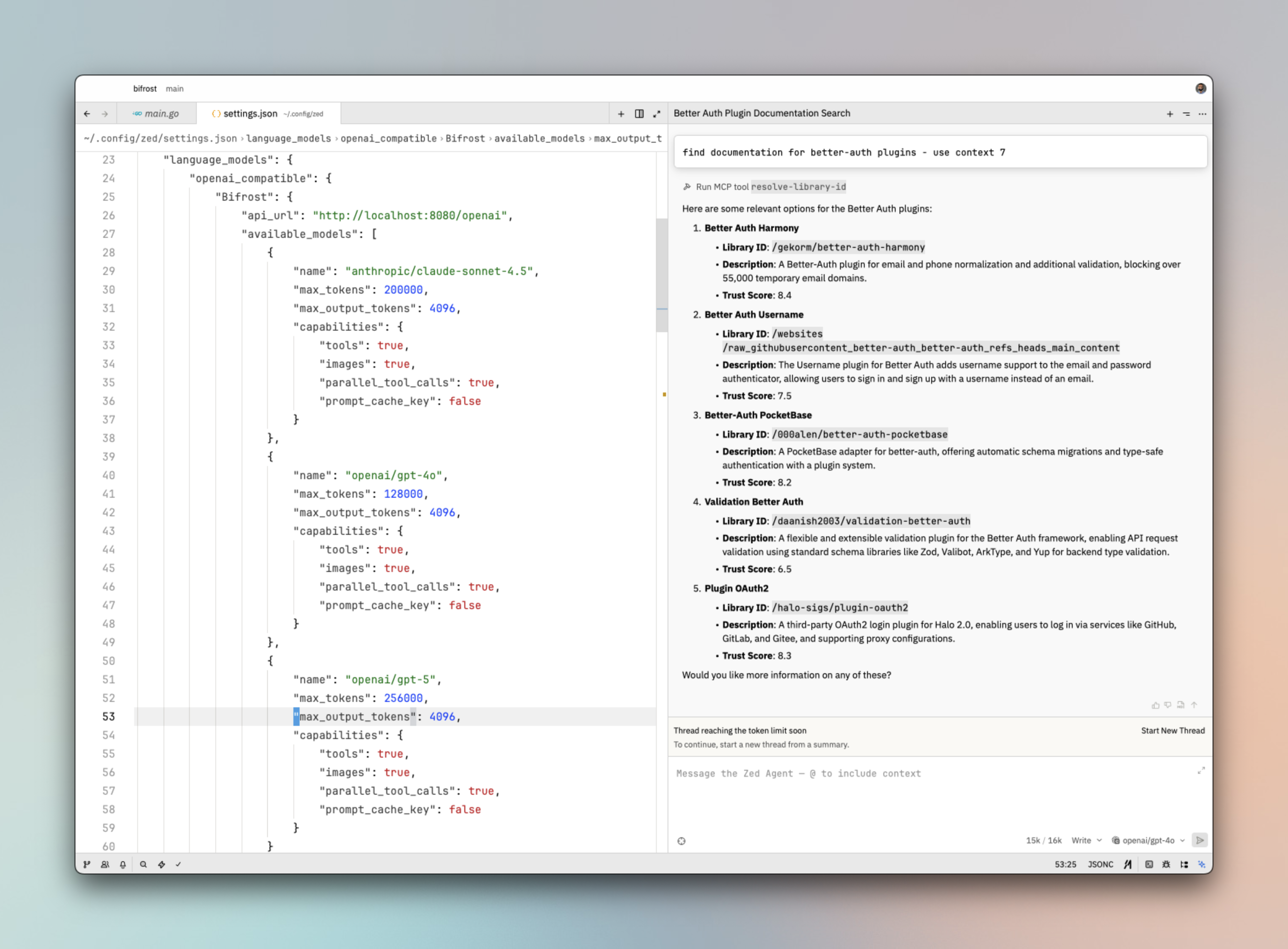

Add Bifrost to Zed's `language_models.openai_compatible` configuration. This typically lives in your Zed settings file (JSON) or in the workspace config.

Replace `http://localhost:8080/openai` with your Bifrost gateway URL followed by `/openai`.
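A minimal sketch of what the provider entry might look like, following the shape Zed uses for OpenAI-compatible providers. The provider label `Bifrost`, the `display_name`, and the `max_tokens` value are illustrative assumptions rather than required values, so verify the field names against your Zed version. Zed's settings file accepts comments, which is why the snippet includes them.

```json
{
  "language_models": {
    "openai_compatible": {
      // "Bifrost" is an arbitrary label chosen for this example
      "Bifrost": {
        // Bifrost gateway URL + /openai
        "api_url": "http://localhost:8080/openai",
        "available_models": [
          {
            "name": "openai/gpt-5",
            "display_name": "GPT-5 (via Bifrost)",
            // Placeholder context size; use the real limit of your model
            "max_tokens": 128000
          }
        ]
      }
    }
  }
}
```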

2. Model Capabilities

| Field | Description |
|---|---|
| `tools` | Enable tool/function calling |
| `images` | Enable image input (vision) |
| `parallel_tool_calls` | Support multiple tool calls in one response |
| `prompt_cache_key` | Enable prompt caching (set to `false` if not supported) |

Model names use the `provider/model` format (e.g. `openai/gpt-5`, `anthropic/claude-sonnet-4.5`). Ensure these models are configured in Bifrost.
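As a sketch of how the capability flags might be set for a single model, assuming they live in a `capabilities` object on each `available_models` entry, as in Zed's OpenAI-compatible provider settings. The `display_name`, the `max_tokens` value, and the specific `true`/`false` choices are illustrative assumptions, not recommendations.

```json
{
  "name": "anthropic/claude-sonnet-4.5",
  "display_name": "Claude Sonnet 4.5 (via Bifrost)",
  // Placeholder context size; use the real limit of your model
  "max_tokens": 200000,
  "capabilities": {
    "tools": true,
    "images": true,
    "parallel_tool_calls": false,
    // Set to false if the upstream provider does not support prompt caching
    "prompt_cache_key": false
  }
}
```

This object would go inside the `available_models` array of the Bifrost provider entry.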

3. Reload Workspace

After changing the configuration, reload the workspace so Zed picks up the updated provider list.

Virtual Keys

When Bifrost has virtual key authentication enabled, add an `api_key` field to the Bifrost provider config. Check Zed's documentation for the exact field name, as it may vary by version.

Model Selection

Zed lets you assign models to different AI features. Use Bifrost model IDs in `provider/model` format to access any configured provider:

- Use powerful models like `openai/gpt-5` or `anthropic/claude-sonnet-4-5-20250929` for complex code generation and refactoring

- Use fast models like `groq/llama-3.3-70b-versatile` for quick completions and inline suggestions
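The split between powerful and fast models could be expressed roughly as follows. This is a sketch that assumes Zed's `agent` settings with per-feature model keys, and that the custom provider is referenced by the label it was given under `openai_compatible` (here the hypothetical label `Bifrost`). Check both assumptions against your Zed version before relying on them.

```json
{
  "agent": {
    // Stronger model for agent threads: complex generation and refactoring
    "default_model": {
      "provider": "Bifrost",
      "model": "openai/gpt-5"
    },
    // Faster model for inline edits and suggestions
    "inline_assistant_model": {
      "provider": "Bifrost",
      "model": "groq/llama-3.3-70b-versatile"
    }
  }
}
```

Each model named here also needs a matching entry in the provider's `available_models` list.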

Using Multiple Providers

Bifrost routes requests to the correct provider based on the model name. Use the `provider/model-name` format to access any configured provider through the single OpenAI-compatible endpoint.
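For illustration, a single provider entry might list models from several upstream providers side by side. The provider label `Bifrost`, the display names, and the `max_tokens` values below are assumptions made for this sketch; only the `provider/model-name` identifiers and the endpoint come from this guide.

```json
{
  "language_models": {
    "openai_compatible": {
      "Bifrost": {
        // One endpoint for every upstream provider
        "api_url": "http://localhost:8080/openai",
        "available_models": [
          // max_tokens values are placeholders; use each model's real limit
          { "name": "openai/gpt-5", "display_name": "GPT-5", "max_tokens": 128000 },
          { "name": "anthropic/claude-sonnet-4-5-20250929", "display_name": "Claude Sonnet 4.5", "max_tokens": 200000 },
          { "name": "groq/llama-3.3-70b-versatile", "display_name": "Llama 3.3 70B (Groq)", "max_tokens": 128000 }
        ]
      }
    }
  }
}
```

Because routing is driven by the prefix of each `name`, adding another provider is a matter of adding another entry to `available_models`, provided that provider is configured in Bifrost.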

Supported Providers

Bifrost supports the following providers with the `provider/model-name` format:

openai, azure, gemini, vertex, bedrock, mistral, groq, cerebras, cohere, perplexity, xai, ollama, openrouter, huggingface, nebius, parasail, replicate, vllm, sgl

Zed connects to Bifrost via a single OpenAI-compatible endpoint. Bifrost handles routing to the correct provider based on the model name — no per-provider configuration needed.

Observability

All Zed requests through Bifrost are logged. Monitor them at `http://localhost:8080/logs`, where you can filter by provider or model, or search through conversation content to track usage.

Next Steps

- Provider Configuration — Configure AI providers in Bifrost

- Virtual Keys — Set up usage limits and access control