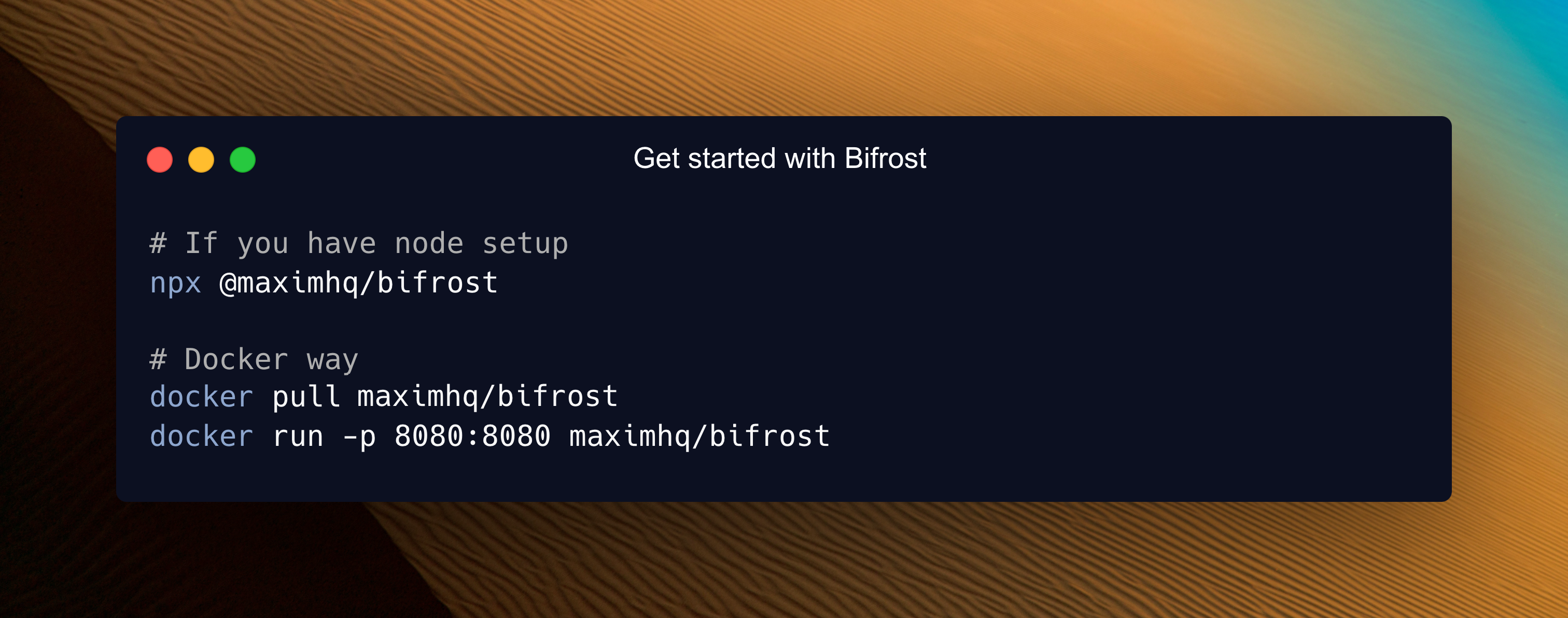

30-Second Setup

Get Bifrost running as a blazing-fast HTTP API gateway with zero configuration. Connect to any AI provider (OpenAI, Anthropic, Bedrock, and more) through a unified API that follows OpenAI request/response format.

1. Choose Your Setup Method

Both options work perfectly - choose what fits your workflow:

NPX Binary

Docker

2. Configuration Flags

| Flag | Default | NPX | Docker | Description |
|---|---|---|---|---|
| port | 8080 | -port 8080 | -e APP_PORT=8080 -p 8080:8080 | HTTP server port |
| host | localhost | -host 0.0.0.0 | -e APP_HOST=0.0.0.0 | Host to bind server to |
| log-level | info | -log-level info | -e LOG_LEVEL=info | Log level (debug, info, warn, error) |
| log-style | json | -log-style json | -e LOG_STYLE=json | Log style (pretty, json) |
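The NPX and Docker columns can be read as one mapping from a setting to its command-line form. The following Python sketch renders only the flag fragments listed in the table, not a complete launch command. The helper names `npx_args` and `docker_args` are hypothetical and are not part of Bifrost.

```python
# Setting name -> (NPX flag, Docker environment variable), copied from the table.
FLAG_MAP = {
    "port": ("-port", "APP_PORT"),
    "host": ("-host", "APP_HOST"),
    "log-level": ("-log-level", "LOG_LEVEL"),
    "log-style": ("-log-style", "LOG_STYLE"),
}


def npx_args(settings):
    """Render settings as NPX-style flag arguments (hypothetical helper)."""
    args = []
    for name, value in settings.items():
        flag, _env = FLAG_MAP[name]
        args += [flag, str(value)]
    return args


def docker_args(settings):
    """Render settings as Docker -e/-p options (hypothetical helper)."""
    args = []
    for name, value in settings.items():
        _flag, env = FLAG_MAP[name]
        args += ["-e", f"{env}={value}"]
        if name == "port":
            # The table pairs APP_PORT with a matching published port.
            args += ["-p", f"{value}:{value}"]
    return args
```

For example, `docker_args({"port": 9000})` yields the `-e APP_PORT=9000 -p 9000:9000` fragment in list form.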

The -app-dir flag determines where Bifrost stores all its data:

- config.json - Configuration file (optional)

- config.db - SQLite database for UI configuration

- logs.db - Request logs database

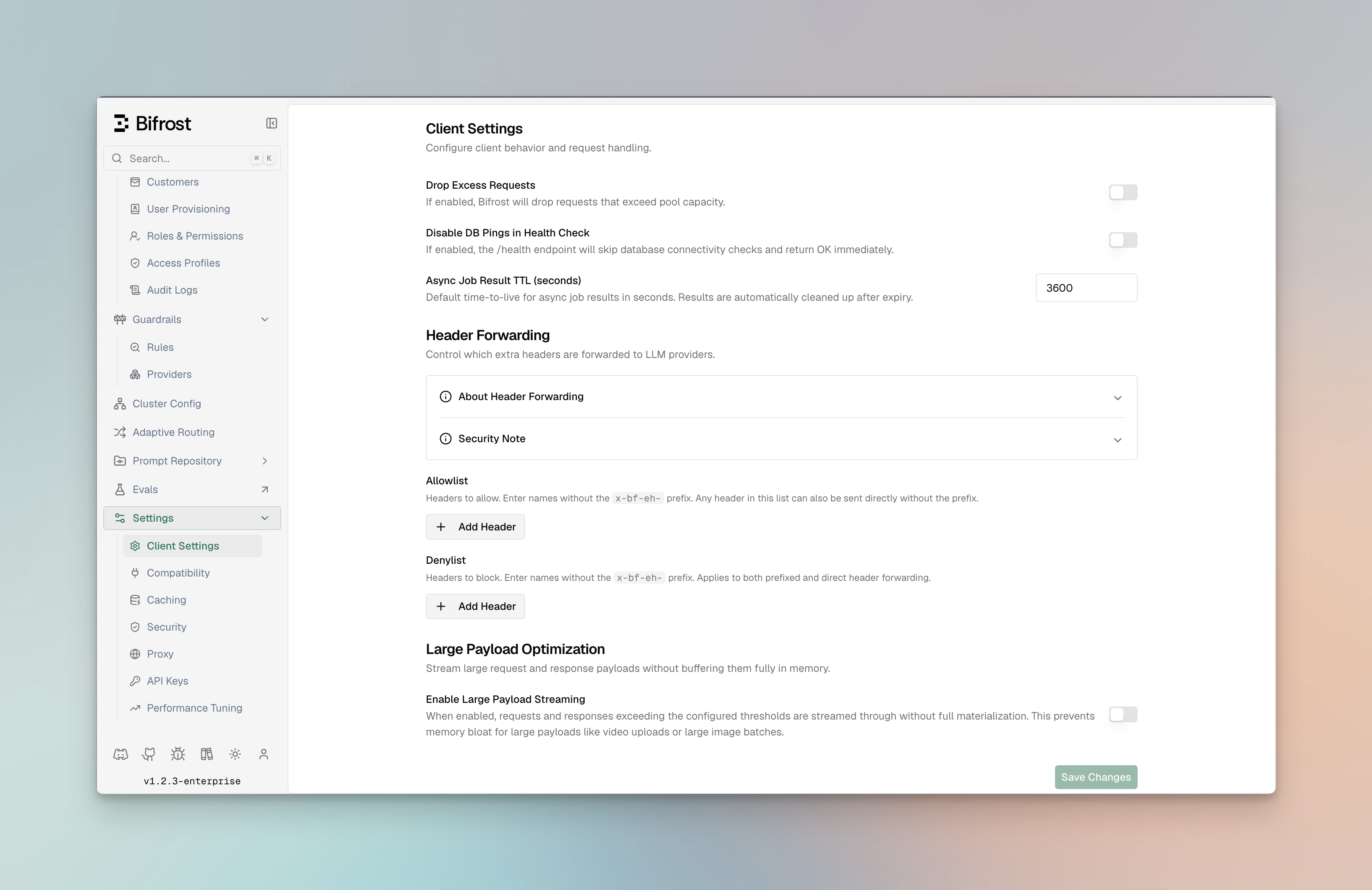

3. Open the Web Interface

Navigate to http://localhost:8080 in your browser:

- Visual provider setup - Add API keys with clicks, not code

- Real-time configuration - Changes apply immediately

- Live monitoring - Request logs, metrics, and analytics

- Governance management - Virtual keys, usage budgets, and more

4. Test Your First API Call
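One way to make the first call from Python is sketched below, using only the standard library. It assumes the gateway is listening on the default http://localhost:8080, that an OpenAI key has already been added through the Web UI, and that the response has the OpenAI chat completion shape. The helper names are hypothetical.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8080"  # default host and port from the flags table


def build_chat_request(model, prompt, base_url=BASE_URL):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat(model, prompt, base_url=BASE_URL, timeout=30):
    """Send the request and return the first reply's text.

    Assumes the response follows the OpenAI chat completion shape
    (choices[0].message.content).
    """
    request = build_chat_request(model, prompt, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With the gateway running, `print(send_chat("openai/gpt-4o-mini", "Hello, Bifrost!"))` should print the model's reply.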

What Just Happened?

- Zero Configuration Start: Bifrost launched without any config files - everything can be configured through the Web UI or API

- OpenAI-Compatible API: All Bifrost APIs follow OpenAI request/response format for seamless integration

- Unified API Endpoint: /v1/chat/completions works with any provider (OpenAI, Anthropic, Bedrock, etc.)

- Provider Resolution: openai/gpt-4o-mini tells Bifrost to use OpenAI's GPT-4o Mini model. You can also use bare model names like gpt-4o-mini; Bifrost will automatically resolve the provider via the Model Catalog

- Automatic Routing: Bifrost handles authentication, rate limiting, and request routing automatically
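To make the provider/model naming convention concrete, here is a small client-side sketch. It is an illustration only, not Bifrost's resolver: `split_model` is a hypothetical helper, and for bare names it returns `None` as the provider to signal that resolution is left to the gateway's Model Catalog.

```python
def split_model(model):
    """Split 'provider/model' into (provider, model).

    The provider is None for bare model names. Only the first slash is
    treated as the separator, so model names that contain slashes stay intact.
    """
    provider, sep, name = model.partition("/")
    if not sep:
        return None, model
    return provider, name
```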

Two Configuration Modes

Bifrost supports two configuration approaches - you cannot use both simultaneously:

Mode 1: Web UI Configuration

The UI is available when either of the following is true:

- No config.json file exists (Bifrost auto-creates SQLite database)

- config.json exists with config_store configured

Mode 2: File-based Configuration

You can view the entire config schema here.

Create config.json in your app directory.

Without config_store in config.json:

- UI is disabled - no real-time configuration possible

- Read-only mode - config.json is never modified

- Memory-only - all configurations loaded into memory at startup

- Restart required - changes to config.json only apply after restart

With config_store in config.json:

- UI is enabled - full real-time configuration via web interface

- Database check - Bifrost checks if config store database exists and has data

  - Empty DB: Bootstraps database with config.json settings, then uses DB exclusively

  - Existing DB: Uses database directly, ignores config.json configurations

- Persistent storage - all changes saved to database immediately

Modifying config.json after the initial bootstrap has no effect when config_store is enabled. Use the public HTTP APIs to make configuration changes instead.
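The startup rules for the two modes can be summarized as a small decision function. This is a sketch of the behavior described in this section, not Bifrost's code. The function name, its arguments, and the returned labels are hypothetical, and it assumes that any non-empty config_store entry counts as "configured".

```python
def resolve_config_mode(config, db_has_data):
    """Describe how configuration is loaded at startup.

    config: parsed config.json as a dict, or None if the file does not exist.
    db_has_data: True if the config store database already holds data.
    """
    if config is None:
        # No config.json: Bifrost auto-creates a SQLite database, UI enabled.
        return {"ui_enabled": True, "source": "database"}
    if not config.get("config_store"):
        # File-based mode: config.json is loaded into memory and never modified.
        return {"ui_enabled": False, "source": "config.json (in memory)"}
    if db_has_data:
        # Existing DB wins; config.json configurations are ignored.
        return {"ui_enabled": True, "source": "database"}
    # Empty DB: bootstrap from config.json once, then use the DB exclusively.
    return {"ui_enabled": True, "source": "database (bootstrapped from config.json)"}
```

Reading the branches top to bottom also shows why edits to config.json stop mattering once the database holds data: the third branch never looks at the file's contents.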

The Three Stores Explained:

- Config Store: Stores provider configs, API keys, MCP settings - Required for UI functionality

- Logs Store: Stores request logs shown in UI - Optional, can be disabled

- Vector Store: Used for semantic caching - Optional, can be disabled

PostgreSQL UTF8 Requirement

Bifrost requires PostgreSQL 16 or later.

For the log store, Bifrost creates materialized views to improve analytics performance. Ensure that the PostgreSQL user has the necessary permissions to perform these operations on the target schema.

When PostgreSQL is used for config_store or logs_store, the target database must use UTF8 encoding.

Use template0 when creating the database so PostgreSQL applies UTF8 and locale settings explicitly:
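As an illustration, the Python sketch below builds such a statement. The `CREATE DATABASE` options used (`TEMPLATE`, `ENCODING`, `LC_COLLATE`, `LC_CTYPE`) are standard PostgreSQL. The helper name, the example database name, and the default locale are assumptions made for this example.

```python
import re


def create_database_sql(name, locale="en_US.UTF-8"):
    """Return a CREATE DATABASE statement that forces UTF8 via template0."""
    # Restrict inputs to simple tokens so they are safe to interpolate.
    if not re.fullmatch(r"[A-Za-z_][A-Za-z0-9_]*", name):
        raise ValueError(f"unsupported database name: {name!r}")
    if not re.fullmatch(r"[A-Za-z0-9_.@-]+", locale):
        raise ValueError(f"unsupported locale: {locale!r}")
    return (
        f'CREATE DATABASE "{name}" '
        f"WITH TEMPLATE template0 ENCODING 'UTF8' "
        f"LC_COLLATE '{locale}' LC_CTYPE '{locale}';"
    )
```

Calling `create_database_sql("bifrost")` returns a statement you can run with psql or any PostgreSQL client as a user allowed to create databases.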

Choose a UTF-8 locale that is installed on your system (en_US.UTF-8, C.UTF-8, or another installed UTF-8 locale).

Verify the database encoding:
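A minimal sketch of such a check follows. The query relies on the standard PostgreSQL catalog table `pg_database` and the function `pg_encoding_to_char`. The constant and helper names are hypothetical, and running the query is left to whichever client or driver you already use.

```python
# Query that reports the encoding of the database you are connected to.
ENCODING_CHECK_SQL = (
    "SELECT pg_encoding_to_char(encoding) "
    "FROM pg_database WHERE datname = current_database();"
)


def is_utf8(encoding_name):
    """Return True if the value reported by PostgreSQL is UTF8."""
    return encoding_name.strip().upper() == "UTF8"
```

Run `ENCODING_CHECK_SQL` while connected to the target database and pass the single returned value to `is_utf8`.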

Next Steps

Now that you have Bifrost running, explore these focused guides:

Essential Topics

- Provider Configuration - Multiple providers, automatic failovers & load balancing

- Integrations - Drop-in replacements for OpenAI, Anthropic, and GenAI SDKs

- Multimodal Support - Support for text, images, audio, and streaming, all behind a common interface.

Advanced Topics

- Tracing - Logging requests for monitoring and debugging

- MCP Tools - Enable AI models to use external tools (filesystem, web search, databases)

- Governance - Usage tracking, rate limiting, and cost control

- Deployment - Production setup and scaling

Happy building with Bifrost! 🚀