Overview

Bifrost provides two complementary layers of resilience:

- Retries - When a provider returns a transient server error (network issue, 5xx) or a per-key failure (429 rate-limit, 401/403 auth, 402 billing), Bifrost automatically retries the same request against the same provider. Transient-server retries reuse the same key with exponential backoff; per-key failures rotate to a different API key from your pool. Backoff is skipped only when rotating away from a permanent per-key failure (401/402/403), where waiting offers nothing; for 429 rotations a backoff is still applied to let account-level quota windows slide.
- Fallbacks - When the primary provider fails after exhausting all retries, Bifrost moves on to the next provider in your fallback chain. Each fallback provider gets its own full retry budget.

Retries

How retries work

When a request fails with a retryable error, Bifrost:

- Classifies the failure as either a per-key failure (the credential or account is the problem: status 401/402/403/429) or a transient server failure (the upstream is the problem: 5xx, network, DNS).
- On per-key failures, rotates to a different API key from the pool (if multiple keys are configured). Two sub-cases:
  - Permanent per-key failure (401/402/403): marks the key dead for the remainder of the request and rotates immediately, with no backoff, since waiting can’t revive a bad credential.
  - Transient per-key failure (429 rate-limit): marks the key as used-this-cycle and rotates, but still applies backoff. Providers often enforce account-level quotas shared across keys, so the new key may not have fresh capacity until the window slides.
- On transient server failures (5xx, DNS, connection refused), reuses the same key and waits using exponential backoff with jitter before the next attempt.
- Continues until the request succeeds, max_retries is exhausted, or every key is permanently dead. In that last case Bifrost returns 502 upstream_credentials_exhausted rather than the raw 4xx, to make it clear that the caller’s Bifrost API key is fine and the configured provider credentials are not.
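The classification step above can be sketched as a small lookup. This is an illustrative model only, not Bifrost's implementation: the names FailureKind, classify, and RETRY_PLAN are hypothetical, a status of None stands in for a network-level error, and the rate-limit message-pattern detection is left out.

```python
from enum import Enum


class FailureKind(Enum):
    PERMANENT_KEY = "permanent_key"        # 401 / 402 / 403
    RATE_LIMITED_KEY = "rate_limited_key"  # 429
    TRANSIENT_SERVER = "transient_server"  # 5xx, network, DNS
    NOT_RETRYABLE = "not_retryable"        # other 4xx such as 400 / 404 / 422


def classify(status):
    """Map an HTTP status (None = network-level error) to a failure kind."""
    if status is None:
        return FailureKind.TRANSIENT_SERVER
    if status in (401, 402, 403):
        return FailureKind.PERMANENT_KEY
    if status == 429:
        return FailureKind.RATE_LIMITED_KEY
    if 500 <= status <= 599:
        return FailureKind.TRANSIENT_SERVER
    return FailureKind.NOT_RETRYABLE


# (retried?, rotate key?, back off before the next attempt?)
RETRY_PLAN = {
    FailureKind.PERMANENT_KEY: (True, True, False),
    FailureKind.RATE_LIMITED_KEY: (True, True, True),
    FailureKind.TRANSIENT_SERVER: (True, False, True),
    FailureKind.NOT_RETRYABLE: (False, False, False),
}

retried, rotate, backoff = RETRY_PLAN[classify(429)]
```

Keeping the decision in one table makes the asymmetry easy to see: only the permanent per-key case skips backoff, and only the transient server case keeps the same key.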

Backoff formula

Backoff applies to same-key retries (transient server 5xx / network errors) and to 429 rate-limit rotations (since account-level quotas can be shared across keys). It is skipped only when rotating away from a permanent per-key failure (401/402/403) to a genuinely different credential, because a dead key gains nothing from waiting.

Each retry doubles the previous base delay, starting at retry_backoff_initial and capped at retry_backoff_max, with jitter applied on top. With retry_backoff_initial = 500ms and retry_backoff_max = 5000ms:

| Attempt | Base backoff | With jitter (approx.) |
|---|---|---|
| 1st retry | 500 ms | 400–600 ms |
| 2nd retry | 1000 ms | 800 ms–1.2 s |
| 3rd retry | 2000 ms | 1.6–2.4 s |
| 4th retry | 4000 ms | 3.2–4.8 s |
| 5th+ retry | 5000 ms (capped) | 4–5 s |
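The table can be reproduced with a short calculation. The sketch below is inferred from the table rather than taken from Bifrost's source: it assumes the base delay doubles per retry, that jitter is roughly ±20%, and that the jittered value never exceeds retry_backoff_max. The function names and the spread_pct parameter are hypothetical.

```python
import random


def base_backoff_ms(retry, initial_ms=500, max_ms=5000):
    """Base delay for the n-th retry (1-based): doubles each time, capped."""
    return min(initial_ms * 2 ** (retry - 1), max_ms)


def jitter_bounds_ms(retry, initial_ms=500, max_ms=5000, spread_pct=20):
    """Lower and upper bound of the jittered delay (assumed +/- spread_pct)."""
    base = base_backoff_ms(retry, initial_ms, max_ms)
    low = base * (100 - spread_pct) // 100
    high = min(base * (100 + spread_pct) // 100, max_ms)
    return low, high


def backoff_ms(retry, initial_ms=500, max_ms=5000, spread_pct=20):
    """Pick a random delay inside the jitter bounds for this retry."""
    low, high = jitter_bounds_ms(retry, initial_ms, max_ms, spread_pct)
    return random.uniform(low, high)


for retry in range(1, 6):
    print(retry, base_backoff_ms(retry), jitter_bounds_ms(retry))
# 1 500 (400, 600)
# 2 1000 (800, 1200)
# 3 2000 (1600, 2400)
# 4 4000 (3200, 4800)
# 5 5000 (4000, 5000)
```

Jitter spreads retries from many clients over time so they do not all hit the provider at the same instant after a shared failure.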

What triggers a retry

| Condition | Retried? | Key rotation? | Backoff before next attempt? |
|---|---|---|---|
| Network error (DNS, connection refused) | Yes | No - same key reused | Yes |
| 5xx server errors (500, 502, 503, 504) | Yes | No - same key reused | Yes |
| Rate limit (429 or rate-limit message pattern) | Yes | Yes - rate-limited key may be retried later in the cycle | Yes - account-level quotas may be shared across keys |
| Auth failure (401, 403) | Yes | Yes - failing key marked permanently dead for this request | No - waiting can’t revive a bad credential |
| Billing failure (402) | Yes | Yes - failing key marked permanently dead for this request | No - waiting can’t revive a bad credential |
| Request validation error (400/404/422/…) | No | - | - |
| Plugin-enforced block | No | - | - |
| Cancelled request | No | - | - |

Configuring retries

Retries are configured per-provider in network_config. The defaults are max_retries: 0 (no retries), retry_backoff_initial: 500 ms, and retry_backoff_max: 5000 ms.

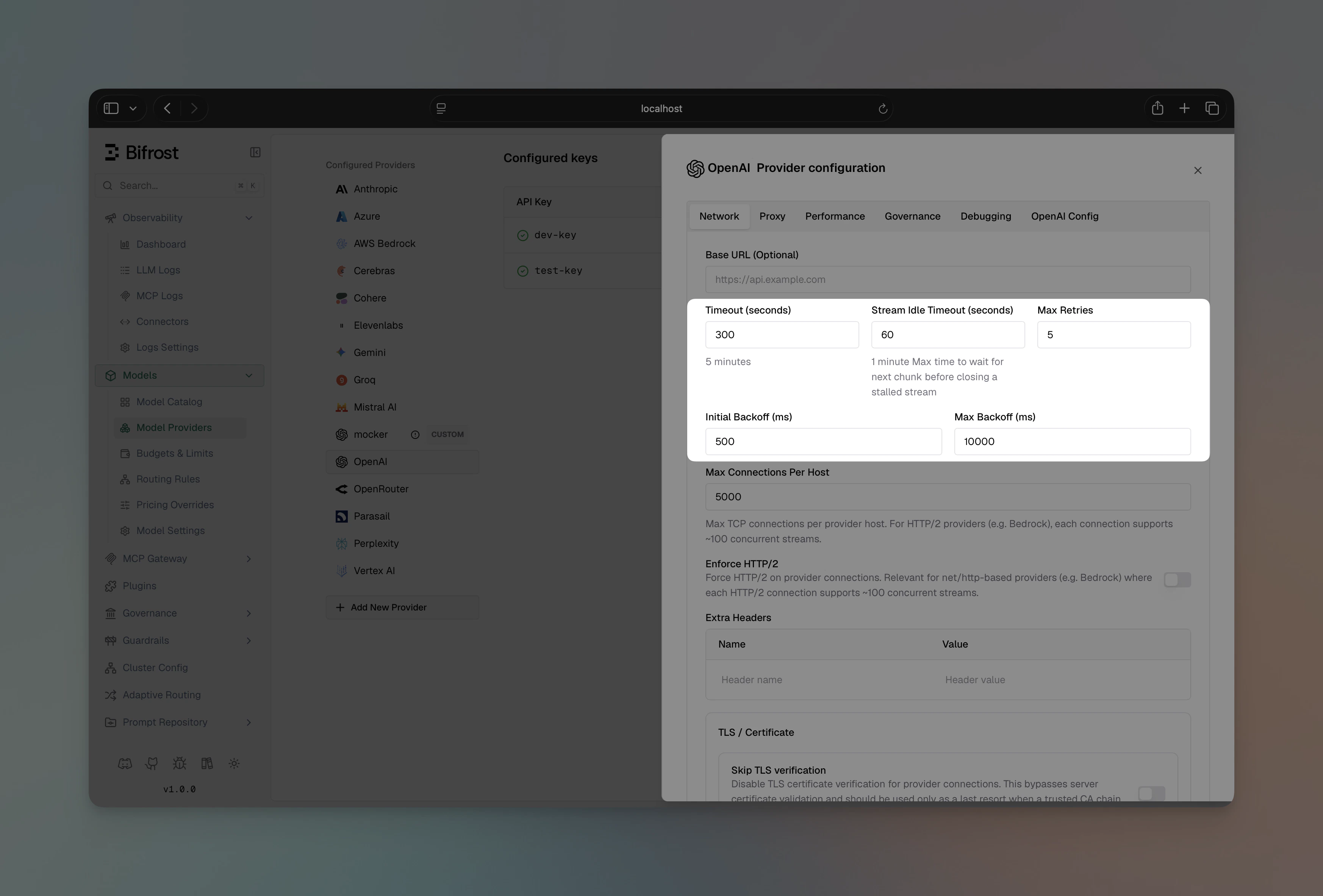

The same settings are available in the Web UI, the API, the Go SDK, and config.json:

- Max Retries - number of additional attempts after the first failure (e.g. 3)
- Retry Backoff Initial - starting backoff in milliseconds (e.g. 500)
- Retry Backoff Max - maximum backoff cap in milliseconds (e.g. 5000)

Key rotation on per-key failures

Key rotation on retries requires v1.5.0-prerelease4 or later. Rotation on auth (401/403) and billing (402) errors (in addition to rate limits) requires the retry-logic-enhancements release.

Bifrost rotates keys on the following per-key failures:

- 429 Too Many Requests: this key is rate-limited; another may have spare quota.
- 401 Unauthorized / 403 Forbidden: bad or revoked key, or the key lacks permission.
- 402 Payment Required: billing issue on this key’s account.

A rate-limited key (429) is added to a used set for the current cycle. Once all keys in the pool have been tried, Bifrost resets that set and starts a fresh weighted round, since a previously rate-limited key may have free quota by then. With 3 keys and max_retries: 5, Bifrost can cycle through all three keys twice before giving up.

Auth and billing failures (401/402/403) are different: the failing key is marked permanently dead for the remainder of the request and is never reset. A bad credential won’t become valid by waiting. If every configured key ends up permanently dead, Bifrost returns 502 upstream_credentials_exhausted and skips any remaining retries.
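The difference between the resettable used set and the permanent dead set can be simulated in a few lines. This is a simplified model under stated assumptions, not Bifrost's code: KeyPool and send_with_rotation are hypothetical names, keys are picked in list order instead of by weight, and backoff is only marked by comments rather than actually slept.

```python
class KeyPool:
    def __init__(self, keys):
        self.keys = list(keys)
        self.used = set()  # tried in the current cycle; cleared when the cycle ends
        self.dead = set()  # saw 401/402/403; never cleared within this request

    def pick(self):
        """Return the next key to try, or None if every key is dead."""
        alive = [k for k in self.keys if k not in self.dead]
        if not alive:
            return None
        fresh = [k for k in alive if k not in self.used]
        if not fresh:  # every live key was tried: start a new cycle
            self.used.clear()
            fresh = alive
        self.used.add(fresh[0])
        return fresh[0]


def send_with_rotation(send, keys, max_retries):
    """send(key) returns an HTTP status. Returns (final_status, keys_tried)."""
    pool = KeyPool(keys)
    tried = []
    key = pool.pick()
    status = None
    for _ in range(1 + max_retries):
        status = send(key)
        tried.append(key)
        if status < 400:
            return status, tried
        if status in (401, 402, 403):
            pool.dead.add(key)  # permanent: rotate immediately, no backoff
            key = pool.pick()
            if key is None:
                return 502, tried  # upstream_credentials_exhausted
        elif status == 429:
            key = pool.pick()  # rotate; backoff would be applied here
        elif status < 500:
            return status, tried  # other 4xx: not retryable
        # 5xx: keep the same key; backoff would be applied here
    return status, tried


# Three rate-limited keys with max_retries=5: two full cycles, then give up.
status, tried = send_with_rotation(lambda key: 429, ["k1", "k2", "k3"], 5)
print(status, tried)  # 429 ['k1', 'k2', 'k3', 'k1', 'k2', 'k3']
```

With every key returning 401 instead, the same function stops after each key has been tried once and reports 502, regardless of how many retries remain.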

Key rotation on retries only applies when max_retries > 0 and more than one key is configured for the provider. With a single key, all retries reuse that key (and a permanent per-key failure terminates immediately with 502).

Fallbacks

Fallbacks provide automatic failover to a different provider when the primary fails after exhausting all its retries. Each fallback is tried in order until one succeeds.

How fallbacks work

- Primary attempt: Tries your configured provider with its full retry budget

- Fallback decision: If the primary fails (and the error is retryable at the provider level), Bifrost moves to the first fallback

- Sequential fallbacks: Each fallback provider also gets its own full retry budget

- First success wins: Returns the response from the first provider that succeeds

- All fail: Returns the original error from the primary provider. Exception: if a plugin on a fallback provider sets AllowFallbacks = false on the error (e.g. a security or compliance plugin that should halt the chain regardless of remaining fallbacks), Bifrost stops immediately and returns that fallback’s error rather than continuing to the next provider or returning the primary error.
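The chain behavior described in this list can be modeled as a loop over providers. This is a minimal sketch, not Bifrost's implementation: ProviderError, run_chain, and the provider/model strings are hypothetical, the allow_fallbacks attribute stands in for the AllowFallbacks flag, and the check for whether an error is retryable at the provider level is omitted.

```python
class ProviderError(Exception):
    """Raised by a provider attempt after its own retries are exhausted."""

    def __init__(self, provider, message, allow_fallbacks=True):
        super().__init__(message)
        self.provider = provider
        self.allow_fallbacks = allow_fallbacks


def run_chain(providers, call):
    """Try the primary, then each fallback in order; first success wins.

    call(provider) returns a response or raises ProviderError.
    """
    if not providers:
        raise ValueError("at least one provider is required")
    primary_error = None
    for index, provider in enumerate(providers):
        try:
            return call(provider)
        except ProviderError as err:
            if index == 0:
                primary_error = err
            if not err.allow_fallbacks:
                raise  # halt the chain and surface this provider's error
    raise primary_error  # all failed: report the primary's original error


def demo_call(provider):
    # Hypothetical stand-in: only the second provider is healthy.
    if provider == "anthropic/model-b":
        return {"extra_fields": {"provider": "anthropic"}}
    raise ProviderError(provider, "upstream unavailable")


response = run_chain(["openai/model-a", "anthropic/model-b"], demo_call)
print(response["extra_fields"]["provider"])  # anthropic
```

Returning the primary's error when everything fails keeps the reported failure tied to the provider the caller actually asked for.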

Implementation

Fallbacks can be configured through the gateway or the Go SDK. With the gateway, pass a fallbacks array in the request body, where each entry specifies a provider/model string. In the response, extra_fields.provider tells you which provider actually served the request.

How retries and fallbacks work together

The two mechanisms form a nested resilience loop. Retries run inside each provider attempt; fallbacks run across providers once retries are exhausted. Key point: each provider in the chain - primary and every fallback - gets its own full max_retries budget. A primary configured with max_retries: 3 and two fallbacks each also configured with max_retries: 3 means up to 12 total attempts before giving up.
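The figure of 12 follows from each provider making one initial attempt plus its own retries. A small helper (hypothetical, for illustration only) makes the arithmetic explicit and also covers chains where providers have different budgets:

```python
def max_total_attempts(retry_budgets):
    """Upper bound on attempts across the chain.

    retry_budgets lists max_retries for the primary, then each fallback.
    """
    return sum(1 + max_retries for max_retries in retry_budgets)


print(max_total_attempts([3, 3, 3]))  # 12: (1 + 3) attempts x 3 providers
print(max_total_attempts([3, 0, 1]))  # 7: 4 + 1 + 2
```

This is an upper bound: a success, a non-retryable error, or exhausted credentials ends a provider's loop early.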

The retry budget is set per-provider in network_config. If your fallback providers have different retry configurations, each uses its own settings.

Real-world scenarios

Scenario 1: Rate limiting with key rotation

OpenAI key 1 hits its rate limit. Bifrost rotates to key 2 on the next retry. No fallback is needed; the request succeeds within the same provider.

Scenario 2: Provider outage

OpenAI is experiencing downtime (returning 503). Bifrost retries with the same key (transient server issue), exhausts max_retries, then fails over to Anthropic. Anthropic succeeds on the first attempt.

Scenario 3: Cascading failure

Both primary and first fallback are down. Bifrost works through each provider’s retry budget sequentially until the second fallback succeeds.

Scenario 4: Cost-sensitive fallback

Primary: a premium model for quality. Fallback: a cost-effective alternative. Governance rules can trigger a budget-exceeded error on the primary, which cascades into the fallback chain.

Scenario 5: Revoked credential

OpenAI key 1 was rotated out-of-band and now returns 401. Bifrost marks key 1 permanently dead for this request and immediately rotates to key 2 (no backoff), which succeeds. Future requests will retry key 1 again — the dead-key set is per-request, not persistent. If every configured key was revoked, Bifrost would return 502 upstream_credentials_exhausted instead of bubbling up the raw 401 (which would falsely suggest the caller’s Bifrost API key is the problem).

Plugin execution

When a fallback is triggered, the fallback request is treated as completely new:

- Semantic cache checks run again (the fallback provider may have a cached response)

- Governance rules apply to the new provider

- Logging captures the fallback attempt separately

- All configured plugins execute fresh for each provider in the chain

If a plugin sets AllowFallbacks = false on the error, the fallback chain is skipped entirely and the original error is returned immediately.

Next steps

- Keys Management - Configure multiple API keys per provider to enable key rotation on retries

- Governance - Use virtual keys and routing rules to control which providers are used

- Observability - Track retry counts and fallback usage in your logs